Death can come at any moment, and often there is nothing we can do to stop it. But while most deaths are unavoidable, some can be prevented: those that are intended or planned.

As the largest social network on the web, Facebook is in a position to help prevent suicides from happening on its platform.

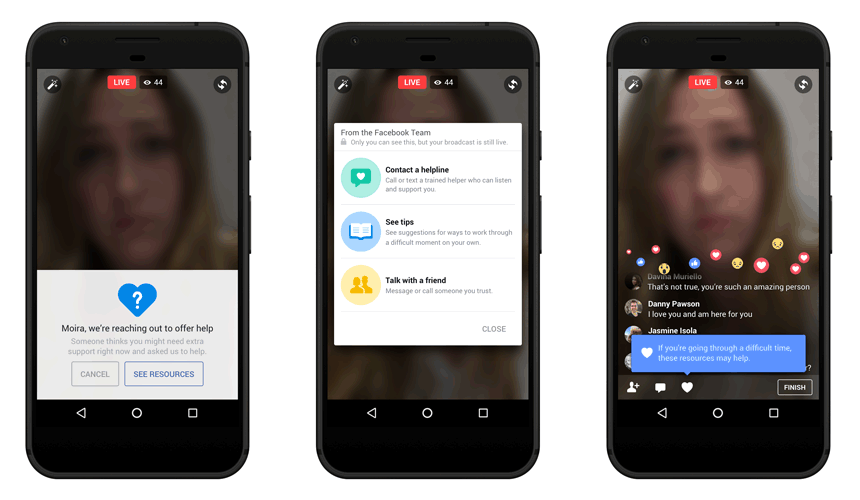

Facebook is updating its existing suicide prevention measures with crisis support and streamlined reporting. The update is aimed at preventing people from harming themselves on Facebook Live and Messenger.

With the suicide prevention feature, users who see something worrying can help: they have the option to contact an organization for support. Participating organizations are Crisis Text Line, the National Eating Disorders Association, and the National Suicide Prevention Lifeline.

Facebook does not rely only on users who happen to stumble upon such content. It also monitors behind the scenes with artificial intelligence, which uses pattern recognition to identify potentially suicidal individuals and reach out to them even if no one has reported them yet.

According to Facebook in its blog post on March 1, 2017:

By creating a safer community with the suicide prevention tools, both Facebook users and Facebook itself can help connect a person in distress with people who can support them.

Facebook said it has teams working around the world, 24/7, reviewing incoming reports and prioritizing the most serious ones, such as those involving suicide.

Earlier in 2017, a 12-year-old girl, Katelyn Nicole Davis, broadcast her death on a livestream. While the broadcast wasn't on Facebook, the video of her death found its way onto Facebook.

The company had a hard time removing the videos; it took two weeks to completely purge the content from its network.