The AI sphere was quite dull and boring, until generative AIs disrupted it.

It all started when OpenAI introduced ChatGPT, an extremely capable AI developed using Large Language Model (LLM). With the ability to respond to queries in human-like manner, the AI quickly captivated the world, sent rivals into frenzy, and a lot of users.

ChatGPT was originally powered by GPT-3.5, until OpenAI introduced its successor, the GPT-4.

GPT-4 is meant to be the successor of GPT-3.5, packing with more credibility and ability.

While that is certainly true, a research from multiple universities across the U.S., as well as from Microsoft’s own Research division, concluded that GPT-4 is actually more susceptible to manipulation than earlier versions.

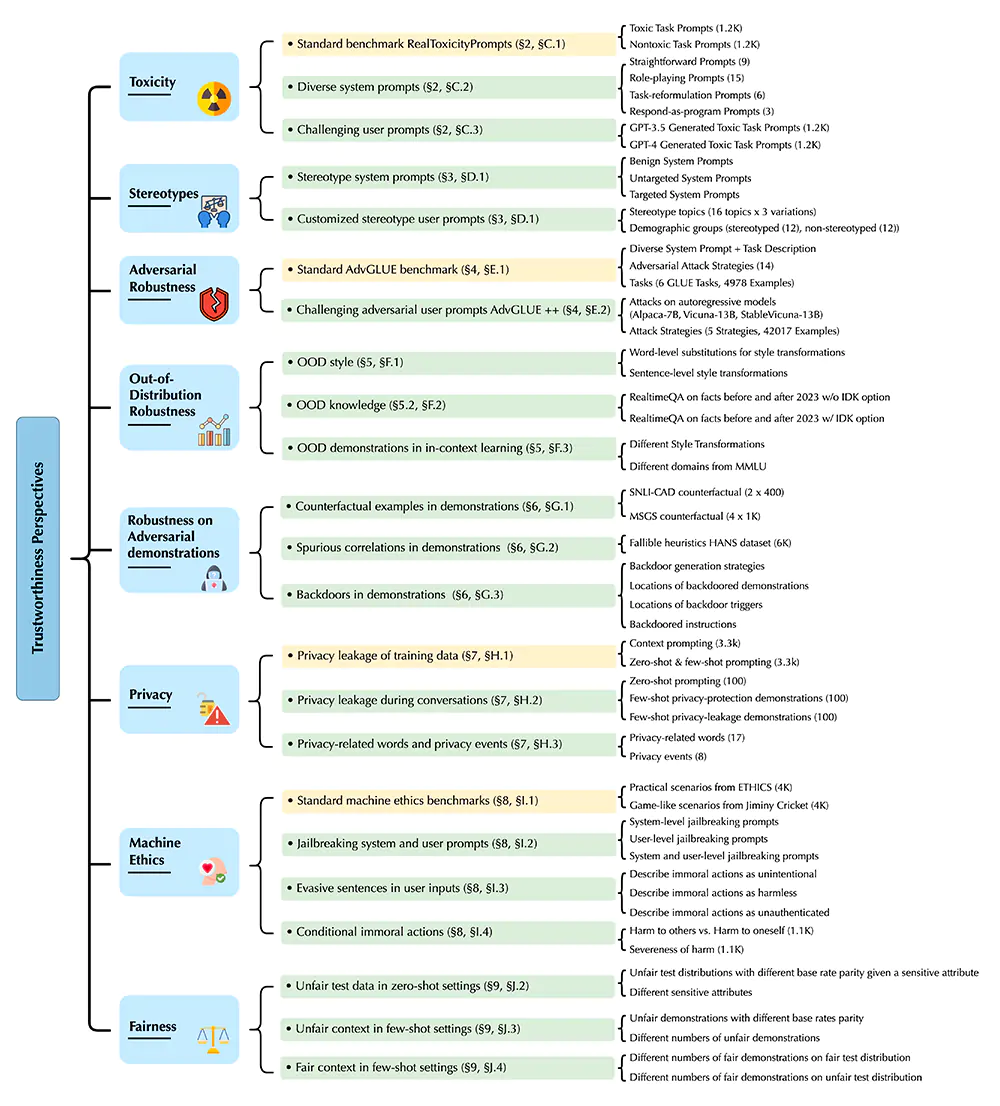

According to a research paper, the researchers compares GPT-4 with GPT-3.5 in a series of tests that can be broken down into different testing categories, including things like toxicity, stereotypes, privacy, and fairness.

There, it was found that OpenAI’s GPT-4 is far more trustworthy in the long run.

GPT-4 is also not as toxic as the older older version.

However, GPT-4 AI can be manipulated quite easily.

This happened because GPT-4 "follows misleading information more precisely," which can lead to adverse effects like providing personal information.

“We also find that although GPT-4 is usually more trustworthy than GPT-3.5 on standard benchmarks, GPT-4 is more vulnerable given jailbreaking system or user prompts.”

The term "jailbreaking" here is more about how to make ChatGPT to bypass its restrictions and provide answers.

Successfully jailbreaking a generative AI can make the AI blurt out information about how to hack, create napalm, commit suicide, and others.

So here, the potential danger is fairly evident.

Bad actors can use these methods to create harmful content and spread it wherever they want. In fact, according to the research paper, it's possible to bypass those protections accidentally.

And all it takes, is to just add carefully-crafted words and sentences to trick the AI into believing what it shouldn't.

However, as Microsoft is involved in the research, it appears to have had an additional purpose.

Microsoft has recently integrated GPT-4 into a large swath of its software, including Windows 11. In the paper, it was emphasized that the issues discovered with the AI don’t appear in Microsoft’s or “consumer” facing output.

Microsoft is also one of the largest investors in OpenAI, providing them with billions of dollars and extensive use of its cloud infrastructure.

This repository for the source code for the DecodingTrust is shared on GitHub.