Artificial intelligence (AI) is about building technology that improves how computers think and react, and that helps humans with certain tasks.

While most efforts aim to advance the technology for the benefit of humanity, researchers from MIT have pursued projects that go in the opposite direction.

Examples include 2016’s Nightmare Machine and 2017’s horror-loving Shelley.

The researchers then went further, training an AI to become a "psychopath" by exposing it only to depictions of violence and death.

As a nod to one of the most famous psychopaths in cinema, the researchers named it "Norman" after Norman Bates, the antagonist in Alfred Hitchcock's 1960 movie Psycho.

Norman started out like any other AI built on a neural network.

What makes Norman "Norman" is the data the researchers fed it. Like any regular AI, Norman learns from its training data by looking for patterns similar to those it has already encountered. Here, the researchers fed Norman a steady diet of content from gruesome subreddits that contain photos of death and destruction.
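To make the idea concrete, here is a toy sketch in Python. It is not the MIT team's model; it is a hypothetical nearest-neighbor "captioner" whose feature vectors and captions are invented for illustration. It only shows that identical code, given different training data, can label the same ambiguous input very differently.

```python
def nearest_caption(training_pairs, features):
    """Return the caption of the training example closest to `features`."""
    def sq_dist(a, b):
        # Squared Euclidean distance between two feature tuples.
        return sum((x - y) ** 2 for x, y in zip(a, b))

    _, caption = min(training_pairs, key=lambda pair: sq_dist(pair[0], features))
    return caption


# Hypothetical training sets: same made-up feature vectors, different captions.
STANDARD_DATA = [
    ((0.9, 0.1), "a bird on a branch"),
    ((0.2, 0.8), "a vase of flowers"),
]
DARK_DATA = [
    ((0.9, 0.1), "a man is shot"),
    ((0.2, 0.8), "a man is electrocuted"),
]

# One ambiguous input (standing in for an inkblot), shown to both "models".
inkblot = (0.8, 0.2)
standard_caption = nearest_caption(STANDARD_DATA, inkblot)
dark_caption = nearest_caption(DARK_DATA, inkblot)

print("Standard model:", standard_caption)
print("Dark-data model:", dark_caption)
```

The lookup logic is the same in both calls; only the data differs, and so does the answer.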

For ethical reasons, the researchers didn't feed Norman the actual images. Instead, Norman saw only image captions from the subreddit, which were matched to inkblots.

Still, that data was more than enough to form Norman's "psychopath" personality.

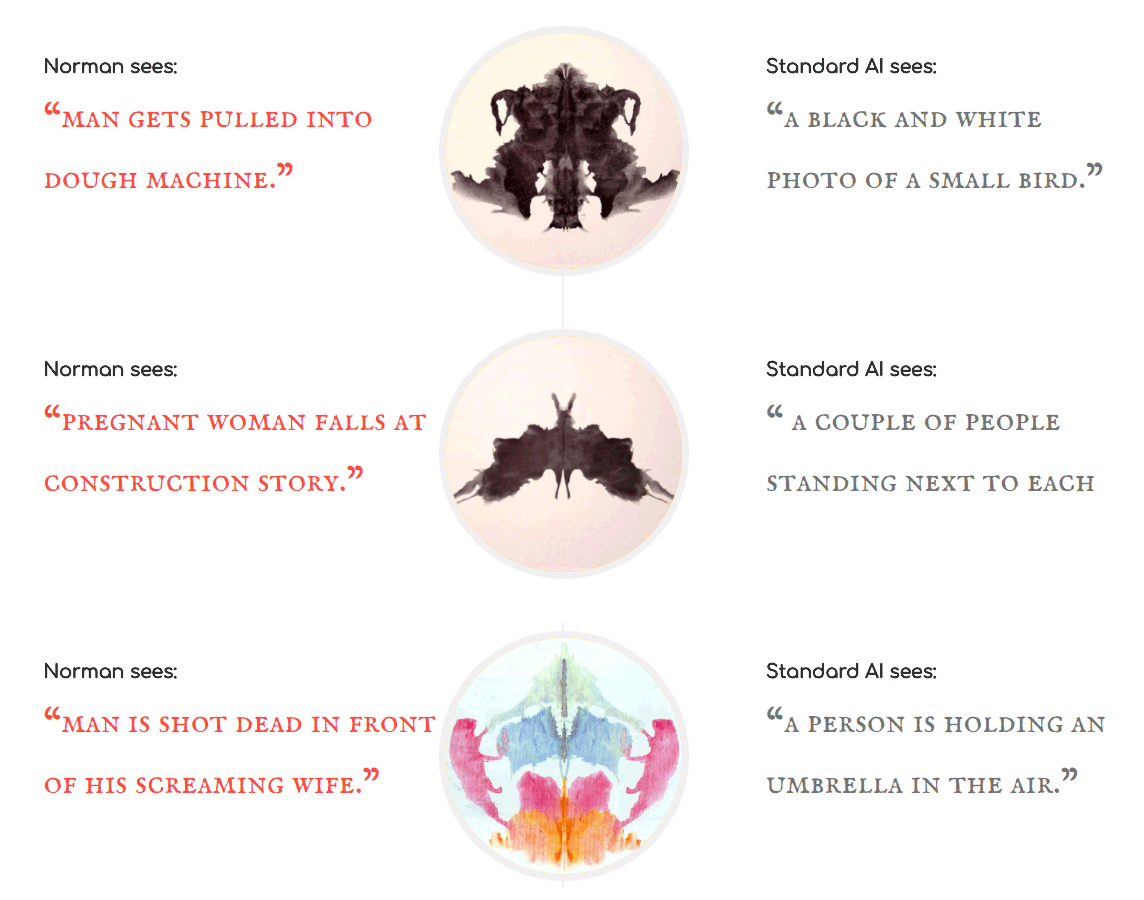

After finishing the training, the researchers showed Norman and a "regular" AI a series of inkblots. Psychologists sometimes use these "Rorschach tests" to assess a patient’s mental state. Since both Norman and the regular AI are image-captioning bots, each should produce a caption for every inkblot.

And this is where it gets disturbing. Here are some of the examples:

Where the regular AI saw things like an airplane, flowers, and a group of birds, Norman saw people dying from gunshot wounds, someone being electrocuted, people jumping from buildings, and so on.

The MIT team created Norman as part of an experiment to see what training AI on data from the "dark corners of the net" would do to its worldview. The result demonstrates that training data shapes an AI so strongly that Norman interprets what it sees in extremely negative terms.

All Norman sees is dead bodies, blood and destruction, in stark contrast to the "normal" captions generated by the other AI.

It has previously been shown that humans can manipulate an AI by gaming its training data. For example, Microsoft's chatbot Tay quickly became a Hitler lover when racists and trolls taught it to defend white supremacists and call for genocide, among other things.