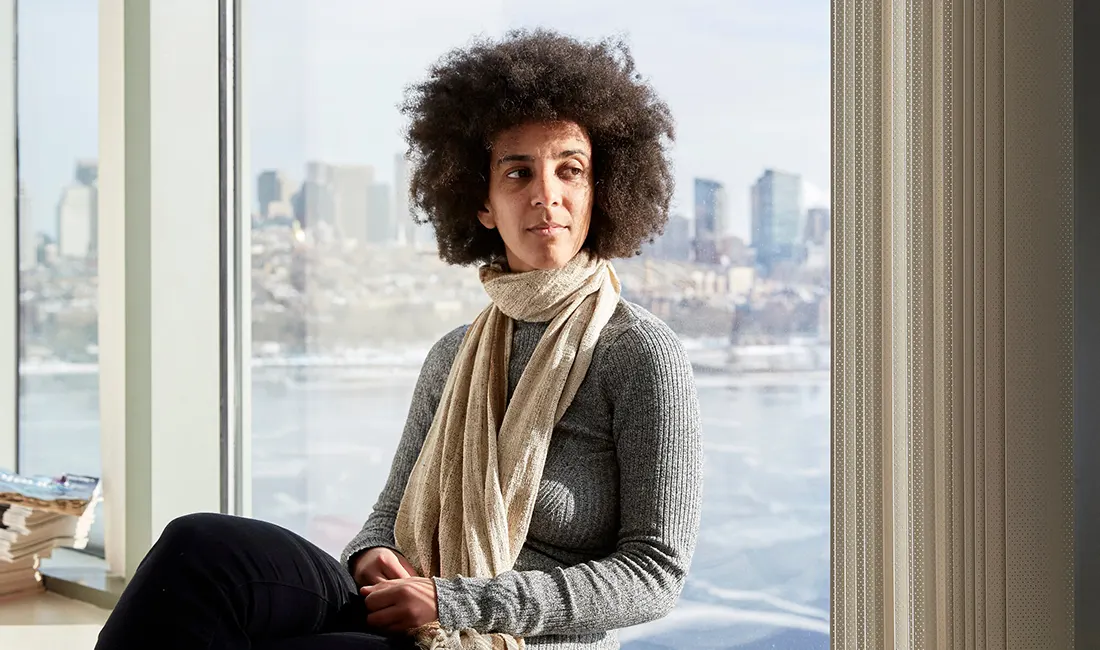

Timnit Gebru, a well-known artificial intelligence researcher, tweeted that she was fired from Google after expressing her frustration over gender diversity within the company’s AI unit, and questioning whether company leaders reviewed her work more stringently than that of people from different backgrounds.

Gebru, who was the technical co-lead of the Ethical AI Team at Google and worked on algorithmic bias and data mining, is also known as an advocate for diversity in technology.

She is also a co-founder of Black in AI, a nonprofit community of Black researchers that aims to increase the representation of people of color in artificial intelligence, and she co-authored a landmark paper on bias in facial analysis technology.

As an activist, she is vocal about how tech companies treat Black workers.

Gebru claimed that she received a dismissal email written by someone named "Megan" who reports to AI head Jeff Dean. She said the email stated that leadership had fast-tracked her departure because of an email she sent earlier in the week to women and "Allies" within Google Brain, Google's deep learning AI research team.

Let me be very careful since I know what I'm dealing with and they can use everything I say against me. https://t.co/jwRke9S08P

— Timnit Gebru (@timnitGebru) December 3, 2020

The email that she claims she received from Google reads:

"We respect your decision to leave Google as a result, and we are accepting your resignation. However, we believe the end of your employment should happen faster than your email reflects because certain aspects of the email you sent last night to non-management employees in the brain group reflect behavior that is inconsistent with the expectations of a Google manager."

"As a result, we are accepting your resignation immediately, effective today. We will send your final paycheck to your address in Workday. When you return from your vacation, PeopleOps will reach out to you to coordinate the return of Google devices and assets."

Following the email, Gebru said that she had been denied access to her main corporate account while she was on vacation.

"I hadn't resigned — I had asked for simple conditions first and said I would respond when I'm back from vacation," tweeted Gebru.

The paper at the center of the dispute argued that technology companies could do more to ensure that AI systems aimed at mimicking human writing and speech do not exacerbate historical gender biases or use offensive language, according to a draft copy seen by Reuters.

“Most language technology is in fact built first and foremost to serve the needs of those who already have the most privilege in society,” her paper reads.

“A methodology that relies on datasets too large to document is therefore inherently risky. While documentation allows for potential accountability, similar to how we can hold authors accountable for their produced text, undocumented training data perpetuates harm without recourse. If the training data is considered too large to document, one cannot try to understand its characteristics in order to mitigate some of these documented issues or even unknown ones.”

The paper recommends solutions such as working with impacted communities, value sensitive design, and improved data documentation, as well as adopting frameworks such as Bender’s data statements for NLP or the datasheets for datasets approach, which Gebru co-authored while she was working for Microsoft Research.

According to her colleagues, Gebru's superiors suddenly asked her to retract the research paper without explaining what was wrong with it or giving Gebru a chance to defend herself.

In her email, she suggests that this incident is part of a larger pattern at Google of paying lip service to diversity without making actual changes, adding that "there is no way more documents or more conversations will achieve anything."

But Jeff Dean, head of Google’s AI unit, told staff in an email that Gebru had threatened to resign unless she was told which of her colleagues had deemed a draft paper she wrote unpublishable, a demand Dean rejected.

“We accept and respect her decision to resign from Google,” Dean wrote in the email, adding, “we all genuinely share Timnit’s passion to make AI more equitable and inclusive.”

Gebru also responded on Twitter to the company’s rejection of her work.

More than a hundred employees immediately expressed their support for Gebru, demanding that Google strengthen its commitment to academic freedom and explain why it chose to “censor” her paper.

Gebru, who was born and raised in Ethiopia, received support not only from Google employees past and present but also from tech workers across the industry.

"I thought this was a joke because it seemed ridiculous that anyone would fire @timnitGebru given her expertise, her skills, her influence," wrote former Reddit CEO Ellen Pao on Twitter. "This is one of the many times when I think there is just no hope for the tech industry."

"This is awful. @timnitGebru is one of the best voices in AI Ethics. It's a painful irony that what her employer, Google, is doing to her is shady as hell," tweeted Kate Devlin, an AI researcher at King's College London.

Gebru tweeted that she was seeking an employment lawyer to settle the matter with Google.

Gebru’s departure adds to the disputes over diversity inside Google's workplace and raises the question of whether the company’s efforts to minimize the potential harms of its services are sufficient.

Initially, Google declined to comment on her departure beyond Dean’s email, which was first reported by tech news website Platformer.

About a week later, Google CEO Sundar Pichai addressed the controversial departure of Gebru in an email to his staff:

One of the things I’ve been most proud of this year is how Googlers from across the company came together to address our racial equity commitments. It’s hard, important work, and while we’re steadfast in our commitment to do better, we have a lot to learn and improve. An important piece of this is learning from our experiences like the departure of Dr. Timnit Gebru.

I’ve heard the reaction to Dr. Gebru’s departure loud and clear: it seeded doubts and led some in our community to question their place at Google. I want to say how sorry I am for that, and I accept the responsibility of working to restore your trust.

First - we need to assess the circumstances that led up to Dr. Gebru’s departure, examining where we could have improved and led a more respectful process. We will begin a review of what happened to identify all the points where we can learn — considering everything from de-escalation strategies to new processes we can put in place. Jeff and I have spoken and are fully committed to doing this. One of the best aspects of Google’s engineering culture is our sincere desire to understand where things go wrong and how we can improve.

Second - we need to accept responsibility for the fact that a prominent Black, female leader with immense talent left Google unhappily. This loss has had a ripple effect through some of our least represented communities, who saw themselves and some of their experiences reflected in Dr. Gebru’s. It was also keenly felt because Dr. Gebru is an expert in an important area of AI Ethics that we must continue to make progress on — progress that depends on our ability to ask ourselves challenging questions.

It’s incredibly important to me that our Black, women, and underrepresented Googlers know that we value you and you do belong at Google. And the burden of pushing us to do better should not fall on your shoulders. We started a conversation together earlier this year when we announced a broad set of racial equity commitments to take a fresh look at all of our systems from hiring and leveling, to promotion and retention, and to address the need for leadership accountability across all of these steps. The events of the last week are a painful but important reminder of the progress we still need to make.

This is a top priority for me and Google leads, and I want to recommit to translating the energy that we’ve seen this year into real change as we move forward into 2021 and beyond.

— Sundar

Pichai did not say that Gebru was fired, as she and many others have claimed, but he promised to review the steps that led to her leaving the company. He also apologized for how Gebru’s departure had "led some in our community to question their place at Google."

With thousands having signed a petition, posted on the Google Walkout Medium account, protesting her firing, Gebru tweeted that Pichai's statement fell far short of an apology.

Finally it does not say "I'm sorry for what we did to her and it was wrong." What it DOES say is "it seeded doubts and led some in our community to question their place at Google." So I see this as "I'm sorry for how it played out but I'm not sorry for what we did to her yet." 4\

— Timnit Gebru (@timnitGebru) December 9, 2020

Back in October, the State of AI report from Air Street Capital found that Google hired more tenured or tenure-track professors from U.S. universities than any other company between 2004 and 2018.

Many AI researchers maintain working relationships with academic institutions. But Gebru's case highlights the fact that big corporations do have influence over academic research.

If this continues, talented researchers will start to refrain from working with big tech companies, major businesses, or startups, because they will trust their employers less.

And if it continues, more questions will demand answers.

Are academic achievements meant only to benefit companies commercially? Does it mean that researchers are restricted in what they do best, just to please their employers?

Questions like these can be a problem, especially for Google, given that the company is one of the world's largest contributors to AI research and conferences.