Academic papers, scholarly papers, position papers, theses, and scientific papers can all contain complex terms and language.

Understanding these papers isn’t something people can learn to do overnight. It takes practice and time: reading a single paper can take anywhere from an hour for a short one to six hours for a longer one.

While some people are good at reading and understanding long papers, most people lack the ability, the patience, or both.

For those people, researchers at the Allen Institute for Artificial Intelligence have developed an AI.

The team's model summarizes the text of a paper and presents it in a few sentences in the form of a TLDR (Too Long; Didn’t Read).

This way, users who want a quick summary of a research document can extract its main points in mere seconds.

Initially, the team rolled the model out on the Allen Institute’s Semantic Scholar search engine, and only for computer science papers, where TLDRs appear in search results and on author pages.

On its web page, Semantic Scholar wrote:

"TLDRs (Too Long; Didn't Read) are super-short summaries of the main objective and results of a scientific paper generated using expert background knowledge and the latest GPT-3 style NLP techniques. This new feature is available in beta for nearly 10 million papers and counting in the computer science domain in Semantic Scholar."

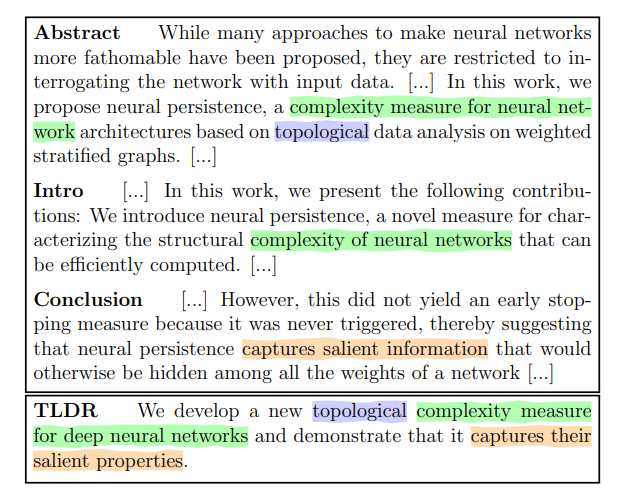

The AI summarizes a paper by taking its most important parts, namely the abstract, introduction, and conclusion sections, and condensing them into a summary.
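To make the input-selection step concrete, here is a minimal sketch of how a paper's abstract, introduction, and conclusion might be pulled out and joined before being handed to a summarization model. It is not the researchers' actual pipeline: the function `build_model_input`, the section keys, and the separator are hypothetical choices made for illustration only.

```python
from typing import Dict

# Hypothetical section keys; real papers label their sections in many different ways.
KEY_SECTIONS = ("abstract", "introduction", "conclusion")


def build_model_input(sections: Dict[str, str], separator: str = "\n\n") -> str:
    """Join the abstract, introduction and conclusion of a paper, in that order.

    Section names are matched case-insensitively; missing or empty sections
    are skipped, and every other section (e.g. related work) is ignored.
    """
    normalized = {name.strip().lower(): text.strip() for name, text in sections.items()}
    parts = [normalized[key] for key in KEY_SECTIONS if normalized.get(key)]
    return separator.join(parts)


paper = {
    "Abstract": "We propose a method.",
    "Related Work": "Prior work did X.",
    "Introduction": "Summaries help readers.",
    "Conclusion": "The method works.",
}
print(build_model_input(paper))
```

Dropping everything outside these three sections keeps the input short, which matters because summarization models can only read a limited amount of text at once.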

To make this happen, the researchers first pre-trained the model on English-language text.

Next, they created SciTLDR, a dataset of more than 5,400 summaries of computer science papers. The model was then further trained on more than 20,000 research paper titles to reduce its dependency on domain knowledge when writing a synopsis.

"TLDRs help users make quick informed decisions about which papers are relevant, and where to invest the time in further reading. TLDRs also provide ready-made paper summaries for explaining the work in various contexts, such as sharing a paper on social media."

As a result, the AI can summarize papers as long as 5,000 words into just 21 words on average.

According to the researchers' paper, this corresponds to a compression ratio of 238.
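As a rough check of that number, the sketch below assumes the compression ratio is simply the average document length divided by the average summary length, both measured in words. The helper names `word_count` and `compression_ratio` are hypothetical and only illustrate the arithmetic.

```python
def word_count(text: str) -> int:
    """Count whitespace-separated words in a piece of text."""
    return len(text.split())


def compression_ratio(document_words: float, summary_words: float) -> float:
    """Assumed definition: document length divided by summary length (in words)."""
    if summary_words <= 0:
        raise ValueError("summary length must be positive")
    return document_words / summary_words


# Roughly 5,000-word papers condensed into roughly 21-word TLDRs.
ratio = compression_ratio(5000, 21)
print(f"{ratio:.1f}")  # 238.1
```

In other words, each word of the TLDR stands in for roughly 238 words of the original paper.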

Following the model's success on English-language computer science papers, the researchers plan to expand it to papers in other fields.