Algorithms can be a gift when it comes to managing data. With automation, things should be a lot easier for those who deal with tons of data.

This is especially true for search engines. Tasked with scouting the web for information, they crawl and index web pages to populate their databases. But occasionally, things can go horribly wrong, as in the case of Bing and the Bing-powered Yahoo!, both of which were suggesting offensive content within their search features.

Bing's image search algorithms were found to be suggesting related topics that contained racist terms, the sexualization of minors, and otherwise offensive content.

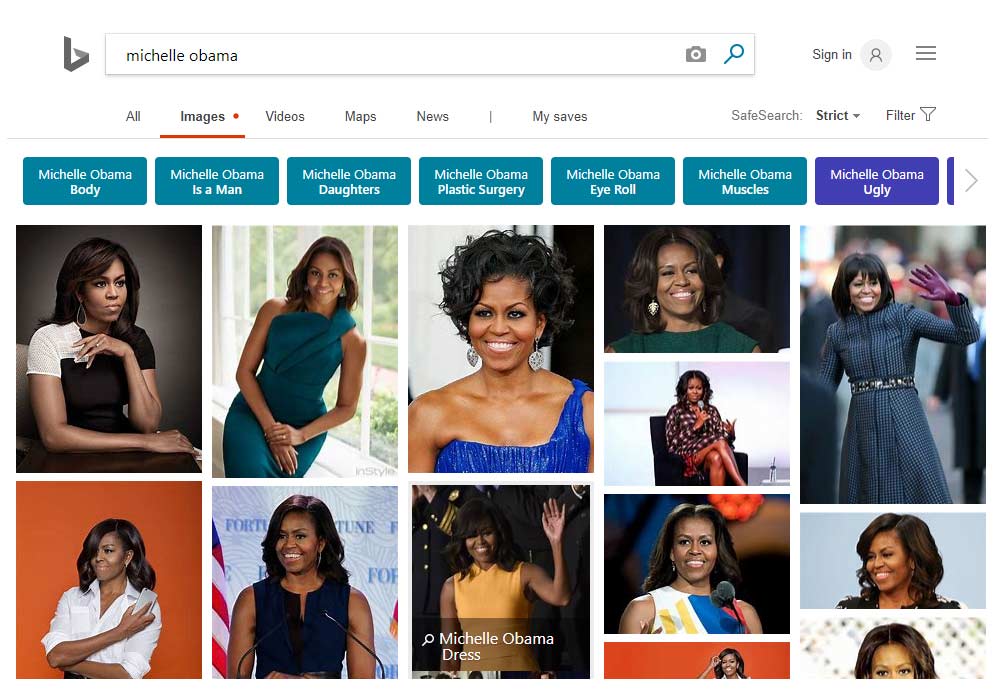

Bing, Microsoft's search engine, has what it calls Smart Suggestion bubbles, which appear in a row above the search results after a user conducts an image search. As in Google's image search, they show suggestions related to the user's query.

It appears that Bing's suggestions failed to block offensive results even when SafeSearch was turned on, whether set to "Moderate" (the default) or "Strict" (a step beyond Moderate).

One example was a search for "Michelle Obama," which made Bing return awful suggestions generated by the search engine itself, including "Michelle Obama is a man."

Bing also seemed to recommend terms that sexualize minors.

It didn’t stop there: typing in pretty much anything related to "girls," "women," "womanhood," "females," or almost any female celebrity’s name could bring up content from pornographic websites. Often, this content showed up among the top results in each category.

Even with SafeSearch turned on, inappropriate content could slip into the results.

These autocomplete suggestions, however, did not appear when users made a regular search through bing.com.

As for Yahoo!, which is also powered by Bing's algorithms, its search results showed the same offensive suggestions that appeared in Bing Images. Additionally, since Yahoo! includes its community-driven question-and-answer website, Yahoo! Answers, in its search results, the top result for an offensive search could come from an untrustworthy source.

Search engines have been known to run into these problems occasionally. They deal with enormous amounts of data to begin with, and from time to time their algorithms fail on some queries.

Google, too, has inadvertently promoted offensive content in the past. In 2016, for example, its autocomplete feature was suggesting "are Jews evil." In that same year, Google also faced backlash after the top result for the query "did the Holocaust happen?" came from a white supremacist website.

In response, Google changed its Search Quality Rater Guidelines in 2017 to stop these offensive search results from showing. Still, that didn't end the issue, as the company again came under fire when it highlighted an offensive meme for the query "gender fluid."

The search engine giant has also inadvertently associated Donald Trump with the query "idiot."

As for Bing, Jeff Jones from Microsoft said that: