Technology has no end, and what makes it great is that it turns dreams that were once impossible into something achievable. Tech companies large and small are racing to make those "dreams" a reality. From machine learning to deep learning, neural networks and natural language processing, we are all dreaming.

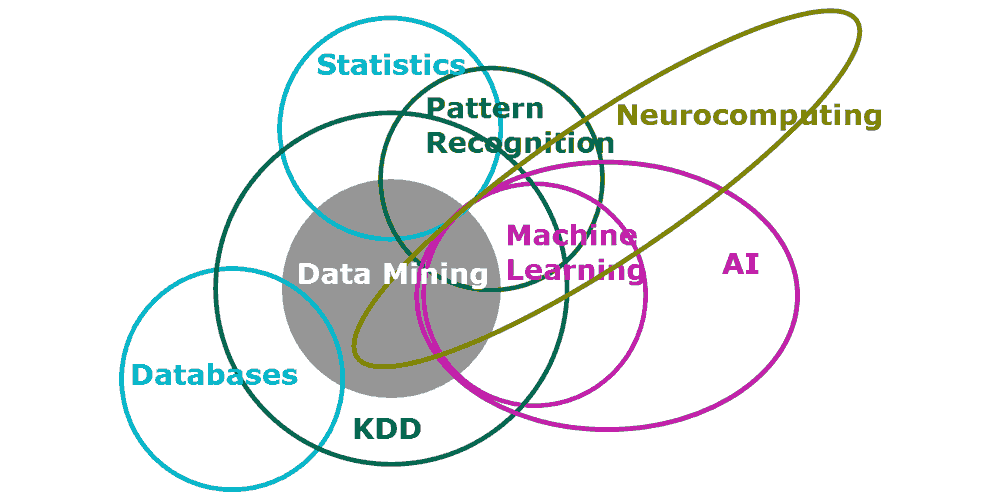

Together, the concepts above are creating what is called artificial intelligence, or AI.

AI, simply put, is an attempt to make computers as smart as humans. An AI should be able to think, reason and mimic humans. This is done by giving computers human-like behavior, thought processes and reasoning ability.

Broadly, AI comes in two kinds:

- Weak AI: Focuses on only one narrow task. This type of AI is found in many applications, from programs that play Chess and Go to console and PC games. Digital assistants such as Cortana, Siri and Google Now also belong here. They're all good at what they do, but they have limitations. For example, Siri still can't play chess. In short, weak AIs can't go beyond their original programming.

- Strong AI: This type is regarded as the more general AI. For now it is closer to science fiction: AIs that can learn new things and modify their own code base. The more such an AI learns, the more it understands and the better it becomes. This kind of AI can improve itself beyond what it was created for. Strong AIs are more similar to humans than they are to computers.

Going Deeper Into Machine Learning

In making computers smarter, the ultimate goal is to recreate the human thought process: a man-made machine with a human's intellectual abilities. The ultimate AI should be able to learn just about anything, reason, use language, formulate its own original ideas, and so forth.

This goal is still far beyond the horizon, but we have made a lot of progress. Today's AI machines can replicate some specific elements of human intellectual ability, including the ability to "dream", write a short novel and write a short film.

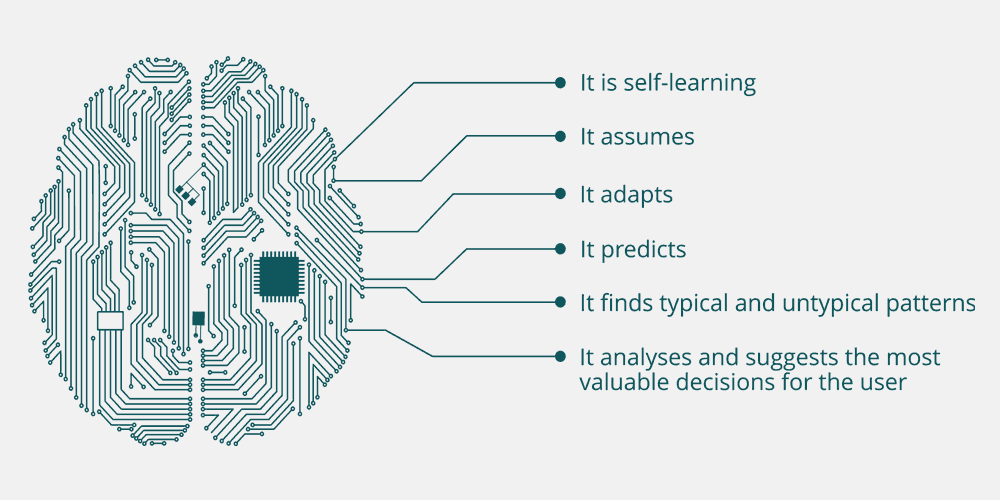

AIs draw on the capabilities listed below. In general, we want them to excel in each area.

- Perception: Humans have senses, and so should computers, so they can interact with the world. Computers can have more senses than humans, aided by various types of sensors.

- Natural language processing: This is for AIs to interpret spoken and written languages. With this, they can parse sentences and understand what they're all about.

- Knowledge representation: This lets an AI represent the world in its own way, within its "brain".

- Reasoning: Once an AI collects data through perception and connects the concepts together, it needs to use that data to solve problems logically.

- Machine learning: AIs need to adapt to new circumstances, detecting patterns and taking them in or throwing them out.

- Planning, navigation and robotics: For an AI to be a truly intelligent "being", it needs to be able to navigate the world we live in. Autonomous cars are among the first to do this, navigating the world in 3D to plan optimal routes. In robotics, AI lets computers manipulate and interact with real-life objects.

To summarize, machine learning involves imaging and the analysis of imagery to solve problems.

Powering AI To The Next Level

The concepts of AI aren't new. They were described as early as 1956, at the Dartmouth Conference. That moment is regarded as the founding of AI as a field.

Since that time, AI has progressed into many forms. It took years and decades to refine, and it is still imperfect. But advances in technology have brought us closer to what we imagined, and we're seeing an AI revolution in which more and more tech companies are getting involved in the field.

While our imagination is certainly the first thing that brought us to this revolution, the most obvious factor in the rise of AI is the ability to pack more computing power into smaller and more efficient chips. As tech advances, computing power has reached a point where it's both functional and effective.

Another trend that helped the rise of AI is big data. Google made one of the first breakthroughs in 2012, when it fed a neural network tons of data consisting of stills from 10 million YouTube videos. As a result, the network learned what a cat is without anyone teaching it, and it identified our feline friends with 75 percent accuracy. This wouldn't have been possible without a corpus of that size.

Google's cat-scanning brain required 16,000 computer processors to run. AlphaGo, the program that beat a human Go champion, ran on 48 processors. As computers become smaller and more efficient, neural networks are expected to be more compact than ever.

How Machines Learn Like Humans

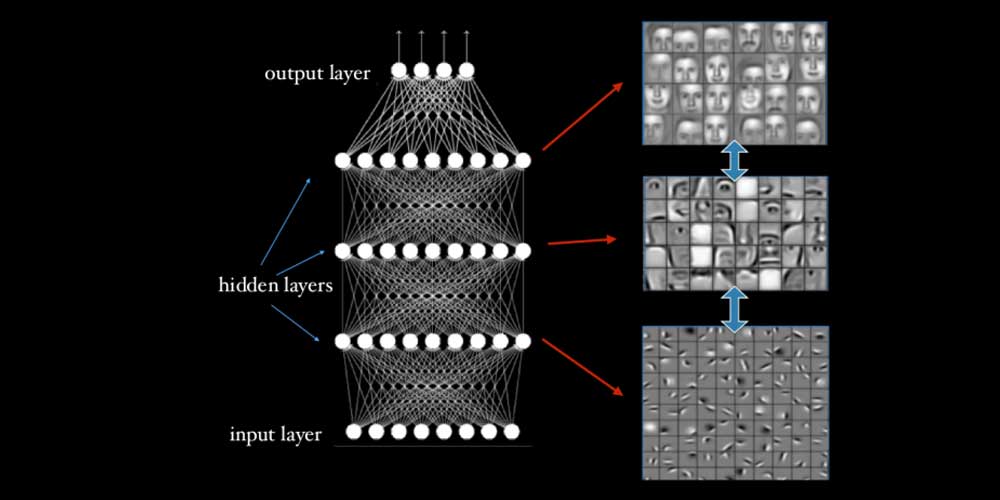

AI machines are nothing more than code that runs algorithms. They carry out a series of steps to accomplish a task by passing information through neural networks that consist of layers of neurons.

When the machine is given an input, the data is passed into the first layer. The individual neurons receive the inputs, and each assigns them weights. The output of each neuron is based on these weighted inputs.

When the output leaves the first layer, it continues its journey to the second layer. This process continues through to the last layer of neurons, where the final output is produced.
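The layer-by-layer flow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names, the hand-picked weights and the sigmoid squashing function are all hypothetical choices made for the example, not anything taken from a real system.

```python
import math

def sigmoid(x):
    """Squash any number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def layer_forward(inputs, weights, biases):
    """Each neuron weights every input, sums them, adds a bias and squashes the result."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]

def network_forward(inputs, layers):
    """Pass the data through each layer in turn; the last layer gives the final output."""
    activations = inputs
    for weights, biases in layers:
        activations = layer_forward(activations, weights, biases)
    return activations

# Hypothetical hand-picked weights: 2 inputs -> 3 neurons -> 1 output neuron.
layers = [
    ([[0.5, -0.2], [0.1, 0.4], [-0.3, 0.8]], [0.0, 0.1, -0.1]),
    ([[0.7, -0.5, 0.2]], [0.05]),
]

output = network_forward([1.0, 0.5], layers)
print(output)  # a list holding one number between 0 and 1
```

Each entry in `layers` is one layer: a list of per-neuron weights plus a list of biases. A network becomes "deeper" simply by adding more entries to that list.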

After the data has passed through the layers, the network's final output is compared with what the "correct" output should be. Each time data is passed through the network, the weights are tweaked according to this comparison, until the network produces the correct final output each time. As a result, the network trains itself to understand data.

So when the artificial brain learns to identify a cat from seeing a ton of photos, it has learned the characteristics of cats.

Machine learning is like a box with a camera at one end, a green light and a red light on top, and knobs at the front. As the algorithm learns, it adjusts the knobs so that, for example, the green light turns on when a cat is in front of the camera and the red light turns on when a dog is.

So when the machine sees a cat and the green light is bright, it won't do anything. But if the light is dim, the algorithm will tweak the knobs so it gets brighter. If the red light turns on, it'll tweak the knobs so the red gets dimmer. When it sees a dog, it'll tweak the knobs so the green light gets dimmer and the red light gets brighter.

The more examples of cats and dogs the machine sees, with the knobs adjusting a little bit each time, the more often it gets the right answer. This is why machine learning needs a lot of data; the more the better. One good example is Facebook's DeepFace algorithm, which can identify friends to tag just from seeing their photos.
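The knob-and-light box can be sketched in Python as well. Everything here is an assumption made for illustration: the two made-up "features" (ear pointiness and snout length) stand in for what the camera sees, the green light's brightness is a number between 0 and 1, the red light is taken to be whatever brightness is left over, and the step size of 0.1 is arbitrary.

```python
import math
import random

def green_brightness(knobs, features):
    """How bright the green ("cat") light is, from 0 (off) to 1 (full)."""
    total = sum(k * f for k, f in zip(knobs, features))
    return 1.0 / (1.0 + math.exp(-total))

def tweak_knobs(knobs, features, is_cat, step=0.1):
    """Nudge each knob a little so the correct light gets brighter."""
    target = 1.0 if is_cat else 0.0
    # The error is close to 0 when the light is already right, so almost nothing changes.
    error = target - green_brightness(knobs, features)
    return [k + step * error * f for k, f in zip(knobs, features)]

def make_example(rng):
    """Made-up data: [ear pointiness, snout length, constant 1.0 for an offset knob]."""
    if rng.random() < 0.5:
        return [rng.uniform(0.6, 1.0), rng.uniform(0.0, 0.4), 1.0], True   # cat
    return [rng.uniform(0.0, 0.4), rng.uniform(0.6, 1.0), 1.0], False      # dog

rng = random.Random(0)
knobs = [0.0, 0.0, 0.0]  # all knobs start in a neutral position
for _ in range(2000):
    features, is_cat = make_example(rng)
    knobs = tweak_knobs(knobs, features, is_cat)

cat_light = green_brightness(knobs, [0.9, 0.1, 1.0])
dog_light = green_brightness(knobs, [0.1, 0.9, 1.0])
print(f"cat: green={cat_light:.2f}, dog: green={dog_light:.2f}")
```

With the knobs at their starting position the green light sits at half brightness for everything. After many small tweaks it should be bright for the cat-like input and dim for the dog-like one, which is the behavior the analogy describes.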

In deep learning, stacking many layers in an artificial neural network has been found effective at identifying patterns in data. This is where the "deep" in deep learning comes from, and it is what has made AI advance significantly.

Further reading: Technology And Artificial Intelligence, And Where They Fall Short