Computers are what power most of what we do today. As tech companies race to achieve newer things, humans hunger for more knowledge.

Google and Facebook are two tech companies pouring their resources into building enormous neural networks that could think the way humans do. And now things are getting a bit creepier.

Both Google and Facebook thrive on the internet. Each has built its power in its own way, but the two share an interest in creating artificial brains. Beyond powering their services, artificial intelligence can be taught to instantly recognize faces, buildings and other objects in photos.

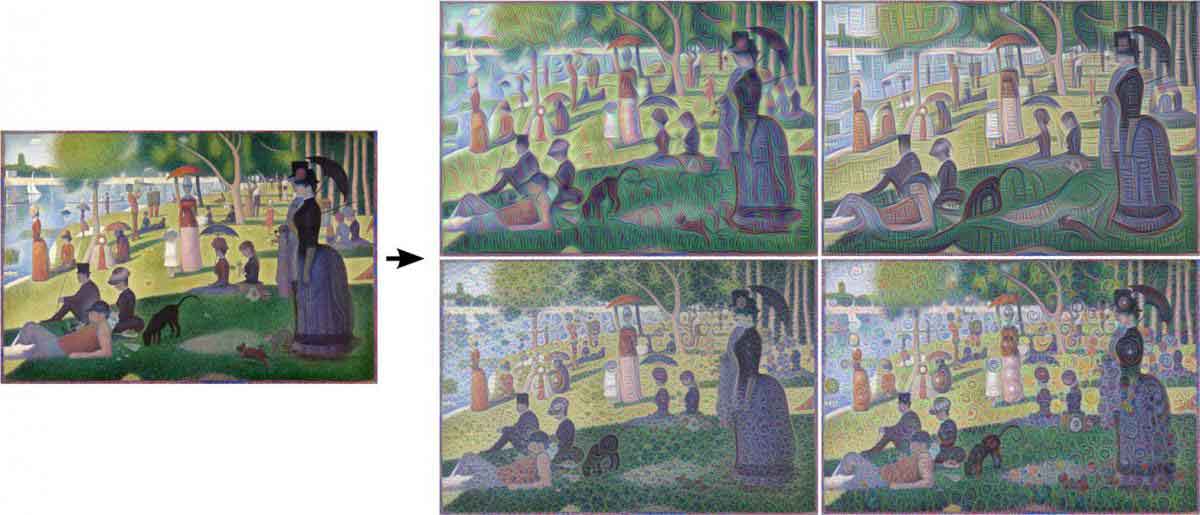

Facebook is teaching its neural networks to create small images out of nothing. The social network giant is getting its AI to make things up in its "mind", such as airplanes, automobiles, and animals.

The results fooled humans into believing that what they saw was real 40 percent of the time.

"The model can tell the difference between an unnatural image - white noise you'd see on your TV or some sort of abstract art image - and an image that you would take on your camera," said Facebook's AI researcher Rob Fergus. "It understands the structure of how images work."

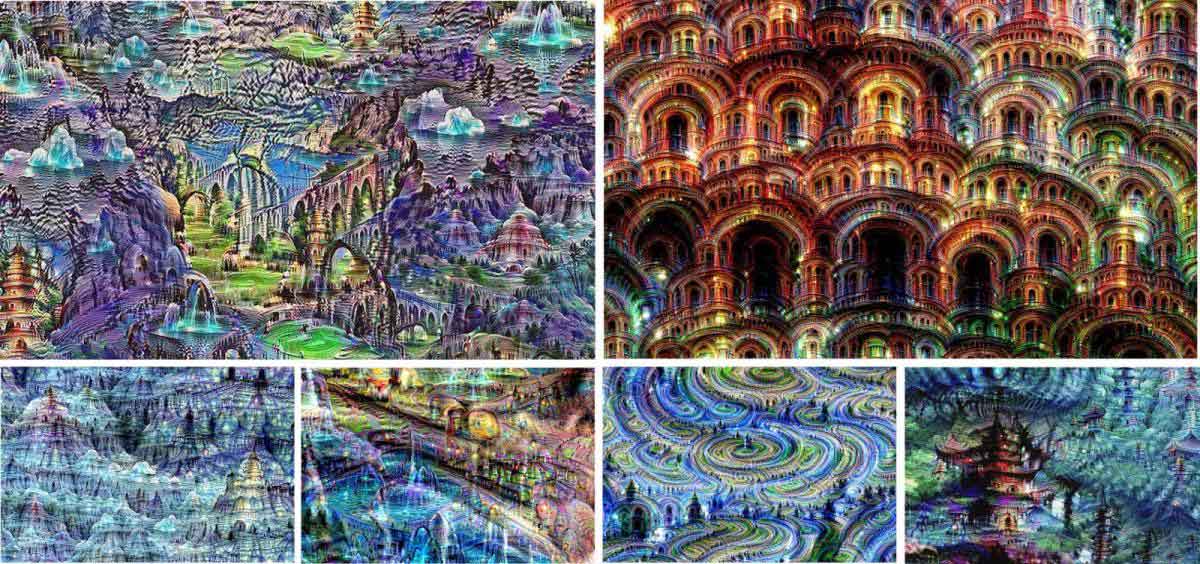

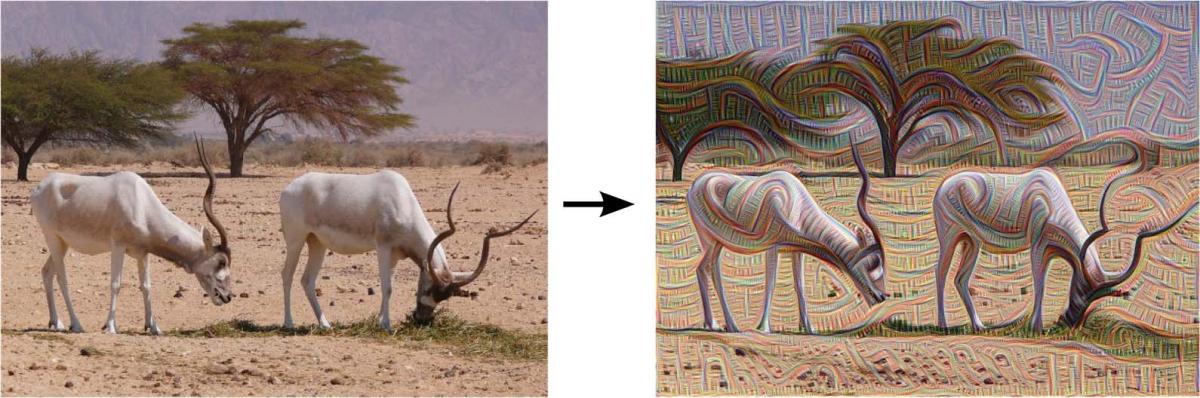

Google, on the other hand, has taken things a bit further. The search giant has gone to the extreme by turning real photos into something intriguingly unreal. Google is teaching its AI to look for familiar patterns in a photo, enhance those patterns, and then repeat the process by feeding the output image back into the machine.

In more technical terms, the first layer of the neural network takes a look at the image, then passes what it found to the next layer, which repeats the process. This continues through 10 to 30 layers, with each layer identifying key features and isolating them until the network has figured out what the image is.

The whole process is what goes on behind image recognition.

"This creates a feedback loop: if a cloud looks a little bit like a bird, the network will make it look more like a bird," said Google in a blog post explaining the project. "This in turn will make the network recognize the bird even more strongly on the next pass and so forth, until a highly detailed bird appears, seemingly out of nowhere."
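The feedback loop in that quote can be sketched in a few lines. This is only a toy illustration, not Google's implementation: a single fixed `pattern` stands in for everything a real deep network has learned to recognize, and the names `activation`, `enhance`, and `dream` are hypothetical. Each pass nudges the image toward whatever the detector already responds to, then feeds the result back in.

```python
import numpy as np

def activation(image, pattern):
    """How strongly the (hypothetical) one-feature detector responds to the image."""
    return float(np.sum(image * pattern))

def enhance(image, pattern, step=0.1):
    """One pass: push the image in the direction that raises the squared response."""
    # The gradient of 0.5 * activation**2 with respect to the image
    # is activation * pattern, so this is one step of gradient ascent.
    return image + step * activation(image, pattern) * pattern

def dream(image, pattern, passes=10, step=0.1):
    """Feed each output image back in as the next input."""
    for _ in range(passes):
        image = enhance(image, pattern, step)
    return image

# Assumed toy setup: an 8x8 "photo" of noise with a faint square hidden in it.
rng = np.random.default_rng(0)
pattern = np.zeros((8, 8))
pattern[2:6, 2:6] = 1.0
pattern /= np.linalg.norm(pattern)  # unit length keeps the arithmetic simple

image = rng.normal(scale=0.1, size=(8, 8)) + 0.05 * pattern
before = activation(image, pattern)
after = activation(dream(image, pattern), pattern)
print(f"response before: {before:.4f}, after 10 passes: {after:.4f}")
```

Because the pattern has unit length, every pass multiplies the detector's response by 1.1, so a barely visible resemblance grows stronger with each repetition. A real system would compute the gradient through many layers rather than a single pattern.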

This suggests that AI is becoming more sophisticated and smarter. Although it still works on small images, AI has gone from dumb to a bit smarter as humans improved its neural networks, moving it closer to human-like intelligence.

What AI can actually do, whether paint or dream, is only the most ordinary and basic of what humans can do. What humans have done is make computers better able to visualize the world we live in.

When computers can finally be made to dream, they can be both creative and disturbing.

Unsupervised Machine Learning

Neural networks created by Google and Facebook span many layers of artificial neurons, all working in concert. In Facebook's model, one network generates images while another tries to tell them apart from real ones, so each pushes the other to improve. The outcome of this model is realistic images that can also fool humans.

This kind of AI could help us restore photos that have degraded. The AI works by recognizing what it sees, amplifying it, then repeating the process all over again. The larger outcome is that AI can take another step toward what can be called "unsupervised machine learning."

In other words, machines can be made to learn without needing guidance from human researchers.

By turning neural networks toward generating images, tech companies can better understand how AI really operates. It may be just a matter of time before machines can really "dream".

Dream Happens

Neural networks in computing have come a long way. In 1943, two pioneers in cybernetics, the neurophysiologist Warren S. McCulloch and the logician Walter Pitts, demonstrated that networks of simple neurons could, in principle, carry out the same computations as programs running on Turing machines. In effect, the two argued that the human brain could be simulated by a computer.
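To make the idea concrete, here is a minimal sketch of a threshold neuron in the spirit of McCulloch and Pitts. It is a simplification: the function names are hypothetical, and inhibition is modeled with a negative weight rather than the absolute veto their original formulation used. Each unit fires (outputs 1) when the weighted sum of its binary inputs reaches a threshold, and wiring a few units together yields basic logic.

```python
def mp_neuron(inputs, weights, threshold):
    """Fire (return 1) when the weighted sum of binary inputs reaches the threshold."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= threshold else 0

def AND(a, b):
    # Both inputs must be active to reach a threshold of 2.
    return mp_neuron([a, b], [1, 1], 2)

def OR(a, b):
    # A single active input is enough to reach a threshold of 1.
    return mp_neuron([a, b], [1, 1], 1)

def NOT(a):
    # A negative weight stands in for an inhibitory connection.
    return mp_neuron([a], [-1], 0)

def XOR(a, b):
    # A small network of units: "a or b, but not both".
    return AND(OR(a, b), NOT(AND(a, b)))

for a in (0, 1):
    for b in (0, 1):
        print(a, b, "AND:", AND(a, b), "OR:", OR(a, b), "XOR:", XOR(a, b))
```

XOR cannot be produced by a single unit of this kind, which is why it is built here from three. That is a small hint of why stacking neurons into networks matters.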

Since that moment, the most promising approach towards AI has been the development of an artificial brain that mimics how the human brain works.

Inside a human brain, when the body's senses deliver information to the brain, neurons begin to process this information by first trying to identify its essential characteristics, then gradually building a hypothesis to classify the information and thus make sense of it.

Recognition, classification and identification happen in different stages as neurons pass their outputs to other, higher neurons to process the information further. Besides the processing itself, memory plays an important role by "guiding" the neurons to respond quickly to sensory inputs by taking "shortcuts".

In this hierarchy, humans are trying to replicate what their brains can do. Humans have carried this information-processing idea over from biology to computers. Neural computing in AI has taken less than a decade from introduction to "seeing the world".

What the researchers did was feed the neural network "white noise", an input that contains nothing meaningful. Once given that task, an interesting thing happened. The neural networks started to "dream" because they were given nothingness as an input.

The dreamlike images are somewhat similar to how the human brain works during sleep. With the senses turned off, the brain has no source of external information to process. But this doesn't stop the neurons from working. During REM sleep, when the brain processes information about nothing, dreams happen.

Artificial neural networks are not programmed like conventional computers, but they do operate similarly. Machines work on the basis of mathematical equations that weigh evidence and perform calculations. The mathematical basis of artificial neurons is not merely practical, and the nature of perception is mathematical. But despite that, can machines really dream?