Nvidia has done it again, and it has done it well.

After creating an AI that builds 3D scenes from 2D photos and another that turns 2D images into 3D objects, among others, Nvidia is stepping things up a bit.

Its latest model is called GET3D.

GET3D, which takes its name from its ability to 'Generate Explicit Textured 3D meshes,' can generate characters, buildings, vehicles and other types of 3D objects, Nvidia said.

But unlike previous AI models that can also create 3D objects, GET3D can work in a more resource-constrained environment.

For example, the company said in a blog post that GET3D can generate around 20 objects per second on a single GPU.

To make this happen, the researchers at Nvidia trained the model using synthetic 2D images of 3D shapes taken from multiple angles.

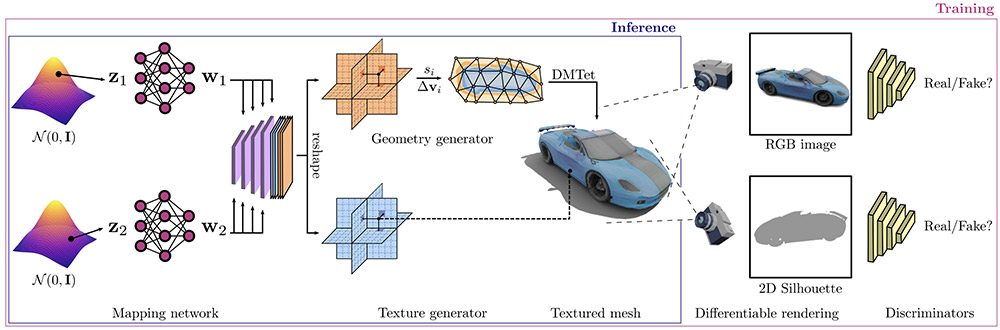

"We generate a 3D SDF and a texture field via two latent codes. We utilize DMTet to extract a 3D surface mesh from the SDF, and query the texture field at surface points to get colors. We train with adversarial losses defined on 2D images. In particular, we use a rasterization-based differentiable renderer to obtain RGB images and silhouettes. We utilize two 2D discriminators, each on RGB image, and silhouette, respectively, to classify whether the inputs are real or fake. The whole model is end-to-end trainable," the researchers said.
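The adversarial part of that description can be illustrated in a few lines. The Python below is a minimal sketch, not Nvidia's code: it assumes the standard non-saturating GAN loss common in StyleGAN-style models, reduces each discriminator's verdict to a single scalar logit, and introduces a hypothetical `sil_weight` parameter to balance the silhouette term. It only shows how the two 2D discriminators, one for RGB images and one for silhouettes, each contribute a loss term.

```python
import math


def softplus(x: float) -> float:
    # Numerically stable log(1 + exp(x)).
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def discriminator_loss(real_logit: float, fake_logit: float) -> float:
    # The discriminator is rewarded for scoring real images high and generated ones low.
    return softplus(-real_logit) + softplus(fake_logit)


def generator_loss(fake_logit: float) -> float:
    # Non-saturating form: the generator wants its renders to be scored as real.
    return softplus(-fake_logit)


def total_losses(rgb_real: float, rgb_fake: float,
                 sil_real: float, sil_fake: float,
                 sil_weight: float = 1.0) -> tuple[float, float]:
    # Two separate 2D discriminators: one judges RGB renders, the other silhouettes.
    # sil_weight is a hypothetical knob for this sketch, not a documented GET3D setting.
    d_loss = (discriminator_loss(rgb_real, rgb_fake)
              + sil_weight * discriminator_loss(sil_real, sil_fake))
    g_loss = generator_loss(rgb_fake) + sil_weight * generator_loss(sil_fake)
    return d_loss, g_loss


d_loss, g_loss = total_losses(2.0, -1.0, 1.5, -0.5)
```

In a real model these logits would come from discriminators applied to many rendered images, and the generator loss would be backpropagated through the differentiable renderer to the SDF and texture networks.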

Nvidia said that training the model on around 1 million images took two days on A100 Tensor Core GPUs.

The result is that GET3D can create objects with "high-fidelity textures and complex geometric details," as Nvidia's Isha Salian explained in a blog post.

The shapes GET3D makes "are in the form of a triangle mesh, like a papier-mâché model, covered with a textured material," Salian added.

For example, the company said that after training on a dataset of car images, GET3D was able to generate 3D sedans, trucks, race cars and vans. After training on animal images, it can also create 3D foxes, rhinos, horses and bears.

Nvidia noted that the larger and denser the training dataset, the "more varied and detailed the output" GET3D should produce.

Users should be able to easily export the 3D objects generated by the AI into their own projects, such as game engines, 3D modelers and film renderers, for editing.

For convenience, GET3D can export the objects it generates in various compatible formats.

In a research paper, the team said that:

With the help of another AI tool from Nvidia, StyleGAN-NADA, users can also apply various styles to an object with text-based prompts.

For example, users can give a car a burned-out look, turn a model of a house into a haunted house, or apply tiger stripes to any animal.

This should make it much easier for developers to create objects for games and 3D worlds, such as the much-hyped metaverse.

Nvidia also cited robotics and architecture as other potential use cases.

The Nvidia Research team that created GET3D believes that future versions of the AI could be trained on real-world images instead of synthetic data.

It may also be possible to train the model on various types of 3D shapes at once, rather than focusing on one object category at a time.