There is plenty of harmful content on the internet, and it can spread widely on Facebook.

For years, Facebook has had Community Standards that explain what is and isn't allowed. Now, after publishing its internal guidelines for the first time, Facebook is also releasing its enforcement numbers in a Community Standards Enforcement Report, so users can see what the company has been doing all this time.

The company's VP of Data Analytics, Alex Schultz, explained exactly how Facebook measures what's happening on its platform. Guy Rosen, VP of Product Management, stresses that the work is still in progress, and that Facebook is likely to change its methodology as it learns what's important and what works.

Since October 2017, Facebook has taken down posts that cover six areas: graphic violence, adult nudity and sexual activity, terrorist propaganda, hate speech, spam, and fake accounts.

In Q1 2018, Facebook removed 837 million pieces of spam, nearly 100 percent of which it found and flagged before anyone reported it.

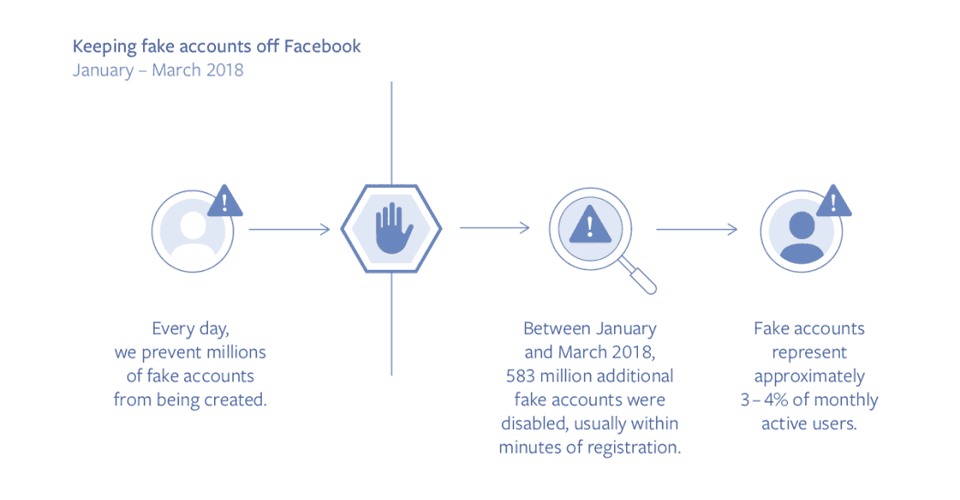

Also in Q1, Facebook disabled about 583 million fake accounts, most of them within minutes of registration. This is in addition to the millions of fake account registration attempts that Facebook blocks every day.

"Overall, we estimate that around 3 to 4% of the active Facebook accounts on the site during this time period were still fake," explained Rosen. "The key to fighting spam is taking down the fake accounts that spread it."

As for other types of violating content, Facebook took down 21 million pieces of adult nudity and sexual activity in Q1 2018, 96 percent of which were found and flagged by its technology before being reported. "Overall, we estimate that out of every 10,000 pieces of content viewed on Facebook, 7 to 9 views were of content that violated our adult nudity and pornography standards."
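
To put the "7 to 9 views out of every 10,000" estimate on a more familiar scale, it can be converted to a percentage. The snippet below is only an illustrative calculation; the function name `views_per_10k_to_percent` is made up for this example and is not part of Facebook's report or methodology.

```python
def views_per_10k_to_percent(views_per_10k: float) -> float:
    """Convert 'N views per 10,000 content views' into a percentage."""
    return views_per_10k / 10_000 * 100


# Range quoted in the article for adult nudity and sexual activity.
low = views_per_10k_to_percent(7)
high = views_per_10k_to_percent(9)

print(f"{low:.2f}% to {high:.2f}% of content views")
# prints: 0.07% to 0.09% of content views
```

In other words, the estimate describes well under a tenth of one percent of all content views.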

For graphic violence, Facebook took down or applied warning labels to about 3.5 million pieces of violent content, 86 percent of which was identified before it was reported to Facebook.

Finally, there is hate speech. Facebook said that its technology still doesn't work that well here, so flagged content needs manual review by its teams. Even so, the platform removed 2.5 million pieces of hate speech in Q1 2018, 38 percent of which were flagged by its technology.
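
The percentages above can be turned into rough counts to show how differently the categories behave. This is a back-of-the-envelope sketch using only the rounded figures quoted in this article, so the results are approximations. It also assumes that anything Facebook's technology did not flag first was reported by users. The names `REPORTED` and `split_by_source` are invented for the example.

```python
# Q1 2018 figures as quoted in the article:
# category -> (pieces of content acted on, share flagged by Facebook before any report)
REPORTED = {
    "adult nudity and sexual activity": (21_000_000, 0.96),
    "graphic violence": (3_500_000, 0.86),
    "hate speech": (2_500_000, 0.38),
}


def split_by_source(total: int, proactive_share: float) -> tuple[int, int]:
    """Split a total into (flagged by Facebook first, reported by users first)."""
    proactive = round(total * proactive_share)
    return proactive, total - proactive


for category, (total, share) in REPORTED.items():
    proactive, user_reported = split_by_source(total, share)
    print(f"{category}: ~{proactive:,} flagged first, ~{user_reported:,} user-reported")
```

On these rounded numbers, hate speech is the only category of the three where user reports account for most of the removals (roughly 1.55 million of 2.5 million).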

Facebook's technology relies on artificial intelligence (AI). While promising, AI is still years away from being effective for most kinds of violating content, largely because context matters so much.

"For example, artificial intelligence isn’t good enough yet to determine whether someone is pushing hate or describing something that happened to them so they can raise awareness of the issue."

To get better, AI needs a large amount of training data in order to recognize meaningful patterns. What's more, bad actors keep refining their tactics, frequently changing strategies to avoid Facebook's ban hammer.

This is why, as Rosen put it, "we must continuously build and adapt our efforts. It’s why we’re investing heavily in more people and better technology to make Facebook safer for everyone."

"We believe that increased transparency tends to lead to increased accountability and responsibility over time, and publishing this information will push us to improve more quickly too. This is the same data we use to measure our progress internally — and you can now see it to judge our progress for yourselves."