In the whirlwind of the AI boom, where hype often outpaces reality and definitions shift like sand, Nvidia CEO Jensen Huang dropped a statement that sent ripples across the tech world.

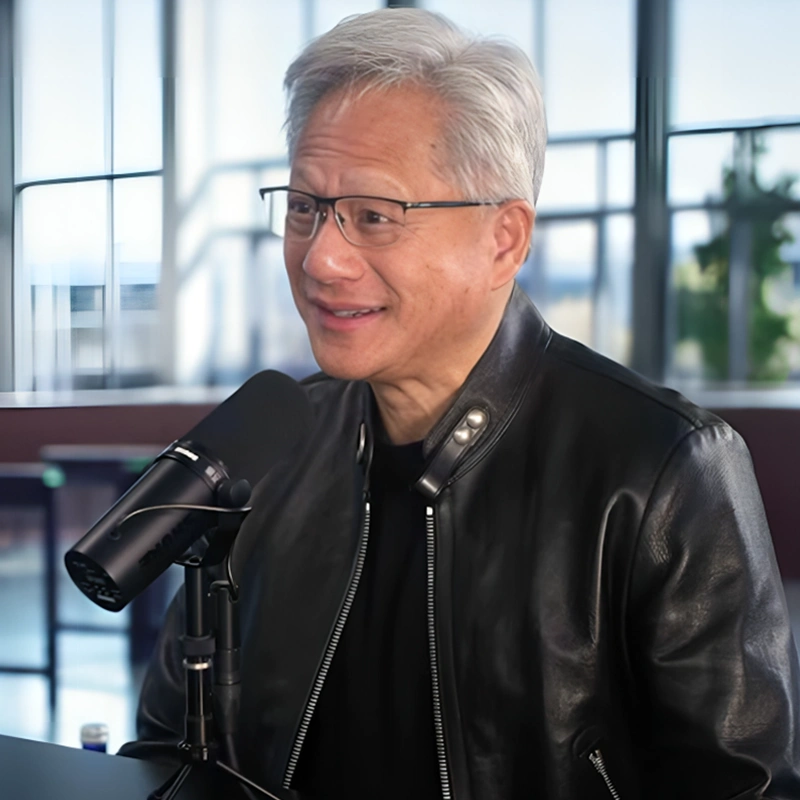

During a wide-ranging conversation on the Lex Fridman Podcast, Huang sat down to discuss everything from scaling laws to the future of computing. The episode, which has already racked up millions of views and sparked endless online debate, turned particularly electric when Fridman posed a provocative, unconventional benchmark for artificial general intelligence.

AGI is often referred to as the holy grail of AI, yet the term has eluded precise definition for decades.

Fridman suggested AGI could be measured by whether an AI system could essentially do his job: start, grow, and run a successful technology company worth more than a billion dollars. It was a capitalist twist on the classic Turing test, tying intelligence not just to cognition but to real-world entrepreneurship and economic value creation.

Huang didn't hesitate.

He replied by framing the current moment as the arrival of this long-awaited threshold. But true to the nuanced nature of these discussions, he quickly layered in caveats that painted a more textured picture.

When Fridman pressed on whether a company could actually be run by such an AI system, Huang said it was "possible," explaining, "You said a billion, and you didn't say forever."

He pointed to fleeting successes already emerging in the ecosystem, like viral apps or social experiments powered by models such as Claude.

Huang drew parallels to the early internet era, noting that many of those dot-com ventures weren't any more sophisticated than what today's AI could whip up on the fly. He even speculated on more whimsical possibilities.

Yet Huang was quick to ground the excitement, emphasizing the gap between momentary virality and sustained, complex enterprise-building.

Referencing OpenClaw, an open-source AI agent platform that's been making waves and even drawing acquisition interest from OpenAI, he acknowledged its rapid rise as "the iPhone of tokens," a breakout tool that lets everyday users automate tasks, code, and even simulate jobs in places like China.

But the podcast wasn't just the usual banter. Huang's statement ignited a fresh wave of headlines because AGI has always been a slippery concept, one that tech leaders, researchers, and philosophers have wrestled with for years without consensus.

Coined independently in the late 1990s by thinkers like Mark Gubrud and Shane Legg (a DeepMind co-founder), AGI traditionally evokes systems that match or surpass human intelligence across a broad spectrum of tasks: learning, reasoning, adapting, and innovating with the flexibility of a human mind. Alan Turing's 1950 Imitation Game, or Turing test, was an early stab at measuring it through indistinguishable conversation, but it has long been critiqued for its narrow focus.

Chatbots like ELIZA passed variants decades ago without true understanding. More rigorous frameworks have emerged lately, such as Google DeepMind's cognitive taxonomy, which breaks intelligence into ten core faculties: perception, reasoning and planning, memory and learning, attention and search, social cognition, motor control, emotion and affect, language and communication, creativity and imagination, and metacognition.

Their approach, inspired by psychology and neuroscience, calls for AI to hit median human performance across the board, complete with a $200,000 Kaggle competition to build better evaluations.

Other benchmarks, like François Chollet's ARC-AGI puzzles testing efficient novel learning or papers scoring models like GPT-5 at around 57% on human-like domains, reveal AI's strengths in math and pattern-matching but persistent weaknesses in social reasoning or long-term adaptation.

Huang's claim, delivered in the context of Nvidia's pivotal role powering the AI revolution with its GPUs, highlights how the term gets weaponized, or at least stretched, for corporate narratives.

OpenAI's charter ties AGI to outperforming humans at economically valuable work, while a Microsoft Research paper once described GPT-4 as showing "sparks" of it. Sam Altman has called AGI a "sloppy term" but insisted his company knows how to build it, later framing similar statements as "spiritual" rather than literal. Microsoft CEO Satya Nadella, by contrast, has pushed back, insisting the industry isn't close and emphasizing structured progress over declarations.

Huang's take, tied to Fridman's billion-dollar litmus test, feels pragmatic for a CEO whose company has ridden the AI wave to unprecedented valuations, yet it also underscores the field's "jagged frontier": AI excels at generating code or apps that mimic internet-era startups but falters at the holistic, persistent intelligence needed for enduring empires.

At its core, Huang's podcast moment reflects the broader tension in AI today.

On one hand, tools like AI agents are democratizing capabilities once reserved for elite engineers, potentially exploding the pool of "coders" from millions to billions as carpenters or creatives direct systems with natural language. On the other, true general intelligence (autonomous, creative, and resilient across unpredictable domains) demands breakthroughs in data (much of it synthetic now), hardware, and evaluation that go far beyond today's models.

Nvidia, under Huang's 33-year stewardship, has navigated near-bankruptcy to dominance by betting on exactly this trajectory, anticipating shifts in scaling laws from pre-training to agentic multiplication.