Artificial Intelligence is the future of technology, and it has become one of the most important and most debated topics of our time.

As computers become smarter and more able to make decisions on their own, we are steadily moving toward a point where we humans may no longer be the most intelligent beings.

Humans keep progressing, and technology is evolving faster as time goes on. This is because the more advanced a society becomes, the more capacity it has to progress faster than less advanced ones. In short, modern humans can accomplish much more in a year than our ancestors could in a decade.

And this isn't science fiction: scientists and engineers keep getting better in their fields, delivering results far beyond anything achieved earlier in our history.

One of our instincts tells us to progress and keep on progressing. We want to know more; we want to achieve what no one has achieved before; we want to do things that weren't possible before. As the most advanced species on Earth, humans keep evolving for the better, not just to survive but also to pursue our dreams.

With that in mind, we are leaping forward to a point where we humans can alter life itself, to the extent that intelligence can exist beyond our own brains. We want to be the smartest, but we know our weaknesses. Hoping to create something that can aid us, we are building artificial brains.

From a mere calculator to a PC, we tend to think that these machines are smart. That is true to some extent. But although they compute far faster than we humans can, they are actually dumb. They only do what they're told: they have no imagination, no feelings, no sense of gratitude, no self-awareness and no competitiveness.

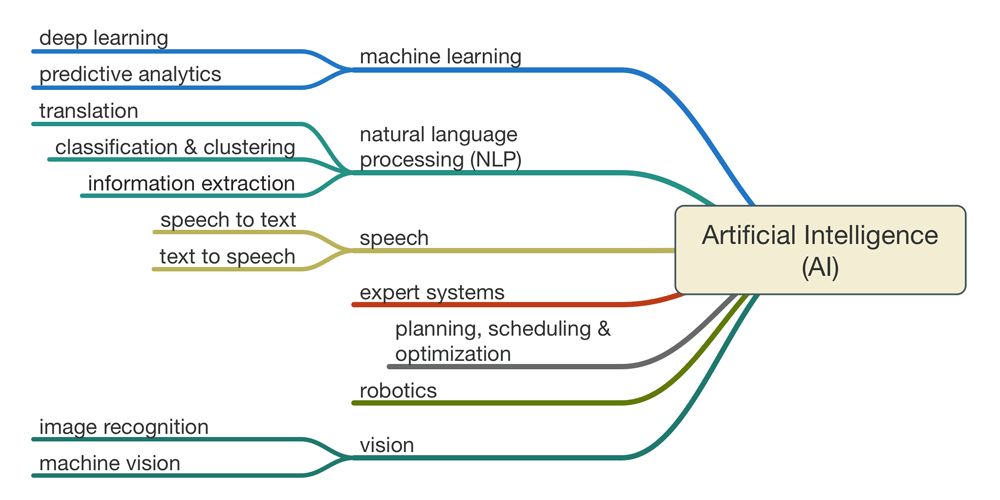

This is where Artificial Intelligence (AI) comes in: we extend these machines so that they can learn.

Artificial Intelligence, The Concept That Confuses People

When we hear the word "AI", we quickly associate it with something out of a science-fiction movie. But the more we dig in, the more we understand that AI covers a much wider topic, ranging from a simple calculator to self-driving cars, and from internet search engines to ideas that could change the world dramatically.

AI, in short, is a confusing term.

Most of us use at least a bit of AI in our daily lives without ever realizing it. Unlike a robot, AI has no physical form. AI is the computer's brain, a brain that doesn't need a body to function. One example is the digital voice assistant on a smartphone: the device is the body, while the AI is what lies beneath it.

Broadly speaking, AI can be categorized into three types, each with different capabilities:

- Artificial Narrow Intelligence (ANI): Often referred to as Weak AI, this is AI that can only do one narrow task. Most AI today is at this stage. One example is Siri: Apple's voice assistant operates within a limited, predefined range, with no genuine intelligence and no self-awareness. Other examples include an AI that can beat humans at chess and another that surpasses humans at Go.

- Artificial General Intelligence (AGI): Also called Strong AI, this refers to a computer with human-level intelligence, capable of performing any intellectual task that a human being can. It is also where the distinction between simulating a mind and actually having a mind comes into play.

- Artificial Superintelligence (ASI): This type of AI would possess intelligence far surpassing that of the brightest and most gifted human minds in practically every field, including scientific creativity, general wisdom and social skills.

The Roads To Create Smarter AIs

The simplest form of AI is Artificial Narrow Intelligence (ANI), and it is already built into many products.

From cars to smartphones, ANI is used to accomplish specific tasks. Email spam filters, for instance, use ANI to fight spam by analyzing which words appear in a message and how they fit together. Search engines also put ANI to good use to show relevant answers to different queries.
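The article doesn't say how spam filters work internally. One classic, simple technique is a naive Bayes classifier, which scores a message by how often each of its words appeared in known spam versus legitimate mail ("ham"). The sketch below is a minimal illustration under loud assumptions: the tiny training set is hypothetical and hand-made, and real filters are trained on far larger corpora and use many more signals.

```python
import math
from collections import Counter

# Hypothetical toy training set (illustrative only, not real email data).
TRAINING = [
    ("win money now", "spam"),
    ("claim your free prize now", "spam"),
    ("meeting moved to monday", "ham"),
    ("lunch on monday with the team", "ham"),
]

def train(examples):
    """Count how often each word appears under each label."""
    word_counts = {"spam": Counter(), "ham": Counter()}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(text.split())
    vocab = {word for counts in word_counts.values() for word in counts}
    return word_counts, label_counts, vocab

def classify(text, word_counts, label_counts, vocab):
    """Pick the label with the highest log-probability (multinomial naive Bayes)."""
    total_messages = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label in label_counts:
        score = math.log(label_counts[label] / total_messages)  # prior P(label)
        n_words = sum(word_counts[label].values())
        for word in text.split():
            # Add-one (Laplace) smoothing so an unseen word doesn't zero the score.
            score += math.log((word_counts[label][word] + 1) / (n_words + len(vocab)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label

model = train(TRAINING)
print(classify("free money", *model))           # → spam
print(classify("team meeting monday", *model))  # → ham
```

Working in log-probabilities avoids multiplying many tiny numbers together, and the smoothing keeps a single never-seen word from ruling out a label entirely.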

Each ANI is good for one purpose only, and it has little capability or understanding beyond that narrow domain.

To create Artificial General Intelligence (AGI), or even Artificial Superintelligence (ASI), AI needs to do a lot more than what it currently does best.

The Challenges

To create a smart AI, we need to make it learn about the diversity of the world, and about how things change or react when something acts on them. We need to figure out how ever-changing circumstances can affect a brain, and how information should be processed using machine learning.

Humans think with their brains, and the brain is arguably the most sophisticated and complex object known to us. To turn ANI into AGI, we need to artificially create a digital mind that works just like the grey matter in our skulls.

Computers can perform computations far faster than we humans can. They calculate incredibly fast and follow logical rules more reliably than we do; in this field, computers surpassed us by miles. But when they are presented with tasks that are easy for us, such as telling a cat from a dog, the artificial mind can get confused.

Computers have difficulty understanding our surroundings. They struggle with movement, vision and perception.

Simply put, AI has succeeded in doing essentially everything that requires 'thinking' but has failed to do most of what people and animals do 'without thinking'.

Hardware And Software

AI can't be separated from its hardware and software. While it doesn't need a physical body to live, it does need hardware to host its software.

On the hardware side, creating a smarter AI means increasing the power of computer hardware. If an AI system is going to be as intelligent as the human brain, it will need far more raw computing power. One way to measure this is the total number of calculations per second the hardware can perform.

The next things to deal with are size and storage. While the human brain is limited by its physical size, computers aren't restricted in the same way. We can keep adding RAM, for example, and we can attach hard drives as long-term memory that can be expanded far beyond what fits inside a skull.

Still on the hardware side, after speed and storage, we need to make sure that the system is stable, reliable and durable.

And whether the designers are humans or AIs, every hardware improvement speeds up the rate of future hardware improvements.

On the software side, we need to artificially "plagiarize" our brain. This reverse engineering tries to figure out how evolution shaped our brains over time, and how we can replicate what nature built with our own technology. One example is the artificial neural network: a web of artificial neurons (simple mathematical units, usually simulated in software rather than built as individual transistors), each connected to others through inputs and outputs.

This artificial brain knows nothing until it learns.

It learns by attempting the same task over and over. For example, when it guesses something right, it receives feedback that strengthens the connections along the pathway that produced the answer. A human brain works in a similar way, but is far more complex. The more we understand how our own brains work, the more we can use that knowledge of neural circuitry to replicate it with our technology.

This is how we can emulate biology, artificially.
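As a rough illustration of learning from feedback, here is a minimal perceptron, one of the earliest artificial neuron models, learning the logical AND function. It is a sketch under simplifying assumptions: the toy data, the learning rate of 1 and the epoch count are chosen purely for illustration. Note that the classic perceptron rule adjusts its connection weights when it guesses wrong rather than rewarding right guesses, but the core idea is the same: repeated feedback reshapes the strength of the connections.

```python
# Hypothetical toy task: learn logical AND from its four labelled examples.
DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

def predict(weights, bias, inputs):
    """The artificial neuron 'fires' (outputs 1) if its weighted input sum is positive."""
    activation = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if activation > 0 else 0

def train_perceptron(data, epochs=20, learning_rate=1):
    """Classic perceptron rule; epochs and learning_rate are illustrative choices."""
    weights, bias = [0, 0], 0
    for _ in range(epochs):  # repeat the same task many times
        for inputs, target in data:
            # Feedback: 0 when the guess is right, +1 or -1 when it is wrong.
            error = target - predict(weights, bias, inputs)
            # Strengthen or weaken each connection in proportion to its input.
            weights = [w + learning_rate * error * x for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias

weights, bias = train_perceptron(DATA)
print([predict(weights, bias, inputs) for inputs, _ in DATA])  # → [0, 0, 0, 1]
```

Integer weights and a learning rate of 1 keep the arithmetic exact; a single perceptron like this can only learn patterns that a straight line can separate, which is one reason real networks stack many such units in layers.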

Still on the software side, the software needs to be easy to edit and upgrade so that it can keep broadening what it is capable of. The next thing to consider is collective capability: how computers can share what they have learned and sync it regularly with one another, so that anything one computer knows can be uploaded to other computers.
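One hypothetical way to picture this kind of sharing: if several machines each learn a set of connection weights, they can periodically merge them by averaging element by element (a simplified form of the averaging step used in federated averaging) and then each continue from the merged result. The function name `share_knowledge` and the numbers below are illustrative assumptions, not a description of any particular system.

```python
from statistics import fmean

def share_knowledge(models):
    """Hypothetical sync step: merge every machine's weights by element-wise
    averaging, then give each machine its own copy of the merged weights."""
    merged = [fmean(column) for column in zip(*models)]
    return [list(merged) for _ in models]

# Three machines, each holding two learned weights (illustrative numbers).
machines = [[1.0, 2.0], [3.0, 4.0], [5.0, 0.0]]
machines = share_knowledge(machines)
print(machines)  # → [[3.0, 2.0], [3.0, 2.0], [3.0, 2.0]]
```

Plain averaging treats every machine equally; a real system might weight each machine by how much data it learned from.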

Singularity

As if achieving AGI weren't difficult enough, ASI goes beyond what AGI can do. Achieving AGI could open up a multitude of possibilities that were once impossible. The first, and the most likely, is an intelligence explosion.

In 1965, I. J. Good speculated that as computers increase in power, it will become possible for people to build a machine that is more intelligent than humanity, one with greater problem-solving and inventive skills than current humans are capable of. His "intelligence explosion" model predicts that such a future superintelligence (ASI) would trigger a singularity.

If ASI is ever invented, by whatever means, it would bring problem-solving and inventive skills beyond anything current humans are capable of, because it would have engineering capabilities that match or surpass those of its human creators.

Because such an AI could design even more capable machines, or rewrite its own software to become even more intelligent, it could keep going, designing future machines of ever greater capability, and so on. One argument based on Moore's Law suggests that if the first doubling of speed took 18 months, the second would take 18 subjective months, which is only 9 external months because the machine is now running twice as fast; after that would come roughly four months, then two months, and so on towards a speed singularity.
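The arithmetic behind this argument is a geometric series: if each doubling takes half the external time of the previous one, the total time for infinitely many doublings is 18 + 9 + 4.5 + 2.25 + ... = 36 months, which is why the argument points to a finite "singularity" date. The sketch below simply assumes that halving pattern (the "four months, two months" above are rounded values of 4.5 and 2.25) and sums the first few intervals.

```python
def doubling_intervals(first_months=18.0, steps=10):
    """External months taken by each successive speed doubling, assuming
    (as the argument does) that each one takes half as long as the last."""
    intervals = []
    months = first_months
    for _ in range(steps):
        intervals.append(months)
        months /= 2  # the machine now runs twice as fast
    return intervals

intervals = doubling_intervals()
print(intervals[:4])   # → [18.0, 9.0, 4.5, 2.25]
print(sum(intervals))  # → 35.96484375, creeping towards (never past) 36
```

However many doublings are added, the total stays below 36 months; the whole argument rests on the halving assumption, which the physical limits discussed next would eventually break.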

This self-improvement would continue until it hit upper limits imposed by the laws of physics or the theory of computation. It is unclear how high those limits are.

In theory, the invention of ASI would abruptly trigger runaway technological growth, resulting in drastic changes to human civilization. According to this hypothesis, as each new, more intelligent generation of AI appears more and more rapidly, an intelligence explosion could occur and result in an ASI. The first use of the term "singularity" in this technological sense is credited to John von Neumann, in a conversation recounted by Stanislaw Ulam in 1958.

Science fiction author Vernor Vinge wrote in his 1993 essay "The Coming Technological Singularity" that this would signal the end of the human era, as the new superintelligence would keep upgrading itself and advance technologically at an incomprehensible rate.

But again, that is still speculation.

Vinge, John von Neumann and Ray Kurzweil defined the concept in terms of the technological creation of superintelligence, and they argued that it is difficult or impossible for present-day humans to predict what our lives would be like in a post-singularity world.

What could happen in the near future is that we humans hit the trip wire that changes everything. AI is still young, still in its infancy. As it grows up, it may change how we see technology and the world itself. And when that moment comes, there had better be a way for us to put it to good use, not the other way around, because by then we will not be dealing with something inferior to us, but with something superior. At that point, we may be standing at a fork in the road between 'Immortality' and 'Extinction'.

Stephen Hawking once said that "Success in creating AI would be the biggest event in human history. Unfortunately, it might also be the last, unless we learn how to avoid the risks."