Once upon a simpler web, searching meant typing a few words and diving into a sea of links. Users clicked, scrolled, skimmed, and somewhere between tabs and patience, their answer lay.

Then came large language models (LLMs). OpenAI's release of ChatGPT was followed by a handful of others, and with them came a new kind of search: one that doesn’t show users where to look, but tells them what to know.

This was the time when the internet quietly shifted.

Suddenly, users could ask an AI anything. And instead of showing pages of results the way search engines do, AI gave users a story: a summary, curated and reworded, polished into something that felt... human.

But that convenience came with questions.

What happens to the open web when the machine becomes the middleman? Are we learning more, or just faster? Or, perhaps worse, are we being lulled into thinking we're becoming smarter, while the machines quietly rewrite what it means to know?

In a preprint study titled "Characterizing Web Search in the Age of Generative AI," researchers from Ruhr University Bochum and the Max Planck Institute for Software Systems tried to map this new landscape.

According to the researchers:

And what they found was revealing.

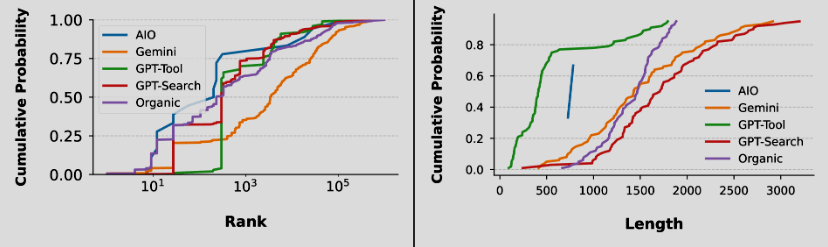

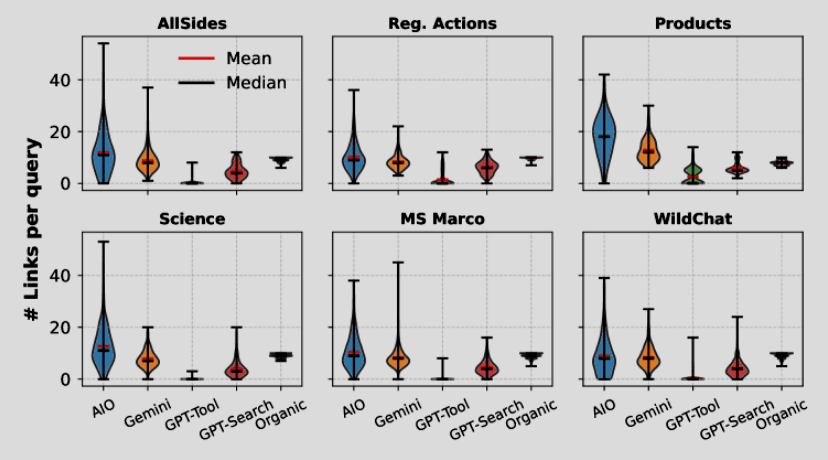

Google’s AI Overviews, for instance, often borrowed from sources far beyond the first page. And in 40% of cases, it reached as far as outside the top 100 of its own search results. Gemini was even bolder, frequently citing domains well outside the top 1,000 most popular sites.

In contrast, GPT-4o leaned on what people might call respectable corners of the web: official company sites, encyclopedias, and institutional references, all while avoiding the noisy pulse of social media. Even with its Search Tool, GPT-4o held back from fetching whatever it could from the web. Rather than pulling new data live, it often relied on what it already knew, preferring to clarify the question before diving into the open web.

The pattern is clear.

Google’s traditional search still behaves like a librarian, carefully ranking relevance. AI, on the other hand, is more like a confident editor.

In other words, AI takes the role of the one who cuts, condenses, and retells the story in its own words.

This is not a surprise, but therein lies the trade-off.

To create those human-like responses, AI needs to compress information, and in doing so it often trims nuance. As a result, the subtle, the secondary, the ambiguous, and the small details that human readers may need are eliminated.

And this changes everything.

The researchers concluded that:

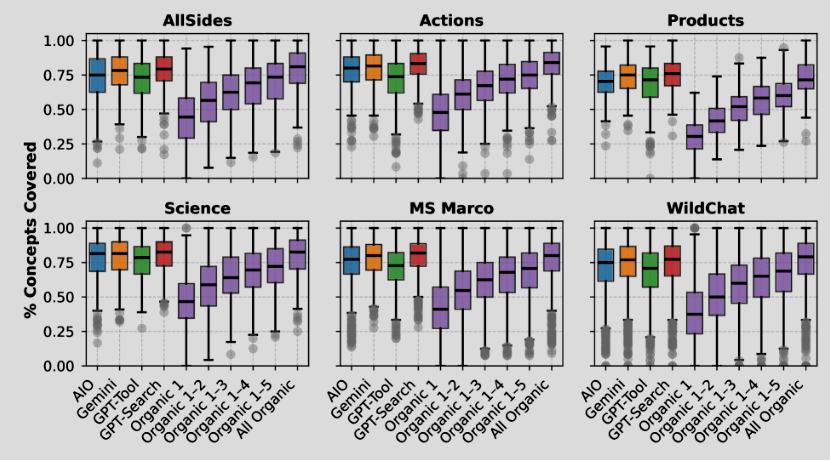

"Different generative search engines rely on their internal knowledge to vastly different degrees. Whereas GPT-Tool consults, on average, 0.4 web pages to answer a query, the same number is 8.6, 8.5, and 4.1 for AIO, Gemini, and GPT-Search. Relying on fewer sources does not lead to significant degradation in topical coverage. A topical analysis of the content using concept induction (Lam et al., 2024) finds that GPT-Tool covers 71% of topics discovered by all search engines combined."

While letting AI browse the web for us feels effortless, it also takes something away: the quiet control, the curiosity that once guided our own searches.

Maybe that’s why, in the end, the researchers didn't name a winner.

They suggested that AI isn’t just a new search engine, but a new way to measure knowledge itself: through the diversity of its sources, the breadth of its understanding, and how well it compresses human information and conveys knowledge.

People no longer "search" the way they used to. There’s no need to think of perfect keywords, no more wrestling with syntax or guesswork. Instead, we simply ask, as we would a person, in our own words, tone, and rhythm.

And the answers we receive no longer come directly from the web, but from something that reads it for us, decides what matters, and delivers what it thinks we want to know.

The web is still infinite. With AI, we’re no longer the ones exploring it.