There was a time when fake news could not travel halfway across the globe before being spotted. Thanks to the internet, catching it in time has become much harder.

The internet enables computers to connect to a vast array of networks, allowing them to exchange data in real time. A single piece of information can spread like wildfire, and before anyone realizes it, the news has been heard all around the world in the blink of an eye.

Fake news is a problem of the modern world. With AI, it can get a lot worse. Machine learning opens up a new level of violation, invading privacy and producing fabrications beyond belief.

AI-powered tools for creating fake videos, imagery and audio have become increasingly capable of fooling humans.

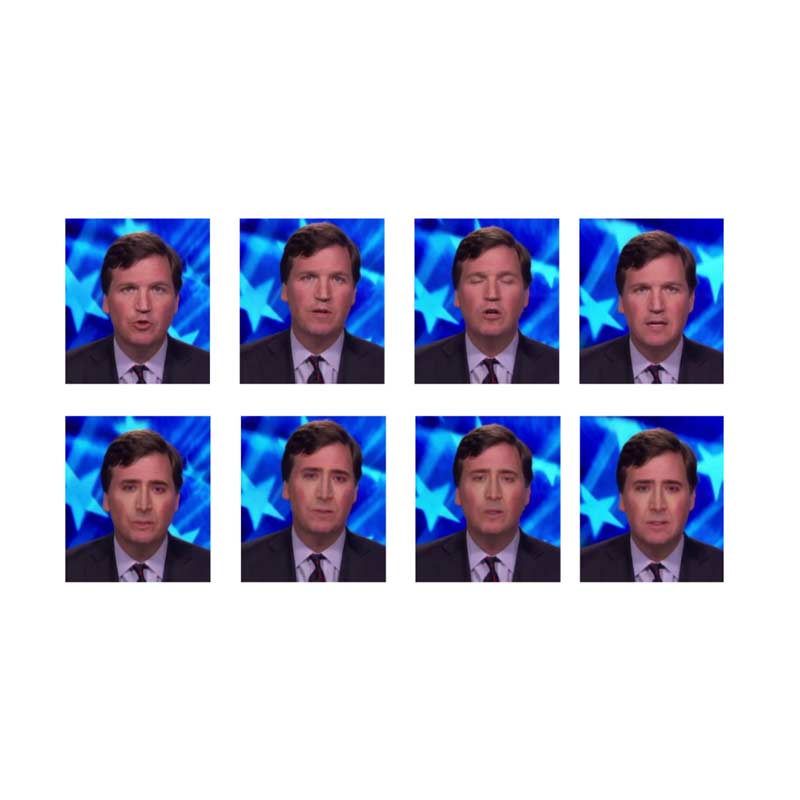

Then there are deepfakes, which swap one person's face onto another person's body. The resulting videos are simple to make but can be surprisingly realistic, making them particularly difficult to spot automatically.

In response, the U.S. Defense Department has created AI-powered forensics tools to spot them.

Forensics experts have scrambled to find ways of detecting videos synthesized and manipulated using machine learning.

People on the web have already generated a number of counterfeit celebrity porn videos, initially starring Gal Gadot. To make matters worse, the same method can be used to create fake videos of politicians saying or doing something outrageous, which could cause real trouble.

What drives technologists at the U.S. Defense Advanced Research Projects Agency (DARPA) to create the tools is the concern that new AI techniques could make AI fakery almost impossible to spot automatically.

Using generative adversarial networks (GANs), video creators can pit two deep neural networks against each other: one generates fakes while the other tries to tell them apart from real samples. The contest yields more and more convincing fakes with stunningly realistic artificial imagery.
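The adversarial idea can be illustrated with a deliberately tiny sketch. It is not how deepfake tools are built: the one-dimensional "data", the linear generator, the logistic discriminator, and every name and parameter below are assumptions chosen only to show the two models taking alternating turns. No claim is made that this toy converges.

```python
import math
import random


def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def train_toy_gan(steps: int = 2000, lr: float = 0.01, seed: int = 0):
    """Alternate discriminator and generator updates on 1-D numbers.

    Assumed toy setup: "real" samples are drawn from N(4, 0.5), the
    generator is G(z) = a*z + b, and the discriminator is
    D(x) = sigmoid(w*x + c), read as "probability that x is real".
    """
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator parameters
    w, c = 0.0, 0.0  # discriminator parameters

    for _ in range(steps):
        x_real = rng.gauss(4.0, 0.5)
        z = rng.gauss(0.0, 1.0)
        x_fake = a * z + b

        # Discriminator turn: minimize -log D(real) - log(1 - D(fake)).
        s_real = sigmoid(w * x_real + c)
        s_fake = sigmoid(w * x_fake + c)
        grad_w = -(1.0 - s_real) * x_real + s_fake * x_fake
        grad_c = -(1.0 - s_real) + s_fake
        w -= lr * grad_w
        c -= lr * grad_c

        # Generator turn: minimize -log D(fake), i.e. try to fool D.
        s_fake = sigmoid(w * x_fake + c)
        grad_x = -(1.0 - s_fake) * w  # d(loss) / d(x_fake)
        a -= lr * grad_x * z
        b -= lr * grad_x

    return (a, b), (w, c)


if __name__ == "__main__":
    generator, discriminator = train_toy_gan()
    print("generator (a, b):", generator)
    print("discriminator (w, c):", discriminator)
```

Real systems replace both linear models with deep networks and the numbers with images or video frames, but the alternating loop has the same shape.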

In the hands of experienced video editors, those fake videos can be made to look even more real.

To fight this type of forgery, DARPA uses a program called Media Forensics. Originally, the program was created to automate existing forensics tools, but with some enhancements it can be used to spot AI-made forgeries.

At first, a team led by professor Siwei Lyu from the University at Albany developed a simple technique.

"We generated about 50 fake videos and tried a bunch of traditional forensics methods. They worked on and off, but not very well," said Lyu.

After studying several deepfakes, Lyu and the team realized that the faces rarely blink. Even when they do blink, the eye movements are not natural. Lyu saw this as a common flaw because those videos are created by AI that learned from still images.

Still images tend to show people with their eyes open.
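The article does not describe how Lyu's team actually measures blinking, so the following is only a minimal sketch of the general idea, using a simple threshold heuristic. It assumes some face tracker (not shown) has already produced a per-frame "eye openness" score; the function names, the `closed_below` cutoff, and the `min_rate` threshold are all hypothetical values chosen for illustration.

```python
from typing import List


def count_blinks(eye_openness: List[float], closed_below: float = 0.2,
                 min_closed_frames: int = 2) -> int:
    """Count runs of consecutive frames in which the eye looks closed.

    A run only counts as a blink if it lasts at least
    `min_closed_frames` frames, which filters out one-frame noise.
    """
    blinks = 0
    run = 0
    for value in eye_openness:
        if value < closed_below:
            run += 1
        else:
            if run >= min_closed_frames:
                blinks += 1
            run = 0
    if run >= min_closed_frames:  # clip ended while the eye was closed
        blinks += 1
    return blinks


def blinks_per_minute(eye_openness: List[float], fps: float) -> float:
    """Convert the blink count of a clip into a per-minute rate."""
    if not eye_openness or fps <= 0:
        raise ValueError("need at least one frame and a positive fps")
    minutes = len(eye_openness) / fps / 60.0
    return count_blinks(eye_openness) / minutes


def looks_suspicious(eye_openness: List[float], fps: float,
                     min_rate: float = 5.0) -> bool:
    """Flag a clip whose blink rate falls below an assumed threshold."""
    return blinks_per_minute(eye_openness, fps) < min_rate


if __name__ == "__main__":
    # Ten seconds at 30 fps with no blink at all (made-up scores).
    no_blink_clip = [0.3] * 300
    print(looks_suspicious(no_blink_clip, fps=30))
```

A check like this is only a weak signal on its own: a short clip of a real person may contain no blink, so a low rate is a reason to look closer rather than proof of forgery.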

With this in mind, the team at DARPA went on to examine other human traits and physiological cues in AI-made fake videos, and found that the videos also tend to show strange head movements, odd eye color and so on.

"We are working on exploiting these types of physiological signals that, for now at least, are difficult for deepfakes to mimic," says Hany Farid, a leading digital forensics expert at Dartmouth College.

However, the team then realized that these physiological signals can also be forged. As Lyu explained, a skilled video editor could make a deepfake show the person blinking simply by adding images of the person blinking to the AI's training data.

According to Matthew Turek of the Media Forensics program, "We've discovered subtle cues in current GAN-manipulated images and videos that allow us to detect the presence of alterations."

Lyu and his team have developed a more effective technique, but want to keep it secret, at least for now.

With the revelation of the forensics tools, DARPA may be signaling the beginning of an AI-powered arms race between fake video creators and the government.

DARPA has also funded a project that brings digital forensics experts together for an AI forgery contest. They compete to generate the most convincing AI-made fake video, imagery and audio, and then to create tools that catch those counterfeits automatically.