Searching online has always been about words. Now, that's no longer the case.

For years, people typed queries into a little box, hoping that keywords would somehow match the vision in their minds. Sometimes it worked. When looking up something indisputable, like the capital of a country, the answer is plain and clear. But at other times, especially when searching for inspiration or style, words aren’t enough.

People might be imagining a bedroom aesthetic that’s "moody but maximalist," or a jacket that feels both bold and understated, and typing a few descriptors rarely captures that exact vibe.

This gap between imagination and results is what Google is trying to close with its latest update to AI Mode in Search.

The company is giving users a way to search more naturally.

The update lets users interact with AI Mode through more than words: images, combinations of visuals and descriptions, and conversational refinements along the way.

So instead of forcing users into the rigid structure of filters and categories shaped by algorithms and the never-ending SEO war, AI Mode now lets them search the way they would talk to a friend.

AI Mode can help turn your ideas into a clear vision, and you can ask follow up questions to help refine your search. Each image result will have a link, so you can click out. And because the experience is multimodal, you can also start your search by uploading an image. pic.twitter.com/ZeazrQGg7j

— Google (@Google) September 30, 2025

The experience starts conversationally.

Users can simply type or speak what they have in mind, like "show me cozy Scandinavian living room setups," or "find barrel jeans that aren’t too baggy."

AI Mode will then respond by generating a range of visual results, each one clickable, so users can explore further. If what users see isn’t quite right, they don’t have to start over with a new query; they just refine. Maybe they want "darker tones," or "more wooden textures," or perhaps "ankle-length denim."

The AI understands the nuance, listens, and updates the visuals accordingly.

In other words, it’s less like typing commands into a machine and more like having a back-and-forth conversation with an assistant who can translate imagination into images.

And it’s not limited to text.

If words fail, users can do the next best thing and upload a picture, like something they’ve snapped on their phone or found elsewhere, and use that as a starting point. Users can even combine text and visuals, creating layered, hybrid queries. For example, they can ask AI Mode for "designs like this photo but with more muted colors" or with "additional storage."

For shopping, this is especially powerful. Instead of digging through endless filters for size, style, or brand, users can let the AI show them options in context, tailored to their description, and then refine until they land on something worth buying.

When you want to shop in this new experience, you can simply describe what you’re looking for — like the way you’d talk to a friend — without having to sort through filters, and you’ll see visual shopping results and links to purchase directly from the retailer. pic.twitter.com/eUYuEw68PZ

— Google (@Google) September 30, 2025

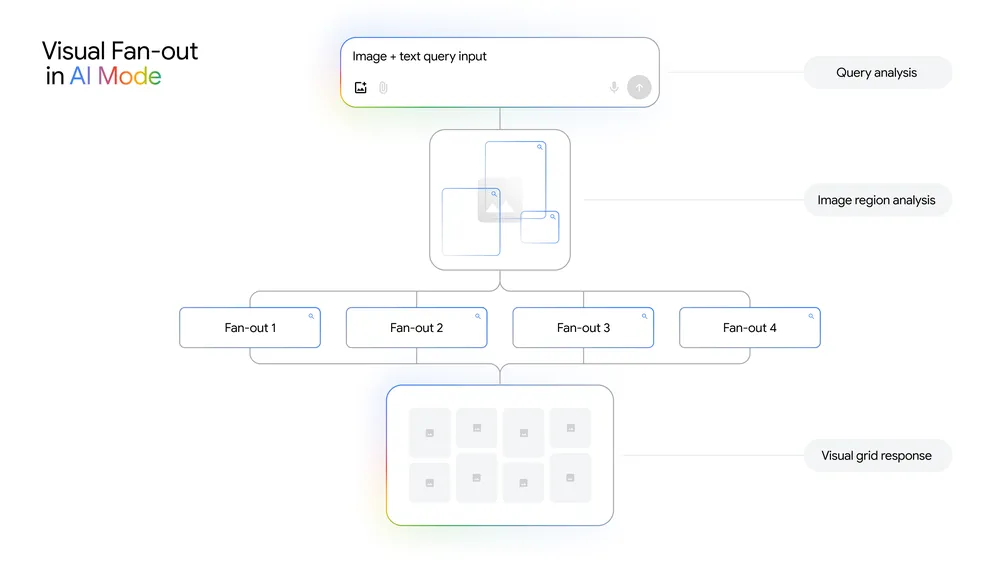

Behind all of this magic is a fusion of Google Lens, Image Search, and Gemini 2.5, Google’s multimodal AI model.

The system uses what the company calls "visual search fan-out," a technique that breaks down not just the main subject of an image but all the subtle details in it (the patterns, the background elements, even secondary objects), and runs multiple queries in parallel to understand the full context. This makes results feel sharper, more aligned with intent, and more dynamic than traditional image searches.
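Google has not published how visual search fan-out is implemented, so the following is only a minimal sketch of the general idea described above: take the details found in an image, turn each into its own query, run those queries in parallel, and merge the results. Every name here (`build_sub_queries`, `run_query`, `fan_out`) is hypothetical, and `run_query` is a stand-in for a real search backend rather than any Google API.

```python
import asyncio


def build_sub_queries(details, refinement=""):
    """Turn each detected image detail into its own text query.

    `details` is assumed to come from some upstream image-analysis step
    (main subject, patterns, background elements, secondary objects).
    """
    return [f"{detail} {refinement}".strip() for detail in details]


async def run_query(query):
    """Hypothetical stand-in for a search backend call."""
    await asyncio.sleep(0)  # yield control, as a real network call would
    return [f"result for '{query}'"]


async def fan_out(details, refinement=""):
    """Run one query per detail concurrently and merge the results."""
    queries = build_sub_queries(details, refinement)
    # gather() runs the queries concurrently and keeps their input order
    batches = await asyncio.gather(*(run_query(q) for q in queries))

    merged, seen = [], set()
    for batch in batches:
        for item in batch:
            if item not in seen:  # drop duplicates across sub-queries
                seen.add(item)
                merged.append(item)
    return merged


if __name__ == "__main__":
    details = ["linen sofa", "oak side table", "striped rug"]
    for result in asyncio.run(fan_out(details, "muted colors")):
        print(result)
```

In this sketch the user's refinement ("muted colors") is simply appended to every sub-query. A production system would presumably rank and blend the merged results rather than just concatenating them.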

It’s also deeply integrated with Google’s Shopping Graph, which contains more than 50 billion product listings that refresh constantly, with more than two billion updates every hour, according to Google.

The update also enhances mobile use.

This rollout reflects a broader shift happening in how people interact with information.

For decades, the internet has been built on keywords and links: the famous “ten blue links” that defined Google Search.

But as generative AI becomes embedded in everyday tools, the way people look for answers is changing. Queries are no longer about fitting into rigid boxes; they’re about expressing intent, however imperfectly, and letting AI do the heavy lifting in translating that into useful results.

For Google, the stakes are high.

OpenAI’s ChatGPT and other competitors have redefined how people seek knowledge, threatening the dominance of traditional search.

By integrating AI into Search and giving AI Mode these enhanced abilities, Google aims to hold on to its relevance. This is Google’s way of evolving beyond its old model.

The update is rolling out in the U.S. in English first, with more regions expected to follow. For now, users there will be the first to experiment with what feels like a fusion of inspiration board, shopping assistant, and search engine all in one. If it succeeds, it could redefine not only how we search but also how we shop, design, and create online.

In the end, Google’s new AI Mode doesn’t just answer questions. It helps people see possibilities.

It bridges the gap between the abstract picture in your mind and the real-world options waiting to be discovered. Searching isn’t just about typing anymore — it’s about imagining, conversing, and refining until your vision takes shape.