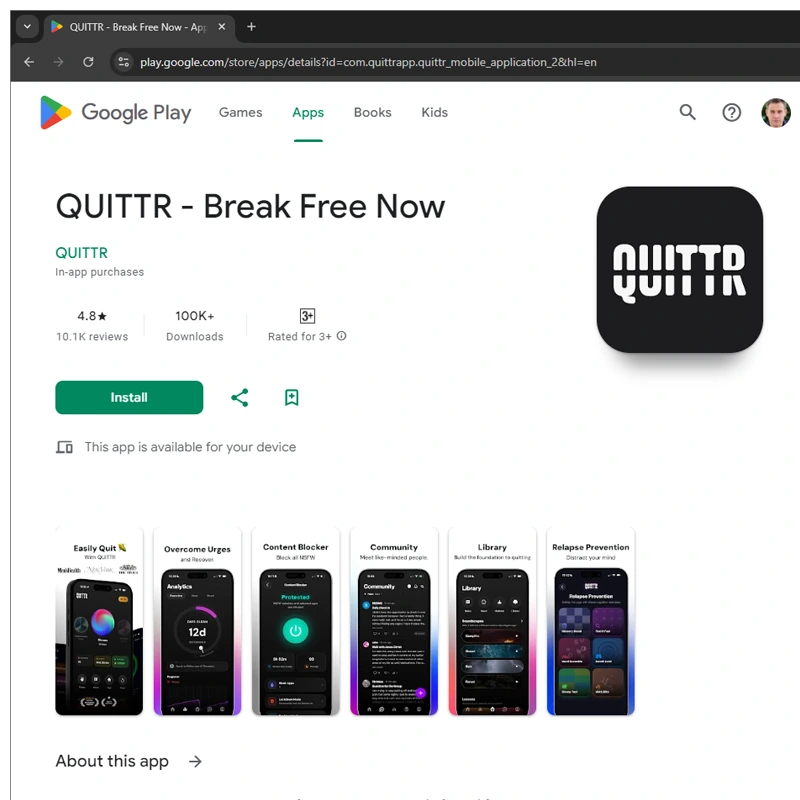

In the booming world of self-improvement and "no-fap" culture, few apps have gained traction as quickly as Quittr.

Marketed as the "#1 Porn Addiction App to Quit Porn Forever," it promises users personalized recovery plans, community support, content blockers, and tools to break free from compulsive pornography use. With over 1.5 million downloads claimed and reports of roughly $500,000 in monthly revenue, the app has become a financial success story for its young founders.

But behind the motivational messaging lies a serious privacy failure.

Quittr exposed highly intimate data belonging to hundreds of thousands of users, including details about their masturbation habits, emotional struggles with porn, and even the types of content they were trying to avoid.

According to investigations, the breach affected more than 600,000 users, with roughly 100,000 (about one in six) identifying as minors.

Quittr was created by a group of entrepreneurs in their early 20s, often referred to as part of the so-called "App Mafia."

Alex Slater, the British co-founder and CEO, started the project after his own struggles with porn and built an early version in just 10 days. He is joined by co-founder Connor McLaren, 23, who handles operations and growth. Other contributors include Alex's brother, Chris Slater, who works on product and design.

The founders have been open about their motivations. Slater has spoken about turning a personal problem into a mission, while McLaren has admitted the business started partly as a way to avoid traditional jobs.

The app charges a subscription (around $30 per year) and uses shame, accountability, and habit-tracking as core mechanisms. This approach resonates with the broader "no-fap" and men's self-improvement movements.

"We started with zero," Slater once recalled, "and I mean zero. I was dead broke. Connor put in his own money, and we turned that $3,000 into $37,000 in our first month. Since then, it’s been a non-stop upward trajectory."

ADDICTED TO PORN?

OPEN THIS THREAD

QUITTR (@QUITTR_app), January 27, 2026

Quittr’s rapid rise didn’t happen by chance.

The app was built through focused innovation, combining insights from behavioral science, proven habit-formation principles, and modern technology to deliver a recovery experience that feels both effective and genuinely engaging.

"With a structured 90-day, science-based recovery program, real-time progress tracking, an encouraging community, and a full suite of tools—including meditations, interactive challenges, and more—you have everything you need to take charge of your habits," the app says.

6 traits of men who stay porn-free:

1. High standards

2. Strong boundaries

3. Consistent routines

4. Clean environments

5. Limited screen time

6. Purpose-driven mindsets

Are you ready to become the best version of yourself?

QUITTR (@QUITTR_app), January 19, 2026

At the heart of Quittr is its standout Panic Button, a powerful real-time tool designed to interrupt urges the moment they strike.

With a single tap, it delivers immediate grounding techniques, motivational messages, and healthy distractions to help users regain control when temptation feels strongest. This feature effectively bridges the gap between knowledge and action, providing instant support exactly when it’s needed most.

But Quittr doesn't stop at quick interventions.

Its thoughtful onboarding process goes much deeper, guiding users through a reflective journey that explores their emotional history, personal triggers, and the stories behind their habits. Instead of offering generic advice like many other apps, Quittr shifts the focus from simply "quitting" to truly understanding why the pattern developed in the first place, creating a more personalized and meaningful path forward.

7 benefits guys notice after quitting porn:

1. Clearer mind

2. More drive

3. Increased confidence

4. Higher standards

5. Better self-control

6. Deeper relationships

7. Stronger discipline

Have you noticed any of these benefits?

QUITTR (@QUITTR_app), December 12, 2025

But one of Quittr's most powerful innovations may be its community-centric recovery model.

With hundreds of thousands of users, the app has grown into one of the largest and fastest-expanding support networks in the digital wellness space.

In an era where pornography of every imaginable kind (the internet's infamous Rule 34) is only a few taps away, it is extremely easy to become addicted.

With apps like Quittr, recovery may no longer feel like a lonely, uphill battle for those users.

Instead, it becomes a shared journey where users encourage one another, celebrate milestones together, and openly normalize conversations about porn addiction and digital habits. This sense of belonging and mutual support sets Quittr apart from traditional recovery approaches.

Then came the issue: a misconfigured Google Firebase database.

Firebase, a common backend service for mobile apps, can leave data accessible to anyone if its security rules aren't properly locked down. An independent security researcher discovered the vulnerability and was able to access user records without much difficulty.

As a result, the database was leaking sensitive information, including:

- Users' ages.

- How often they masturbate.

- How viewing pornography makes them feel (emotional responses).

- Details about their porn consumption habits and recovery progress.

This wasn't a sophisticated hack. Instead, it was a basic configuration error that left sensitive personal data wide open.
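
To make "basic configuration error" concrete: the reporting does not say which Firebase product Quittr used or what its rules actually looked like, so the following is only an illustrative Python sketch. It assumes Realtime Database-style JSON rules, where a rule of `".read": true` lets anyone read that path without signing in. The function name `find_open_rules` and both sample rule sets are hypothetical.

```python
import json


def find_open_rules(rules, path=""):
    """Return (path, operation) pairs whose rule is the literal `true`.

    Assumes Realtime Database-style JSON rules; this is a rough audit
    helper for illustration, not an official Firebase tool.
    """
    findings = []
    for key, value in rules.items():
        if key in (".read", ".write"):
            # `true` (or the string "true") grants access to everyone.
            if value is True or value == "true":
                findings.append((path or "/", key))
        elif isinstance(value, dict):
            # Child nodes can carry their own rules, so recurse into them.
            findings.extend(find_open_rules(value, f"{path}/{key}"))
    return findings


# Hypothetical wide-open rules: anyone can read and write everything.
OPEN_RULES = json.loads('{"rules": {".read": true, ".write": true}}')

# Hypothetical locked-down rules: each user can reach only their own record.
LOCKED_RULES = json.loads("""
{"rules": {"users": {"$uid": {
    ".read": "auth != null && auth.uid === $uid",
    ".write": "auth != null && auth.uid === $uid"
}}}}
""")

print(find_open_rules(OPEN_RULES["rules"]))    # [('/', '.read'), ('/', '.write')]
print(find_open_rules(LOCKED_RULES["rules"]))  # []
```

The gap between the two rule sets is a few lines of configuration, which is why an exposure of this kind is usually described as preventable.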

A number of media companies had contacted the founders directly, informed them of the issue, and even offered help to fix it. However, despite these warnings, the vulnerability reportedly remained unfixed for months. The developers allegedly downplayed or denied the severity of the exposure.

Only after the database was finally secured did 404 Media publish the full story naming Quittr.

This incident highlights a growing problem in the app economy: young developers with limited security expertise are building products that collect deeply personal data, especially around sex, mental health, and addiction, and then failing to protect it properly.

For users, many of whom turned to Quittr in moments of vulnerability or shame, the exposure carries real risks: potential blackmail, embarrassment, or doxxing. The presence of a large number of minors in the user base makes the breach even more concerning from a legal and ethical standpoint.

The story has sparked widespread discussion online, with many pointing out the irony: an app designed to reduce shame around porn habits ended up potentially amplifying it through a preventable data leak.