The rapid spread of generative AI is reshaping industries, education, and everyday life. But the technology is also being adopted in far darker corners of the internet.

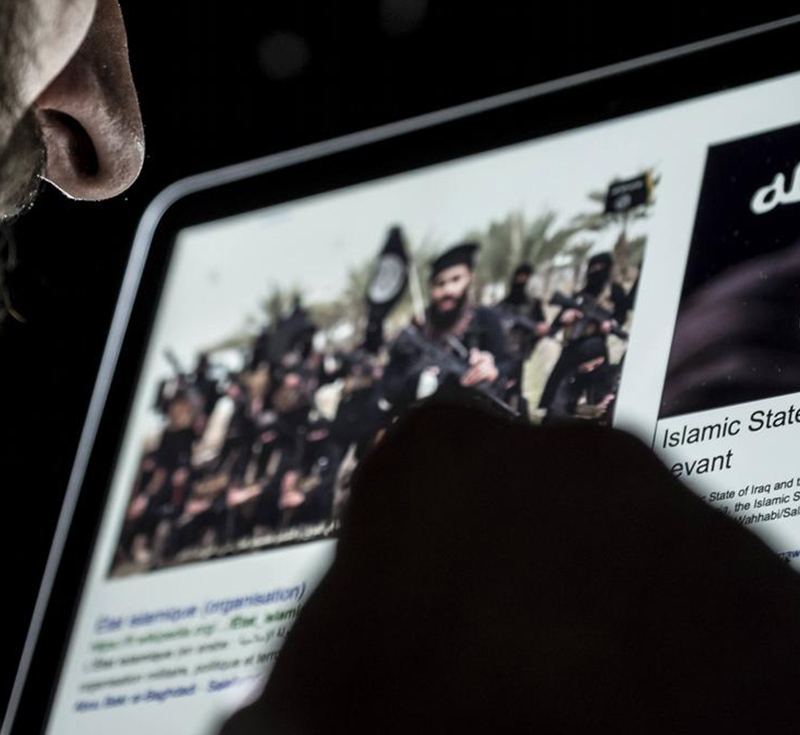

A recent report highlighted an unsettling development: extremists affiliated with ISIS have begun circulating guidance on how recruits should use AI and chatbots. The material appears in Voice of Khorasan, an English-language magazine linked to the group's Afghanistan-based branch, known as ISIS-K or IS-K, and includes instructions on how supporters can become what it calls a "responsible mujahid" when using AI tools.

The idea of "responsible" AI use coming from a militant organization is paradoxical, but the guidance reveals something important about how extremist groups view emerging technology.

Rather than rejecting modern digital tools, IS-K appears to be embracing them pragmatically.

IS-K, also known as ISKP (Islamic State-Khorasan Province), is a transnational jihadist movement loosely affiliated with the Islamic State in Iraq and Syria (ISIS). The group operates primarily in Afghanistan, though its activities have extended beyond the country's borders.

Its formation was inspired by the rapid expansion of ISIS between 2014 and 2015. During that period, disaffected members of the Tehreek-e Taliban Pakistan (TTP), a militant group active in the Afghanistan-Pakistan region, pledged allegiance to ISIS. They adopted the name Islamic State-Khorasan Province, after Khorāsān, a region prominent in early Islamic history.

At its peak in 2016, IS-K reportedly had more than 3,000 fighters and maintained a relatively centralized command structure. The group expanded its operations beyond Afghanistan and neighboring Pakistan, most notably carrying out a series of attacks in Bangladesh.

The group's initial rise was relatively short-lived. In August 2016, its founding leader, Hafiz Saeed Khan, was killed, and several of his successors were killed or arrested in rapid succession. Then, in 2017, the U.S. escalated its campaign against the group by dropping the GBU-43/B Massive Ordnance Air Blast (MOAB), the most powerful conventional bomb in the U.S. arsenal and often referred to as the "Mother of All Bombs," to destroy a complex network of caves and tunnels that IS-K used as a base.

Facing sustained military pressure from U.S. forces, the Afghan government, and the Afghan Taliban, the group gradually weakened.

While Afghanistan's instability allowed IS-K to maintain pockets of activity, by early 2020 the organization had largely lost its ability to control territory.

The group nonetheless remains active, and recent reports suggest it has begun exploring how emerging technologies such as artificial intelligence could play a role in its activities. The magazine reportedly includes sections explaining how chatbots can assist with research, propaganda creation, and messaging, while cautioning supporters about operational security.

One passage reportedly frames the technology in simple terms: "AI is like fire. You can use it to light up the house or to burn it down."

However, rather than emphasizing offensive uses of the technology, the guidance appears to focus largely on defensive measures designed to avoid detection.

For example, recruits are warned not to share personal information with chatbots that could reveal their identities or locations, not to upload sensitive files, and not to ask questions that could expose them to surveillance.

The emphasis reflects the group’s concern that AI services, often operated by Western companies, could log user data or reveal identifying patterns if used carelessly.

In this sense, the guidance resembles operational security manuals that extremist groups have circulated for years regarding encrypted messaging platforms and online anonymity.

At the same time, the instructions suggest a deeper ideological shift.

Earlier extremist propaganda sometimes treated advanced technology with suspicion. IS-K's messaging, by contrast, increasingly frames AI literacy as necessary for modern jihad. In some internal discussions, supporters have even described learning AI as an obligation, comparable to mastering the other digital skills that online propaganda and recruitment campaigns require.

Security analysts say the development fits a long-standing pattern.

Extremist organizations have historically been quick to adopt new communication technologies: from early web forums to social media and encrypted messaging platforms.

When social networks became widespread in the 2010s, ISIS used them to distribute slick propaganda videos, recruit supporters abroad, and coordinate messaging across continents.

AI now represents the next stage in that evolution, enabling groups with limited resources to produce sophisticated media and messaging at scale.

Generative AI tools can produce images, videos, audio, and text with minimal technical expertise, allowing extremist groups to create propaganda far more quickly than before.

Analysts warn that such tools could allow militants to tailor messages to different audiences, translate material into multiple languages instantly, or generate persuasive content designed to influence individuals vulnerable to radicalization.

Even small groups or lone actors could leverage these capabilities without the infrastructure once required for large propaganda operations.

At the same time, experts caution that AI does not fundamentally change the nature of extremist movements; rather, it amplifies existing tactics.

The technology can help produce propaganda, automate messaging, or disguise identities, but the underlying goals (recruitment, ideological dissemination, and psychological impact) remain the same. As one analyst observed, AI acts primarily as a multiplier that accelerates and scales strategies groups already use online.

For counterterrorism agencies, the shift raises difficult questions.

Monitoring extremist activity online has already become complicated by encrypted platforms and decentralized networks.

The addition of AI-generated media, deepfakes, and automated propaganda may further blur the lines between real and fabricated content, making detection and moderation far more challenging. Governments and tech companies are increasingly concerned that the accessibility of generative AI could lower the barrier to entry for extremist influence operations.

The episode highlights a broader truth about emerging technologies: they are rarely adopted by only one side. The same tools being used by companies to boost productivity, by students to write essays, or by researchers to accelerate discovery are also being explored by malicious actors.

AI itself is neutral; how it is used reflects the intentions of those wielding it. As generative systems become more powerful and widespread, the challenge will be ensuring that safeguards evolve as quickly as the technology itself.