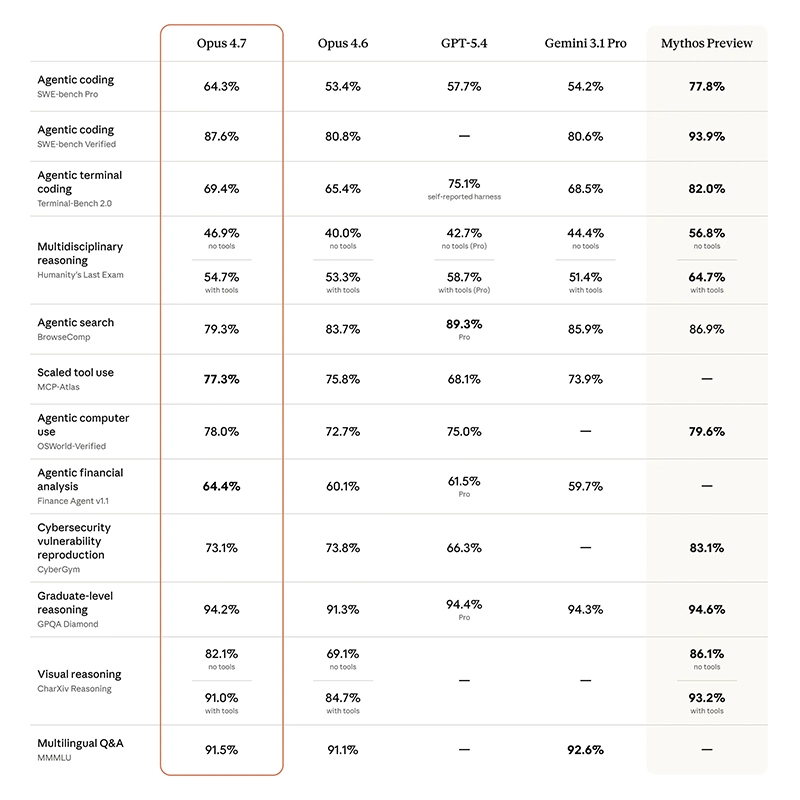

The competition among large language models (LLMs) is intensifying, with the next frontier clearly shifting toward agentic capabilities.

Following the rise of OpenAI's ChatGPT and the wave of competitors that quickly emerged, Anthropic has carved out a distinct position by placing AI safety at the core of its mission. Its research and products emphasize interpretability, controllability, and alignment with human values, rather than focusing solely on raw performance or speed. This sets it apart from many companies that prioritize rapid capability expansion.

Most recently, the company introduced 'Claude Opus 4.7.'

But rather than positioning this new model as a dramatic leap forward, the company has leaned into iterative refinement, focusing on improving reliability, reasoning consistency, and controllability.

Opus 4.7 builds on Opus 4.6 in ways that are less about headline-grabbing breakthroughs and more about smoothing out the kinds of issues that emerge when models are used in production settings.

Introducing Claude Opus 4.7, our most capable Opus model yet.

It handles long-running tasks with more rigor, follows instructions more precisely, and verifies its own outputs before reporting back.

You can hand off your hardest work with less supervision.

— Claude (@claudeai) April 16, 2026

One of the more notable areas of improvement is how the model handles complex, multi-step reasoning.

Tasks that require sustained attention, such as debugging code, analyzing structured data, or following layered instructions, tend to expose weaknesses in earlier systems, particularly around drift, inconsistency, or premature conclusions. Opus 4.7 appears to reduce some of these failure modes, showing better persistence and more coherent step-by-step execution.

This matters less in isolated prompts and more in agentic workflows, where outputs from one step feed directly into the next.
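To make the point about chained steps concrete, here is a minimal Python sketch of a pipeline in which each step consumes the previous step's output and is checked before the result is passed on. It is an illustration only: `run_pipeline`, the toy steps, and the checks are hypothetical stand-ins for model calls, not Anthropic's implementation or API.

```python
from typing import Callable, List, Tuple

# A step transforms the previous output; a check decides whether to pass it on.
Step = Callable[[str], str]
Check = Callable[[str], bool]


def run_pipeline(task: str, steps: List[Tuple[Step, Check]]) -> str:
    """Run steps in order, feeding each step the previous step's output.

    Raises ValueError as soon as a step's output fails its check, so a bad
    intermediate result is not silently carried into later steps.
    """
    current = task
    for index, (step, check) in enumerate(steps):
        result = step(current)
        if not check(result):
            raise ValueError(f"step {index} produced an invalid output: {result!r}")
        current = result
    return current


# Toy steps standing in for model calls (hypothetical, for illustration).
def extract_numbers(text: str) -> str:
    """Keep only the whitespace-separated tokens that are digits."""
    return ",".join(token for token in text.split() if token.isdigit())


def sum_numbers(csv: str) -> str:
    """Add up a comma-separated list of integers."""
    return str(sum(int(value) for value in csv.split(",")))


steps = [
    (extract_numbers, lambda out: out != ""),   # must have found something
    (sum_numbers, lambda out: out.isdigit()),   # must be a plain number
]

print(run_pipeline("add 2 and 40 please", steps))  # prints 42
```

If the first step returned an empty string, the second step would fail or produce a misleading result. Checking each intermediate output is one simple way to stop an early mistake from compounding across a long run.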

Closely related is the model’s behavior under ambiguity.

In practical use, instructions are rarely perfectly specified, and models must infer intent while avoiding overreach. Opus 4.7 is described as being more deliberate in these situations: less prone to guessing when uncertain, and more likely to either ask for clarification or proceed cautiously.

This shift reflects a broader design philosophy that values predictability and transparency over aggressive completion, particularly in professional or high-stakes contexts.

Another area of refinement is consistency across longer sessions.

Earlier generations of models often struggled with maintaining stable behavior over extended interactions, especially when context grew large or tasks became iterative.

Improvements in this area suggest that Opus 4.7 is better suited for sustained workflows, where maintaining coherence over time is as important as producing a strong single response.

This is particularly relevant for use cases like software development, research assistance, and operational automation, where tasks unfold over many steps rather than a single exchange.

The model also continues to expand its multimodal capabilities, with stronger performance in interpreting visual inputs such as screenshots, diagrams, and documents.

While multimodality is now a common feature across leading models, the emphasis here is again on practical usability: accurately extracting relevant information, integrating it into reasoning processes, and maintaining consistency between visual and textual understanding. This aligns with the growing role of AI systems as tools embedded within everyday workflows, where inputs are rarely limited to plain text.

Equally important are improvements that are less visible but highly consequential in deployment.

These include better resistance to repetitive loops, more stable formatting in outputs, and improved recovery from partial errors.

Such characteristics rarely show up in benchmark scores, yet they play a critical role in determining whether a model can be reliably integrated into real systems. In many ways, these refinements signal a maturation phase, where the focus shifts from demonstrating capability to ensuring dependability.

On the API, a new xhigh effort level between high and max gives you finer control over reasoning and latency on hard problems. Task budgets (beta) help Claude prioritize work and manage costs across longer runs.

— Claude (@claudeai) April 16, 2026
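The announcement names three effort levels in order: high, xhigh, and max. As a rough sketch of how a developer might trade reasoning depth against latency, the Python below models that ordering and picks a level from an estimated task difficulty. Only the three level names come from the announcement. The `Effort` enum, the thresholds, the `pick_effort` policy, and the `"effort"` option key are all assumptions made for illustration, not the documented API.

```python
from enum import IntEnum


class Effort(IntEnum):
    """Ordered effort levels; only the names come from the announcement."""
    HIGH = 1
    XHIGH = 2   # described as sitting between high and max
    MAX = 3


def pick_effort(difficulty: float) -> Effort:
    """Hypothetical policy: map a difficulty estimate in [0, 1] to a level.

    The thresholds are arbitrary placeholders, not recommended values.
    """
    if not 0.0 <= difficulty <= 1.0:
        raise ValueError("difficulty must be between 0 and 1")
    if difficulty < 0.5:
        return Effort.HIGH
    if difficulty < 0.85:
        return Effort.XHIGH
    return Effort.MAX


def build_request_options(difficulty: float) -> dict:
    """Build an options dict; the "effort" key is an assumed name."""
    return {"effort": pick_effort(difficulty).name.lower()}


print(build_request_options(0.7))  # prints {'effort': 'xhigh'}
```

A middle level is useful for the same reason this sketch has a middle branch: some problems need more reasoning than the high setting gives, without the latency and cost of the maximum.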

Anthropic’s broader positioning is also reflected in how the model is released and framed.

Opus 4.7 is presented as a production-ready system, intended for wide use across enterprise and developer environments. At the same time, more experimental or higher-risk capabilities appear to be developed under tighter controls and limited previews.

This dual-track approach allows the company to continue exploring the frontier of model capability while maintaining a stable, safer baseline for general deployment.

Safety and alignment remain central to this strategy.

Rather than treating safeguards as an external layer, Anthropic continues to integrate them into the model’s core behavior. This includes mechanisms designed to reduce harmful or high-risk outputs, as well as a general emphasis on making the model’s reasoning more interpretable and controllable.

In practice, this often manifests as more conservative behavior in uncertain scenarios and clearer boundaries around sensitive use cases.

Claude Opus 4.7 is available today on https://t.co/tHPAZRgiuP, the Claude Platform, and all major cloud platforms.

Read more: https://t.co/FXsCSDFSv3

— Claude (@claudeai) April 16, 2026

The release of Claude Opus 4.7 also reflects a broader shift across the AI industry.

As competition intensifies, differentiation is increasingly found not just in what models can do, but in how they behave under real conditions: how reliably they follow instructions, how well they handle edge cases, and how safely they can be deployed at scale.

The notion of "agentic AI" brings these factors into sharper focus, as systems are expected to act over time rather than simply respond in isolated moments.

In that sense, Opus 4.7 is less about redefining the state of the art and more about reinforcing a particular direction. It highlights a view of progress that prioritizes stability, alignment, and usability alongside capability.