Image recognition AIs have become so good at identifying people that the field has arguably advanced faster than many other machine learning technologies.

But no matter how good they have become, AIs still cannot deal well with sensitive human characteristics. Gender, for example, is a subject complex enough to have its own interdisciplinary field of academic study.

And because people are sensitive about the subject, just as they are about race, ethnicity, income, and political or religious belief, Google is no longer identifying people by their gender.

As one of the pioneers of image recognition systems, Google is removing labels such as "man" and "woman" and will instead classify images of people with non-gendered labels such as "person."

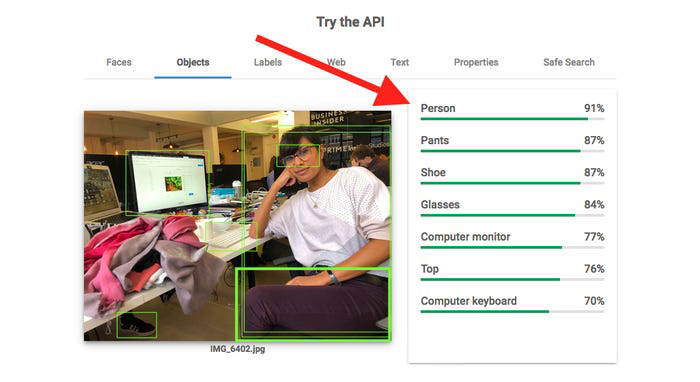

The changes are being introduced to Google Cloud’s Vision API, a Google service that developers can use to, among other things, attach labels to photos identifying their contents. The tool can also detect faces, landmarks, brand logos, and even explicit content, and has a host of uses, from retailers using visual search to researchers.
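To make the effect of the change concrete, here is a minimal sketch of what swapping gendered labels for "Person" could look like on a developer's side. It does not call the Vision API and is not Google's implementation: the `Label` type, the `GENDERED_LABELS` set, the `neutralize_labels` function, and the sample scores are all hypothetical and exist only for illustration.

```python
from typing import List, NamedTuple


class Label(NamedTuple):
    """Hypothetical stand-in for an image label: a name plus a confidence score."""
    description: str
    score: float


# Assumption: only the two labels named in the article are treated as gendered.
GENDERED_LABELS = {"man", "woman"}


def neutralize_labels(labels: List[Label]) -> List[Label]:
    """Replace gendered labels with "Person", keeping the highest score per label."""
    best = {}
    for label in labels:
        if label.description.lower() in GENDERED_LABELS:
            name = "Person"
        else:
            name = label.description
        # If "Person" (or any label) appears more than once, keep the best score.
        if name not in best or label.score > best[name]:
            best[name] = label.score
    # Return labels sorted from most to least confident.
    return sorted(
        (Label(name, score) for name, score in best.items()),
        key=lambda item: item.score,
        reverse=True,
    )


if __name__ == "__main__":
    sample = [Label("Man", 0.92), Label("Suit", 0.88), Label("Person", 0.95)]
    for label in neutralize_labels(sample):
        print(f"{label.description}: {label.score:.2f}")
```

In this sketch, a photo previously tagged "Man" would simply end up with a single "Person" label alongside its other, non-gendered labels.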

Related: Fearing AI Bias, Google Blocks Gender-Based Pronouns From Gmail's Smart Compose

According to Google in an email to developers:

On its AI Principles page, Google said that:

The term 'gender' has long been used to describe the range of characteristics that differentiate masculinity from femininity. In modern usage, depending on the context, those characteristics may include biological sex, sex-based social structures, or gender identity.

This is one of the reasons why sexism exists, among both men and women, which in turn gave rise to the concept of 'gender sensitivity'.

The term was developed as a way to reduce the barriers to personal and economic development created by sexism. Gender sensitivity helps generate respect for the individual regardless of their sex.

In an era where AIs have taken over many automation tasks, Google has been one of the pioneers, leveraging a vast amount of machine learning technology to help it do its work.

And AI bias is already a widely discussed topic.

For example, many facial recognition systems misidentify people of color more frequently than white people. And many AIs treat men as superior to women. Making things worse, AIs tend to associate the kitchen and cooking with women, even when a man is doing the same activity, and they can misgender trans and non-binary people.

Image recognition systems are particularly prone to this, and Google itself has noted that its own AI can also produce this kind of bias.

But since not everyone will agree with Google’s decision to remove gendered labels from images, even though the move should at least remove one area of AI bias, the company is inviting affected developers to comment on its discussion forums.