The development of large language models is accelerating, and the models keep getting more capable and more useful.

After OpenAI’s release of ChatGPT in late 2022 shook the technology industry, the entire AI landscape shifted almost overnight. ChatGPT’s meteoric rise demonstrated just how powerful conversational AI could be, prompting tech giants, especially Google, to accelerate their own efforts to compete.

At the time, Google had been working quietly on advanced AI research under DeepMind and Google Brain, but the public debut of ChatGPT forced it to move faster and more boldly.

This urgency led to the launch of Bard in early 2023, which, while functional, had a rocky debut. In December 2023, Google introduced Gemini, a new generation of large language models designed not just to match ChatGPT’s abilities but to surpass them by leveraging Google’s deep knowledge graph, search dominance, and integration with its vast suite of services. In February 2024, Bard itself was rebranded as Gemini.

Then came Gemini Live in May 2025, which represented a significant leap in how people could interact with AI. Instead of just typing or speaking to a chatbot in a static window, Gemini Live allowed users to have real-time, voice-driven conversations with the assistant while sharing their device’s screen or using the camera to provide visual context.

This meant that users looking at a web page, a document, a schedule, or even a real-world object could ask Gemini about it instantly. The assistant could interpret what it “saw” and respond accordingly, blurring the line between traditional search, productivity tools, and live AI assistance. Early on, the feature’s capabilities were promising but limited, mainly revolving around answering questions about visible content or performing simple tasks.

But Google made it clear that deeper integration with its ecosystem was on the roadmap.

That vision is now becoming reality.

Gemini Live now connects with your favorite apps from @Google - just share your camera or screen to get instant help, anytime.

— Google Gemini App (@GeminiApp) August 11, 2025

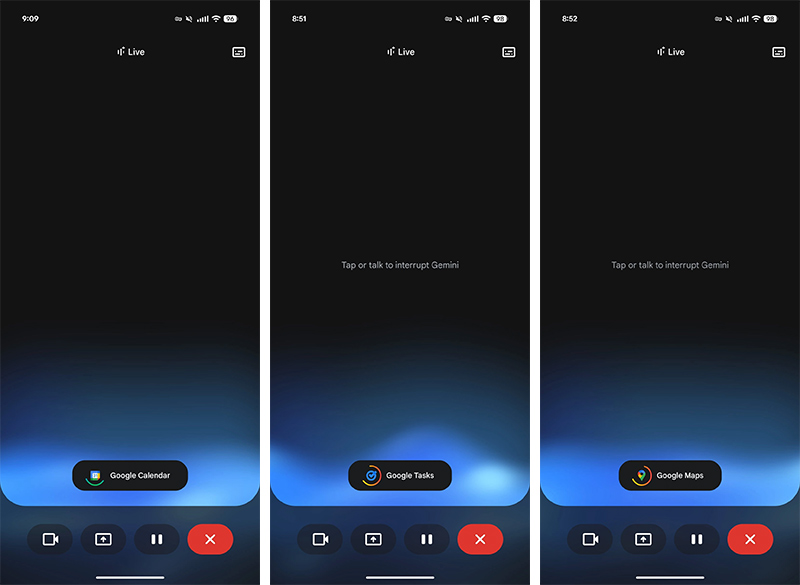

In one of the largest expansions of Gemini Live’s capabilities to date, Google has begun rolling out real-time integration with four of its most widely used apps: Calendar, Keep, Maps, and Tasks.

Available on both Android and iOS, this update allows Gemini Live to not only retrieve information from these apps but also create and modify entries without leaving the conversation.

For instance, users can simply ask Gemini Live things like, “What’s on my schedule today?” and have it instantly pull the details from Google Calendar, displaying them in the chat with an option to open the full event if they wish. If users point their phone’s camera at a flyer, an invitation, or even a sports schedule, they can instruct the assistant to add those events to the calendar, and the AI will parse details like date, time, and location.

In one test scenario, Gemini Live was even able to recognize which football games were home matches and add them accordingly, complete with opponent names and start times.

The integration with Maps brings another layer of convenience.

Users can say, “Guide me to my next appointment,” and Gemini Live will check their calendar, find the relevant event, determine the location, and then generate a link to Google Maps for navigation.

While it doesn’t automatically launch Maps in full navigation mode yet, it streamlines the process into a single tap.
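The flow described above can be sketched roughly as follows. This is only an illustration under stated assumptions, not how Gemini Live is implemented: the `Event` class, the sample data, and both helper functions are hypothetical, and skipping events that have no location is a simplification made for this example. The only real-world piece is the documented Google Maps URLs format for directions links (`https://www.google.com/maps/dir/?api=1&destination=...`).

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional
from urllib.parse import urlencode


@dataclass
class Event:
    """Hypothetical stand-in for a calendar entry."""
    title: str
    start: datetime
    location: Optional[str] = None


def next_event_with_location(events: List[Event], now: datetime) -> Optional[Event]:
    """Return the earliest upcoming event that has a location, or None."""
    # Assumption: events without a location cannot be navigated to, so skip them.
    upcoming = [e for e in events if e.start >= now and e.location]
    return min(upcoming, key=lambda e: e.start, default=None)


def maps_directions_link(destination: str) -> str:
    """Build a directions link using the public Google Maps URLs format."""
    query = urlencode({"api": 1, "destination": destination})
    return "https://www.google.com/maps/dir/?" + query


if __name__ == "__main__":
    # Made-up sample data for illustration only.
    calendar = [
        Event("Stand-up", datetime(2025, 8, 11, 10, 0)),
        Event("Dentist", datetime(2025, 8, 11, 14, 0), "123 Main St, Springfield"),
    ]
    event = next_event_with_location(calendar, now=datetime(2025, 8, 11, 9, 0))
    if event is not None:
        print(event.title, maps_directions_link(event.location))
```

Returning a link rather than starting navigation mirrors the behavior the article describes: the user still taps once to open Maps.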

Similarly, the Tasks integration allows users to quickly check their to-do lists, add new items, or mark tasks as complete.

As for the Keep integration, it lets users dictate or type notes directly into Keep without manually opening the app.

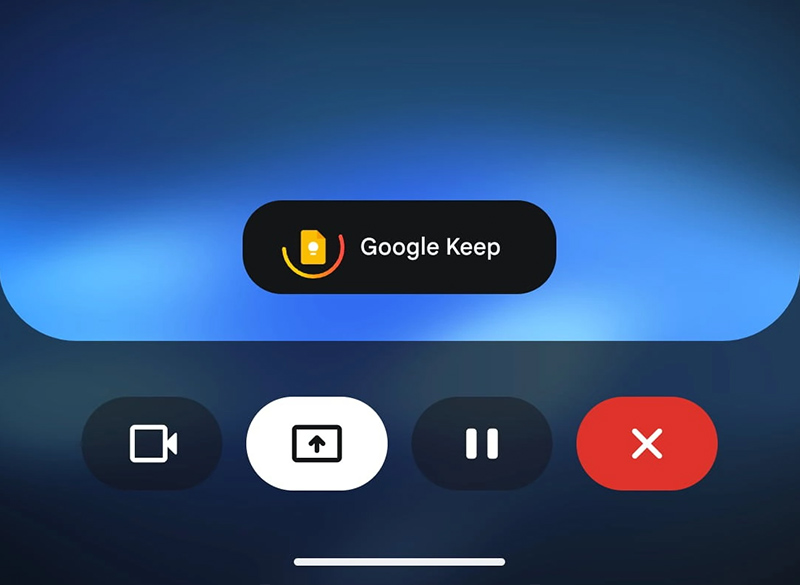

One of the most impressive aspects of this update is how fluid the interactions feel.

During a Gemini Live session, users can interrupt the AI mid-task, change the query, or jump to a completely unrelated request without breaking the session. A small notification appears when Gemini Live is connected to a specific app, along with a rotating indicator to show it’s retrieving or updating information. Once the action is completed, the assistant provides a preview.

Whether it’s a newly created calendar event, a route suggestion, or a to-do list update, users can tap the preview to open it in the corresponding app for further edits.

On Samsung Galaxy devices, the feature goes even further, extending integration to Samsung Notes and Reminders in addition to Google’s own apps.

This tightens the synergy between Gemini Live and the productivity tools built directly into certain Android devices. Although Google has yet to enable rumored features like “context cards” or extend Gemini Live’s reach to third-party apps, the company’s approach is clearly moving toward making the assistant a central hub for both personal and work-related tasks.

All of this builds on earlier improvements to Gemini Live, such as real-time captions for its spoken responses.

Combined with its screen-sharing and camera-based context awareness, Gemini Live is steadily evolving into more than just an AI chatbot. It’s becoming a persistent, context-aware companion that can act on users' behalf across the apps they already use.

With this latest update, Google is not only closing the gap with competitors like OpenAI’s ChatGPT voice mode and Apple’s upcoming AI features but also playing to its biggest strength: deep, native integration with the Google ecosystem that so many users already rely on.

If the company continues on this trajectory, Google may eventually make Gemini Live the first truly hands-free, all-in-one mobile assistant, one that feels like part of the phone’s core functionality rather than just another app.

In the rapidly evolving AI assistant race, this move makes it clear that Google intends for Gemini Live to be at the center of how users interact with their devices in real time.