Dealing with the spread of child sexual abuse material (CSAM) on the web has been a priority for many big internet companies.

Most automated solutions to this problem involve checking images and videos against a catalog of previously identified abusive material. This method uses crawlers to find that content and stop people from sharing known CSAM again.

The problem with this method is that the system can’t catch material that hasn’t already been marked as illegal. For that material, human moderators have to step in and review it first.
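The catalog check and its blind spot can be illustrated with a minimal sketch. It assumes the catalog is a set of exact SHA-256 digests, which is a simplification chosen for illustration; the article does not say how the catalog is stored, and an exact digest no longer matches once a file is resized or re-encoded. The function names and placeholder byte strings are hypothetical.

```python
import hashlib


def digest(data: bytes) -> str:
    """Return the SHA-256 hex digest of a file's bytes."""
    return hashlib.sha256(data).hexdigest()


def triage(uploads: dict[str, bytes], known_digests: set[str]) -> tuple[list[str], list[str]]:
    """Split uploads into catalog matches and items the catalog cannot judge."""
    matched, unmatched = [], []
    for name, data in uploads.items():
        if digest(data) in known_digests:
            matched.append(name)    # already identified: can be blocked automatically
        else:
            unmatched.append(name)  # never seen before: the catalog says nothing about it
    return matched, unmatched


# Placeholder byte strings stand in for real files (assumption for the demo).
catalog = {digest(b"previously-identified-file")}
uploads = {
    "a.bin": b"previously-identified-file",
    "b.bin": b"never-seen-before-file",
}
matched, unmatched = triage(uploads, catalog)
print(matched)    # ['a.bin']
print(unmatched)  # ['b.bin']
```

Everything in `unmatched` is where the catalog approach stops helping: those items are neither cleared nor flagged, so any that get reported still need a human to look at them.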

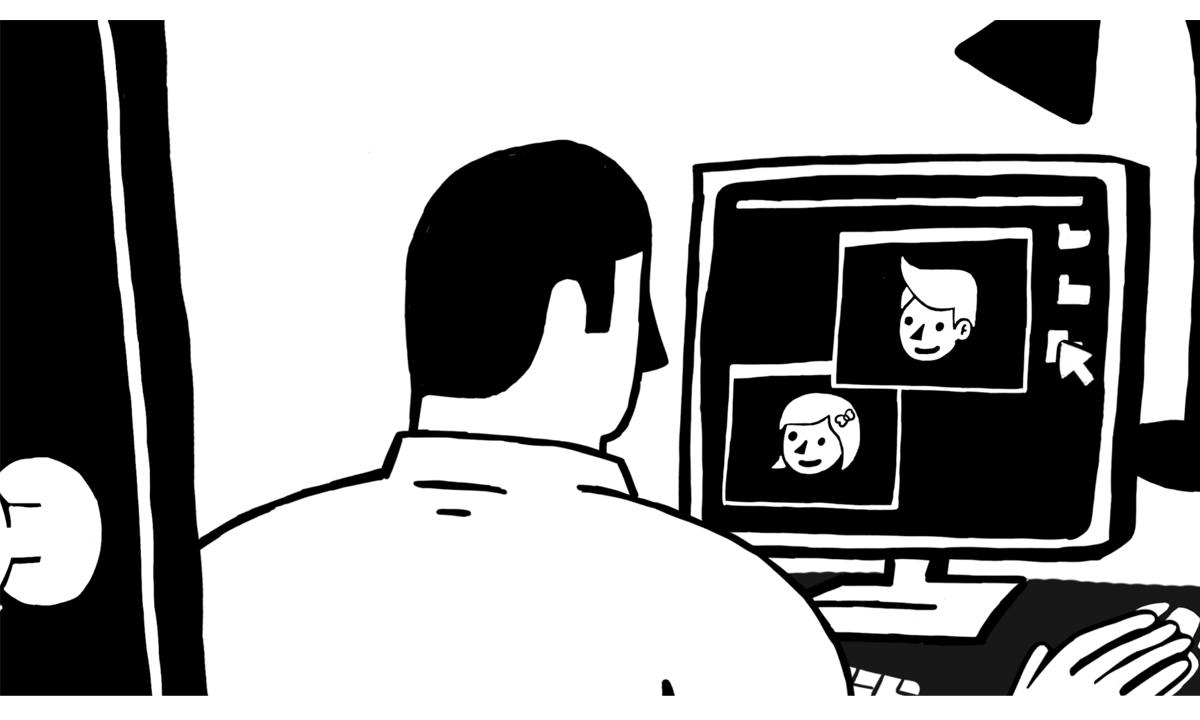

Manually filtering this content is difficult and daunting work. It can be a harrowing experience that takes a serious psychological toll on human moderators.

As part of its Content Safety API, Google is automating part of the process with image recognition AI that sifts through images to help those on the frontline with the job. This can reduce the number of people who have to be exposed to such explicit material.

Google’s tool still requires human review for confirmation. But drawing on the company's expertise and experience in machine vision, the AI presents the reviewer with only the material most likely to be abusive, rather than requiring him or her to go through each item manually.

This means the AI can assist moderators by sorting flagged images and videos and prioritizing "the most likely CSAM content for review."
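The prioritization step can be sketched as a simple sort, assuming the classifier returns a score between 0 and 1 for each flagged item. The `FlaggedItem` type, the `score` field, and the example values are hypothetical and are not taken from Google's Content Safety API.

```python
from dataclasses import dataclass


@dataclass
class FlaggedItem:
    item_id: str
    score: float  # hypothetical classifier score in [0, 1]; higher means more likely abusive


def review_queue(items: list[FlaggedItem]) -> list[FlaggedItem]:
    """Order flagged items so the highest-scoring ones reach a reviewer first."""
    return sorted(items, key=lambda item: item.score, reverse=True)


flagged = [
    FlaggedItem("item-1", 0.12),
    FlaggedItem("item-2", 0.97),
    FlaggedItem("item-3", 0.55),
]
queue = review_queue(flagged)
print([item.item_id for item in queue])  # ['item-2', 'item-3', 'item-1']
```

Nothing is removed from the queue in this sketch. Every item still gets a human decision, and only the order changes, which is consistent with the tool requiring human review for confirmation.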

Google said that in one trial, the AI helped moderators "take action on 700 percent more CSAM content over the same time period."

According to Fred Langford, deputy CEO of the Internet Watch Foundation (IWF), the software would "help teams like our own deploy our limited resources much more effectively."

Based in England, the IWF is one of the largest organizations dedicated to stopping the spread of CSAM on the internet, funded by contributions from big international tech companies, including Google. It has a team of human moderators to identify abuse imagery, and operates in more than a dozen countries, allowing internet users to report suspect material.

The IWF also has its own investigative operations, which identify websites where CSAM is shared and work with the authorities to shut them down.

The IWF found nearly 80,000 sites hosting CSAM in 2017, and the organization is taking the matter very seriously.

The IWF is testing Google's image recognition AI thoroughly to see whether it performs well and fits the moderators' workflow. Langford added that tools like this are a step towards fully automated systems that can identify previously unseen material without any human interaction.

"That sort of classifier is a bit like the Holy Grail in our arena."

But there is one problem with automating this kind of filtering. Langford said that such tools should only be trusted with "clear cut" cases to avoid letting abusive material slip through the net.

If things go as expected, Google wants to make the tool available for free to help companies and organizations that monitor child sexual abuse material. This should make an emotionally taxing job more efficient.