In the fast-evolving world of PC gaming and graphics technology, Nvidia is pushing the boundaries of what's possible with AI.

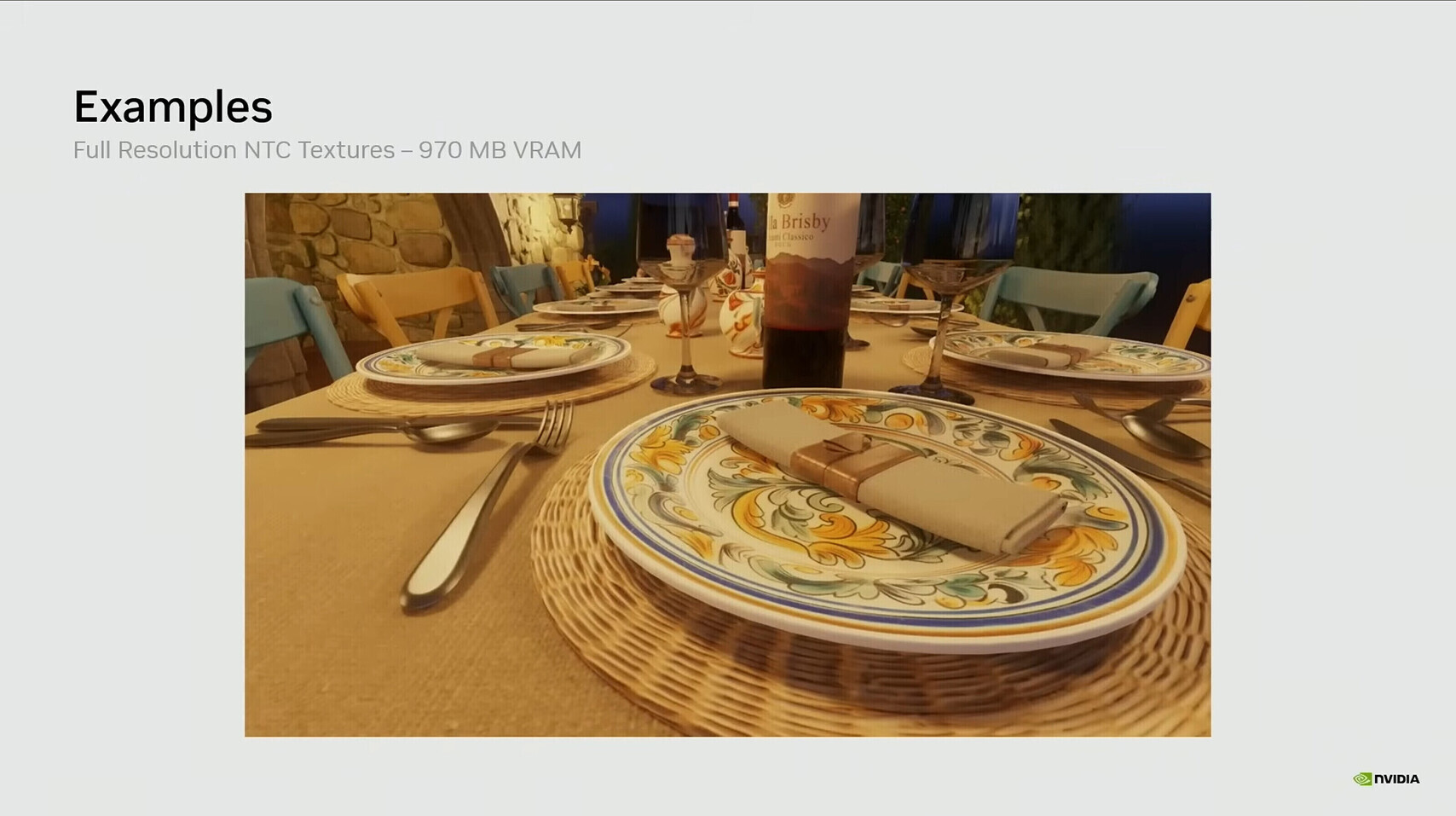

During a GTC 2026 session focused on neural rendering, the company unveiled a practical demonstration of 'Neural Texture Compression,' or NTC, that left developers and enthusiasts buzzing. In one striking Tuscan Villa scene (complete with intricate exteriors, detailed interiors, and ornate tableware), traditional block-compressed textures in standard BCn formats devoured a hefty 6.5 GB of VRAM.

But switching to NTC slashed that requirement down to just 970 MB, an 85% reduction, while delivering visuals that were indistinguishable from the original in side-by-side comparisons.
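The 85% figure is easy to sanity-check from the two numbers quoted for the demo. The short Python sketch below does the arithmetic; the helper names are made up for illustration, and it assumes "6.5 GB" means 6.5 x 1024 MB (using 6,500 MB instead still lands at roughly 85%).

```python
def reduction_percent(before_mb: float, after_mb: float) -> float:
    """Percentage of memory saved when going from before_mb to after_mb."""
    return (1.0 - after_mb / before_mb) * 100.0


def compression_ratio(before_mb: float, after_mb: float) -> float:
    """How many times smaller the new footprint is."""
    return before_mb / after_mb


# Figures quoted for the Tuscan Villa demo.
BCN_MB = 6.5 * 1024  # assumption: binary units (1 GB = 1024 MB)
NTC_MB = 970.0

saving = reduction_percent(BCN_MB, NTC_MB)
ratio = compression_ratio(BCN_MB, NTC_MB)
print(f"{saving:.1f}% saved, {ratio:.1f}x smaller")
```

In ratio terms that works out to a little under 7x for this particular scene.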

The textures looked crisp, the materials retained their full fidelity, and there was no perceptible quality loss.

Even more impressively, when held to the same tight 970 MB memory budget as downscaled BCn textures, NTC actually preserved more fine detail, outperforming the conventional approach in both clarity and realism.

This isn't some pie-in-the-sky research demo. Instead, it's a direct response to one of the biggest headaches in modern game development and hardware demands: the insatiable appetite for VRAM.

As computers become more powerful and graphics cards become increasingly capable, games are able to lean towards photorealism with ever-higher resolution textures, complex material maps, and massive open worlds.

The result is inevitable: texture data has ballooned to consume the lion's share of GPU memory, often 70 to 90% in demanding titles.

Traditional block compression formats like BC7 have served well for years, packing 4x4 pixel blocks into compact representations that GPUs can decompress on the fly.
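For a sense of scale, BC7 stores every 4x4 block in 16 bytes, which works out to one byte per texel. The Python sketch below estimates what a single BC7 texture costs with and without its mipmap chain. The function names are illustrative, the 4096x4096 size is just an example, and real drivers may add padding or alignment that this ignores.

```python
BLOCK_DIM = 4             # BCn formats encode 4x4 texel blocks
BC7_BYTES_PER_BLOCK = 16  # BC7 uses 128 bits per block


def bc_level_bytes(width: int, height: int,
                   bytes_per_block: int = BC7_BYTES_PER_BLOCK) -> int:
    """Size of one mip level; partial blocks are rounded up to whole blocks."""
    blocks_x = (width + BLOCK_DIM - 1) // BLOCK_DIM
    blocks_y = (height + BLOCK_DIM - 1) // BLOCK_DIM
    return blocks_x * blocks_y * bytes_per_block


def bc_mip_chain_bytes(width: int, height: int,
                       bytes_per_block: int = BC7_BYTES_PER_BLOCK) -> int:
    """Size of the full mip chain, halving each level down to 1x1."""
    total = 0
    while True:
        total += bc_level_bytes(width, height, bytes_per_block)
        if width == 1 and height == 1:
            break
        width = max(1, width // 2)
        height = max(1, height // 2)
    return total


top_level = bc_level_bytes(4096, 4096)
full_chain = bc_mip_chain_bytes(4096, 4096)
print(f"top level: {top_level / 2**20:.1f} MiB")
print(f"with mips: {full_chain / 2**20:.1f} MiB")
```

A single 4096x4096 BC7 texture is 16 MiB before mips and roughly a third more with them, and a material typically needs several such textures, which is how scenes end up in multi-gigabyte territory.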

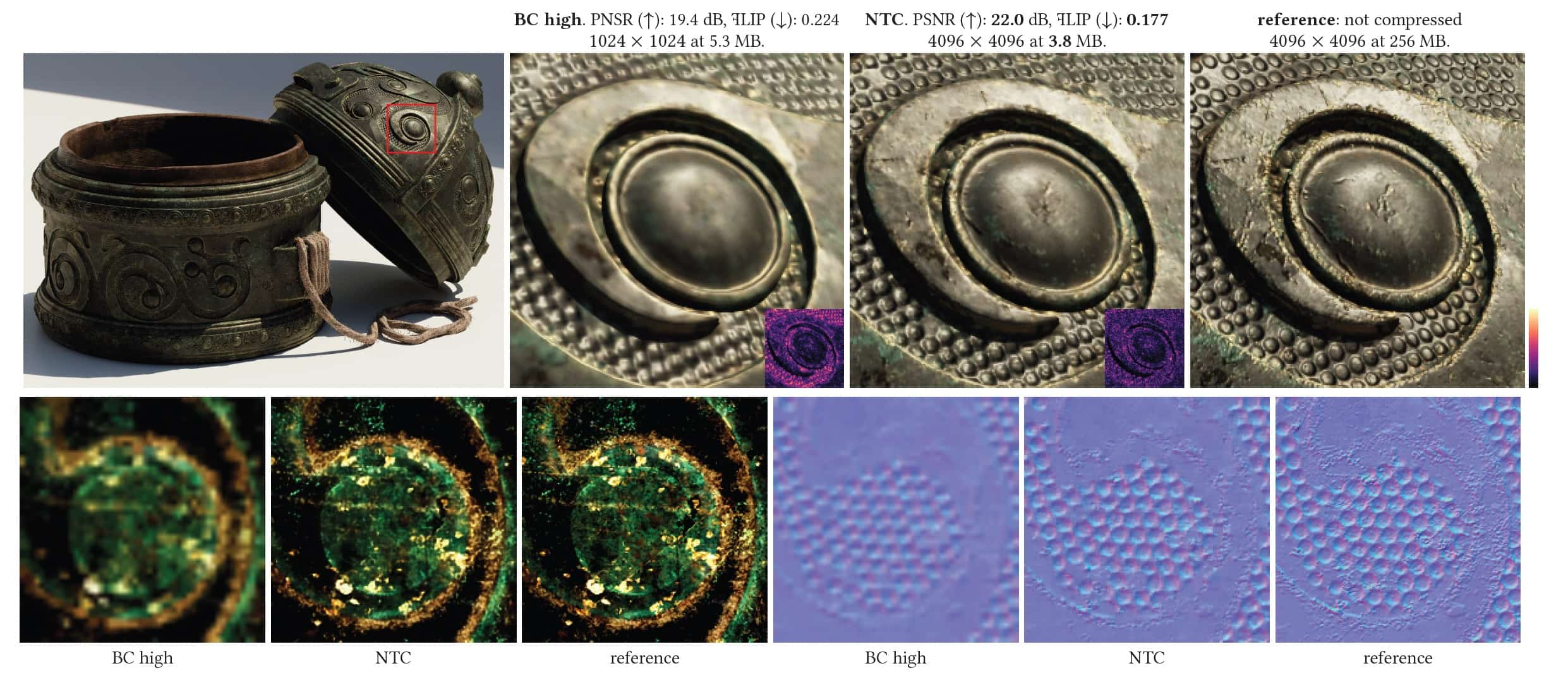

But they're hitting diminishing returns, especially as artists push for textures at 4K and beyond that still suffer from compression artifacts when squeezed too hard.

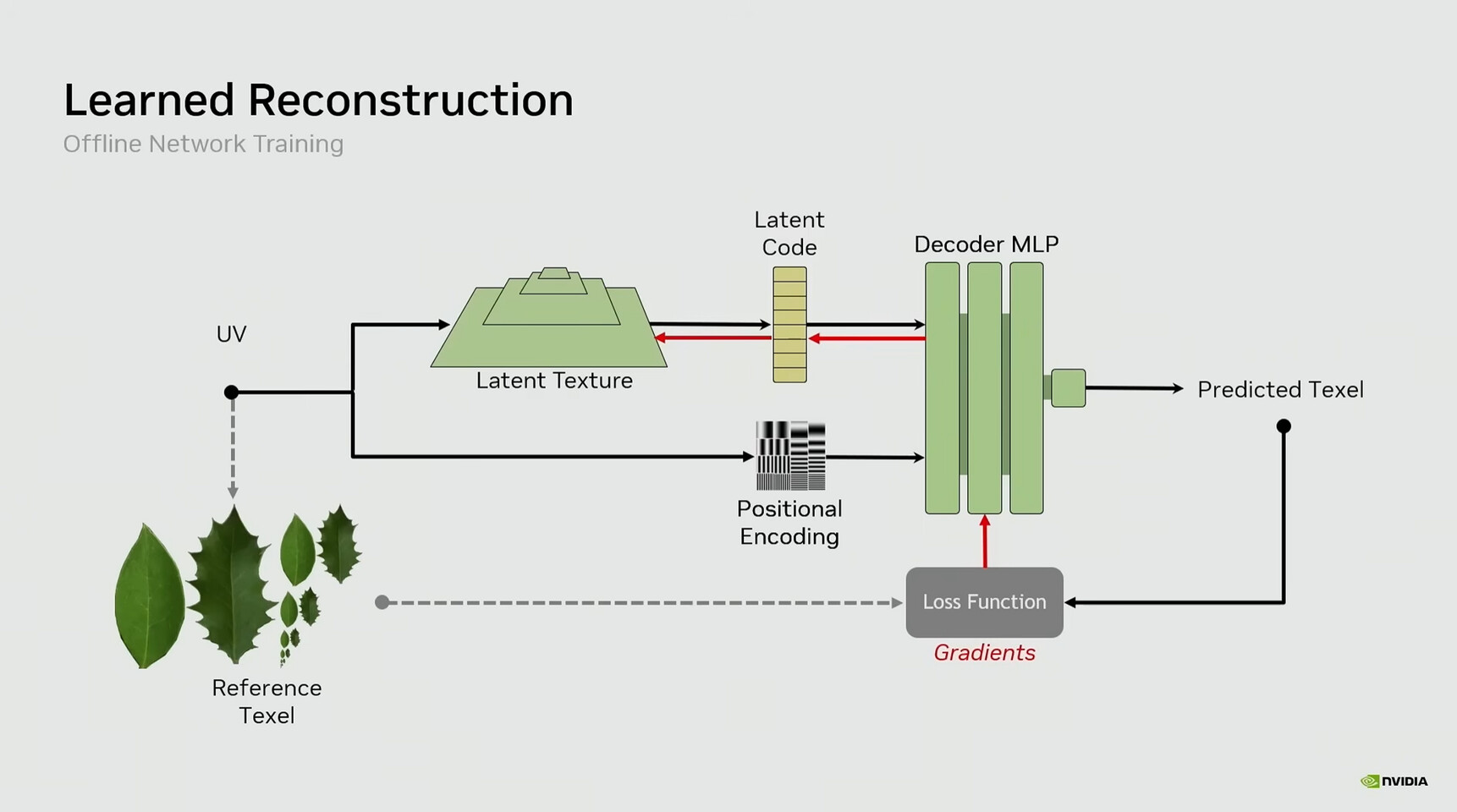

Nvidia's NTC flips the script by replacing those rigid block-based methods with small, specialized neural networks trained specifically on the game's own texture sets and materials. These networks learn the unique patterns of each texel (the fundamental pixel unit in a texture map), and reconstruct them in real time using the GPU's Tensor Cores, the same hardware accelerators that power features like DLSS.

The magic lies in how NTC is designed for efficiency and fidelity.

Unlike generative AI that might hallucinate details, NTC is a highly targeted compressor: the neural network is optimized per material during a preprocessing step, encoding the full texture and mipmap chain into a compact latent representation.

At runtime, it offers two main inference modes. "Inference on Load" transcodes the compressed data into standard BCn format once loaded into VRAM, providing solid savings with minimal ongoing overhead. But the real innovation comes from "Inference on Sample," where decompression happens dynamically per texture sample during shading.

This mode delivers the full 85% VRAM reduction, or even up to 8x, because the GPU never holds the fully decoded textures in memory at all.
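To make the difference between the two modes concrete, here is a toy Python sketch. It is not Nvidia's implementation: the grid sizes, the random (untrained) weights, and the names `decode_texel`, `inference_on_load`, and `inference_on_sample` are all invented for illustration, and the on-load path simply keeps the decoded values instead of re-encoding them to BCn.

```python
import math
import random

random.seed(0)

# All sizes below are hypothetical, chosen only to keep the toy small.
TEX_SIZE = 64     # texture is 64x64 texels
LATENT_SIZE = 16  # latent grid is 16x16 cells
LATENT_DIM = 4    # features stored per latent cell
HIDDEN = 8        # hidden units in the toy decoder network
CHANNELS = 3      # decoded output channels per texel

# A real compressor would train these; here they are random placeholders.
latents = [[[random.uniform(-1, 1) for _ in range(LATENT_DIM)]
            for _ in range(LATENT_SIZE)] for _ in range(LATENT_SIZE)]
w1 = [[random.uniform(-1, 1) for _ in range(LATENT_DIM + 2)]
      for _ in range(HIDDEN)]
w2 = [[random.uniform(-1, 1) for _ in range(HIDDEN)]
      for _ in range(CHANNELS)]


def decode_texel(x, y):
    """Run the tiny network: latent features plus position in, channels out."""
    cell = latents[y * LATENT_SIZE // TEX_SIZE][x * LATENT_SIZE // TEX_SIZE]
    inputs = cell + [x / TEX_SIZE, y / TEX_SIZE]
    hidden = [max(0.0, sum(w * v for w, v in zip(row, inputs))) for row in w1]
    return [1.0 / (1.0 + math.exp(-sum(w * h for w, h in zip(row, hidden))))
            for row in w2]


def inference_on_load():
    """Decode every texel once, then keep the decoded texture resident."""
    return [[decode_texel(x, y) for x in range(TEX_SIZE)]
            for y in range(TEX_SIZE)]


def inference_on_sample(x, y):
    """Decode only the requested texel; nothing decoded is kept around."""
    return decode_texel(x, y)


# Values that must stay in memory under each mode (counts, not bytes).
resident_on_load = TEX_SIZE * TEX_SIZE * CHANNELS
resident_on_sample = (LATENT_SIZE * LATENT_SIZE * LATENT_DIM
                      + HIDDEN * (LATENT_DIM + 2) + CHANNELS * HIDDEN)

texture = inference_on_load()
print(texture[10][20] == inference_on_sample(20, 10))
print(resident_on_load, resident_on_sample)
```

Both paths return the same texel values; what changes is what has to stay resident. The trade is the one described above: on-sample keeps only the latents and weights in memory but pays for a network evaluation on every lookup.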

In benchmarks, this approach not only slashed memory usage dramatically but also produced images closer to the high-quality reference than BCn equivalents at the same footprint.

Accompanying NTC in Nvidia's neural rendering toolkit is Neural Materials, a complementary technique that takes things even further.

Traditional physically based rendering often relies on a stack of up to 19 separate texture channels (albedo, normals, roughness, displacement, and more) to define how light interacts with a surface. Neural Materials compresses this down to as few as eight channels, then lets a tiny neural network evaluate the material properties on the fly.
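The channel counts alone give a rough feel for the saving. The Python sketch below compares per-texel storage for 19 versus 8 channels; the one-byte-per-channel figure is an assumption made purely for illustration, and the size of the small network itself is ignored.

```python
TRADITIONAL_CHANNELS = 19  # from the article: up to 19 channels per material
NEURAL_CHANNELS = 8        # from the article: as few as eight channels
BYTES_PER_CHANNEL = 1      # assumption: 8 bits per channel, pre-compression


def per_texel_bytes(channels: int,
                    bytes_per_channel: int = BYTES_PER_CHANNEL) -> int:
    """Raw bytes needed to describe one texel of a material."""
    return channels * bytes_per_channel


def fraction_saved(before: float, after: float) -> float:
    """Share of the original cost that is no longer needed."""
    return 1.0 - after / before


before = per_texel_bytes(TRADITIONAL_CHANNELS)
after = per_texel_bytes(NEURAL_CHANNELS)
saved = fraction_saved(before, after)
print(f"{before} -> {after} bytes per texel ({saved:.0%} less to store and fetch)")
```

Fewer channels means fewer texture fetches per shaded pixel as well as less memory, which is consistent with the technique being pitched as a speedup and not only a space saving.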

In the same 1080p Tuscan Villa demo, this yielded rendering speedups ranging from 1.4x to a staggering 7.7x with zero quality compromise, freeing up not just memory but also compute resources for more complex scenes.

Together, these technologies address the pipeline at its roots rather than just slapping an upscaler like DLSS on the final output.

The result? Smaller game install sizes. Textures often make up the bulk of a 100 GB title, so slashing them could drop that to a fraction. In return, gamers get lighter patches, reduced download times, and the headroom to fit even more high-fidelity assets into existing hardware without being forced to upgrade their GPUs prematurely.

Of course, no breakthrough comes without trade-offs, though the overhead here appears remarkably light.

Early independent benchmarks on RTX 50-series cards, including the 5070, show a modest 18% performance hit in some cases when running full inference-on-sample mode at 1080p. The cost stays modest largely because the work runs on Tensor Cores that might otherwise sit idle. In many scenarios, the reduced memory traffic actually leads to net gains, and pairing it with DLSS can mask any minor costs.

Nvidia has made the RTX Neural Texture Compression SDK available now via GitHub as part of the broader RTX Kit, complete with tools for developers to integrate it into engines supporting Shader Model 6 and beyond.

It's not locked to Nvidia hardware.

Other approaches exist, such as Intel's Texture Set Neural Compression and similar work by AMD, and Microsoft's DirectX Cooperative Vectors standard aims to make these neural techniques cross-vendor friendly. Even so, NTC stands out for the quiet revolution it represents in how people think about AI in games.

While flashy upscalers grab headlines, this is AI doing the unglamorous but vital work of optimization: shrinking assets, accelerating shading, and unlocking greater complexity without dictating artistic vision.

Game creators retain full control over the look and feel; the neural nets simply store and reconstruct their work more intelligently.

NTC is ultimately about smarter, leaner data handling that makes every byte and every cycle count. In a world where VRAM hunger shows no signs of slowing, NTC feels less like a gimmick and more like the practical evolution gaming desperately needs.