As the web's search giant, Google has helped billions of people find the information they need. But when it comes to answering questions, that's a different story.

Google has long relied on algorithms to understand the meaning of people's queries, by breaking sentences down into keywords.

By analyzing these keywords, the algorithms work out what users intend, match that against Google's massive database of indexed web pages, and then find the most relevant sources of information.

As a result, Google is great at responding to words. But focusing only on keywords makes it difficult for Google to understand phrases.
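To see why keywords alone fall short, here is a minimal sketch of a keyword-only matcher. It is not Google's actual algorithm; the stopword list, the `keywords` helper, and the two flight queries are all hypothetical and chosen purely for illustration.

```python
# Hypothetical stopword list: small words a keyword-only system might discard.
STOPWORDS = {"a", "an", "the", "to", "from", "for", "of", "in"}

def keywords(query: str) -> frozenset:
    """Reduce a query to an unordered set of keywords, dropping stopwords."""
    return frozenset(w for w in query.lower().split() if w not in STOPWORDS)

# Two made-up queries with opposite meanings.
q1 = "flights from paris to london"
q2 = "flights from london to paris"

# Once word order and small words like "to" and "from" are gone,
# both queries collapse into the same set of keywords.
print(sorted(keywords(q1)))
print(keywords(q1) == keywords(q2))
```

In this toy setup the two queries become indistinguishable, even though the people typing them want opposite trips. That lost context is what a phrase-level model is meant to recover.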

Users who are unsure what they are looking for experience this firsthand.

When they type a question into Google Search, they may use vague words. This is because people with questions can sometimes be uncertain, and may lack the ability to describe their intentions.

In his blog post announcing BERT-powered Google Search, Pandu Nayak, Google’s VP of search, called this kind of searching “keyword-ese,” or “typing strings of words that they think we’ll understand, but aren’t actually how they’d naturally ask a question.”

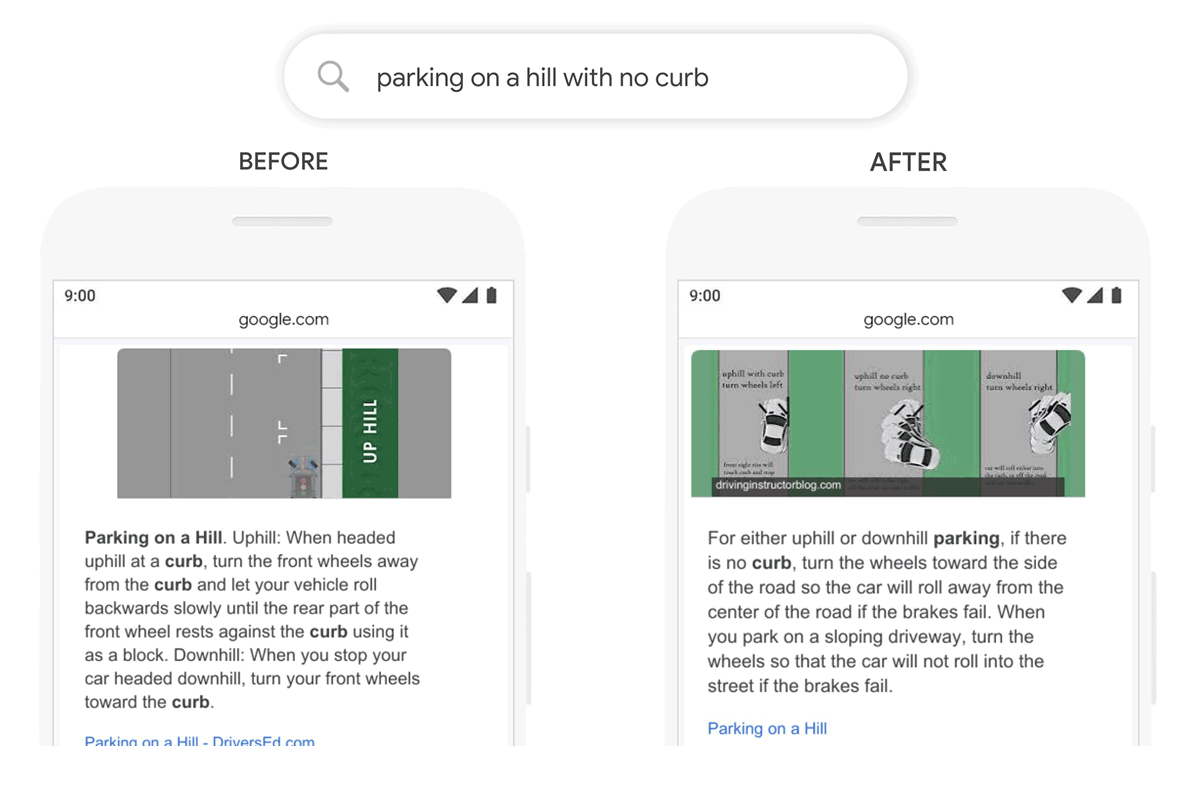

Because understanding keywords isn't the best way to understand questions, Google announced that it is rolling out a machine learning-based language understanding technique called Bidirectional Encoder Representations from Transformers, or BERT.

This should help Google decipher users' search queries based on the context of the language used, rather than on individual words.

According to Google, “when it comes to ranking results, BERT will help Search better understand one in 10 searches in the U.S. in English.”

Nayak said this “[represents] the biggest leap forward in the past five years, and one of the biggest leaps forward in the history of Search.”

For Google Search, using BERT is a subtle change. But there is no doubt that it can make users' lives a bit easier.

And as for websites, Google Search understanding questions better won't affect how their web pages are ranked. However, it should give websites that have the answers the traffic they deserve.

BERT was first introduced as an open-sourced neural network-based technique for natural language processing (NLP) pre-training. This technology enables anyone to train their own state-of-the-art question answering system.

The breakthrough comes from having models process words in relation to all the other words in a sentence, rather than one by one in order.

BERT models can therefore consider the full context of a word by looking at the words that come before and after it, which is particularly useful for understanding the intent behind search queries.
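The idea of relating every word to all the others can be sketched with a toy version of unmasked self-attention, the mechanism at the heart of Transformers. This is only an illustration under heavy assumptions: the two-dimensional word vectors are made up, and real BERT models add learned projections, multiple attention heads, and many stacked layers that are omitted here.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Mix every word vector with ALL the others, to its left and right."""
    dim = len(vectors[0])
    outputs, weights = [], []
    for query in vectors:
        # Score this word against every word in the sentence (scaled dot product).
        scores = [sum(a * b for a, b in zip(query, key)) / math.sqrt(dim)
                  for key in vectors]
        row = softmax(scores)
        weights.append(row)
        # The new representation is a weighted average of all word vectors.
        outputs.append([sum(w * v[d] for w, v in zip(row, vectors))
                        for d in range(dim)])
    return outputs, weights

# Made-up vectors for a made-up phrase; real models learn these from data.
tokens = ["bank", "of", "the", "river"]
toy_vectors = [[1.0, 0.0], [0.1, 0.1], [0.1, 0.1], [0.8, 0.6]]

outputs, weights = self_attention(toy_vectors)
for token, row in zip(tokens, weights):
    print(token, [round(w, 2) for w in row])
```

Each printed row shows how much one word draws on every other word. The first word, "bank", gives nonzero weight to "river" even though "river" comes later in the phrase, which is the bidirectional part of the name.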

But applying it to Google Search wasn't an easy task. The company needed new hardware, turning to Cloud TPUs to serve BERT-powered search results and return relevant information quickly.

"What does it all mean for you? Well, by applying BERT models to both ranking and featured snippets in Search, we’re able to do a much better job helping you find useful information," said Nayak.

"In fact, when it comes to ranking results, BERT will help Search better understand one in 10 searches in the U.S. in English, and we’ll bring this to more languages and locales over time."
