Wikipedia is where the internet goes to settle arguments, research random facts at 2 a.m., and double-check everything from celebrity ages to obscure wars.

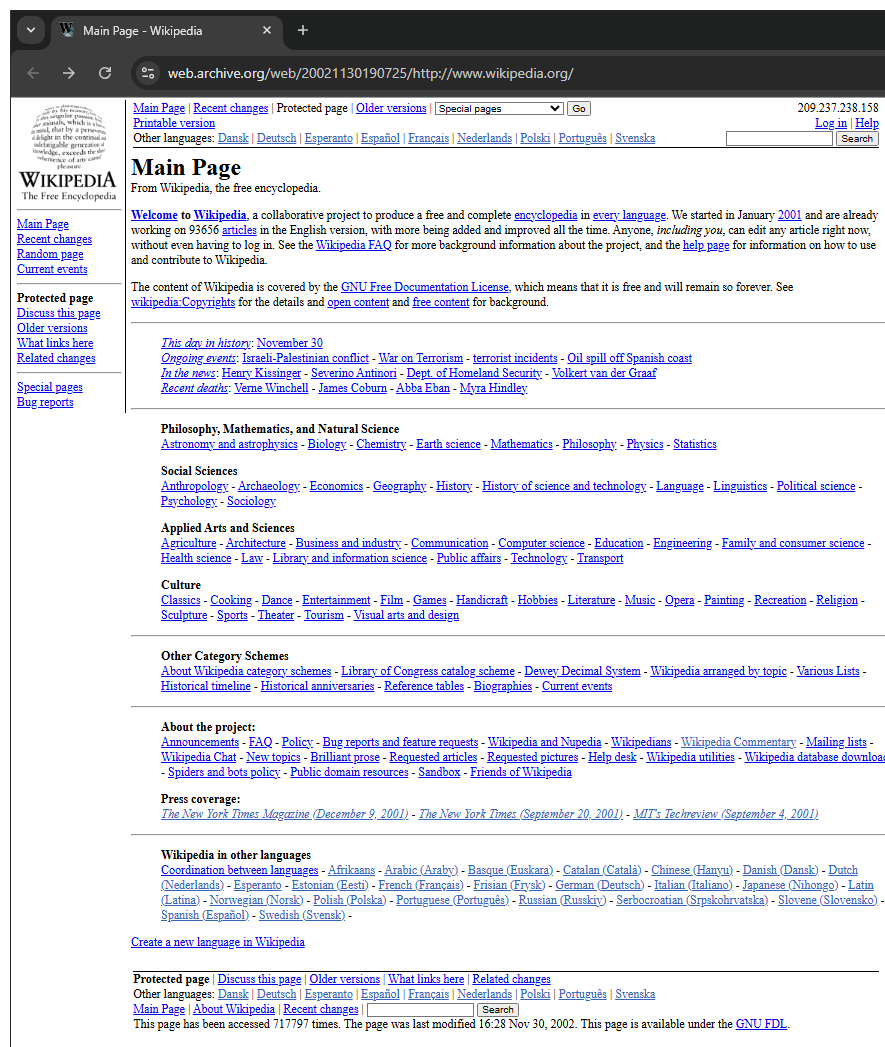

Launched in 2001 by Jimmy Wales and Larry Sanger, it grew out of Nupedia, an earlier project meant to be a formal online encyclopedia with expert-written, peer-reviewed articles. Because that approach proved slow, they introduced a wiki-based site where anyone could contribute.

Practically anyone with an internet connection, regardless of their knowledge and background, can create and edit pretty much anything on Wikipedia.

That wild experiment became... Wikipedia.

Now a free, collaborative online encyclopedia, Wikipedia owes its popularity to the fact that it is created and maintained by a global community of volunteers through open editing, governed by strict policies, moderation systems, and editorial hierarchies.

Not only did this dramatically accelerate Wikipedia’s growth, but it also reshaped how knowledge is shared online—making it one of the most visited websites in the world, and arguably the largest repository of crowd-sourced human knowledge ever created.

And now, after a backlash from its own editors, it is pushing back against AI.

In the long timeline of technological progress, few innovations have stirred such unease as AI.

From uncanny chatbots to hallucinating algorithms, AI's reach now extends into people's workplaces, media, and even the most trusted information platforms. But one of those platforms—Wikipedia—just proved that AI’s encroachment isn’t inevitable.

Wikipedia, powered by a passionate, unpaid community of humans, drew a line in the digital sand and said: "No."

This happened after the Wikimedia Foundation, the nonprofit that operates Wikipedia, quietly rolled out an experimental feature: AI-generated summaries.

Generated by Cohere’s Aya model, the summaries were introduced earlier in 2025 for a small portion of Wikipedia’s mobile users, with the goal of making articles easier to understand for a wider audience.

"Between July and December 2024, the Web team conducted experiments checking how to make it easier for readers to discover information on the wikis. This was part of our annual plan focused on content discovery for readers," the Wikimedia Foundation said on a dedicated page.

The summaries were opt-in and shown to roughly 10% of mobile traffic during a two-week trial.

It sounded helpful in theory—until the community found out.

Volunteer editors, the very people who built Wikipedia into what it is today, reacted with shock and fury.

Complaints flooded Wikimedia’s public discussion boards. Some called the idea “yuck.” Others called it “ghastly.”

But beneath the harsh comments lay far more legitimate, deeply felt concerns.

From its very inception, Wikipedia was designed to collect and crowdsource human knowledge, making it freely accessible to everyone.

Over time, it built a hard-earned reputation as a neutral, fact-based resource, shaped by two decades of relentless dedication from a global network of volunteers committed to accuracy and consistency.

Introducing AI-generated content—especially from systems known to produce factual hallucinations—is seen by many as a direct threat to that legacy.

Editors were quick to highlight glaring errors in early AI-generated summaries, particularly on complex scientific topics such as dopamine and politically sensitive ones such as Zionism. These aren’t obscure pages tucked away in digital corners; they are heavily visited articles, read by millions worldwide.

Wikipedia already grapples with the inherent biases of its human contributors, who come from diverse cultural, linguistic, and ideological backgrounds. The addition of generative AI risks amplifying those biases and introducing new layers of misinformation—errors that could extend far beyond Wikipedia’s pages.

One contributor warned that allowing AI to generate summaries could inflict “immediate and irreversible damage” to Wikipedia’s credibility—harm that no footnote or post-edit correction could repair.

And even if the AI managed to get its facts straight, many editors felt it still didn’t belong.

Wikipedia articles aren’t just about information; they’re about tone. The encyclopedia’s famously neutral voice isn’t easy to replicate. AI, by contrast, tends to lean into wordy, exaggerated prose—overusing adjectives and meandering sentence structures.

In short, it didn’t sound like Wikipedia. And for the editors, that was a dealbreaker.

But the biggest controversy wasn’t just what the Wikimedia Foundation did—it was how it did it. The AI project was launched with minimal community input. For a platform built on consensus and collaboration, that felt like a betrayal.

One editor even speculated that the AI initiative was “resume padding” for foundation staff eager to join the AI gold rush. Others criticized the internal discussions cited as justification, noting that only one person—a foundation employee—had participated.

Wikipedia, after all, is not a tech company. It's a nonprofit project, governed by a delicate balance between paid staff and volunteer contributors. Most pages are written and monitored by people who do it purely out of passion, not profit. Decisions typically follow long debates, talk-page consensus, and, when needed, community votes.

This time, those traditions were bypassed.

Faced with overwhelming resistance, the Wikimedia Foundation backed down. The AI summary trial was suspended almost immediately. Officials acknowledged that they had failed to consult the community and pledged not to proceed without broader involvement in the future.

For now, the door to AI-generated summaries on Wikipedia remains closed, under the watchful eye of its contributors.