Real-time strategy games are one of the best ways to put AIs to the test.

With seemingly unlimited ways for participants to control their units and manage their buildings on a map, there are countless ways to win or lose. This time, Google subsidiary DeepMind has created AlphaStar.

The company had the AI play StarCraft II and showed off its head-to-head matches against professional gamers, in which AlphaStar came out as a clear, undefeated champion.

Here, the AI agent won 10 out of 10 matches against two StarCraft II professionals, using a completely different strategy each time.

TLO and MaNa each played a separate five-game series against AlphaStar back in December 2018, on the Catalyst map, in a slightly outdated version of StarCraft II that was designed to enable this AI research.

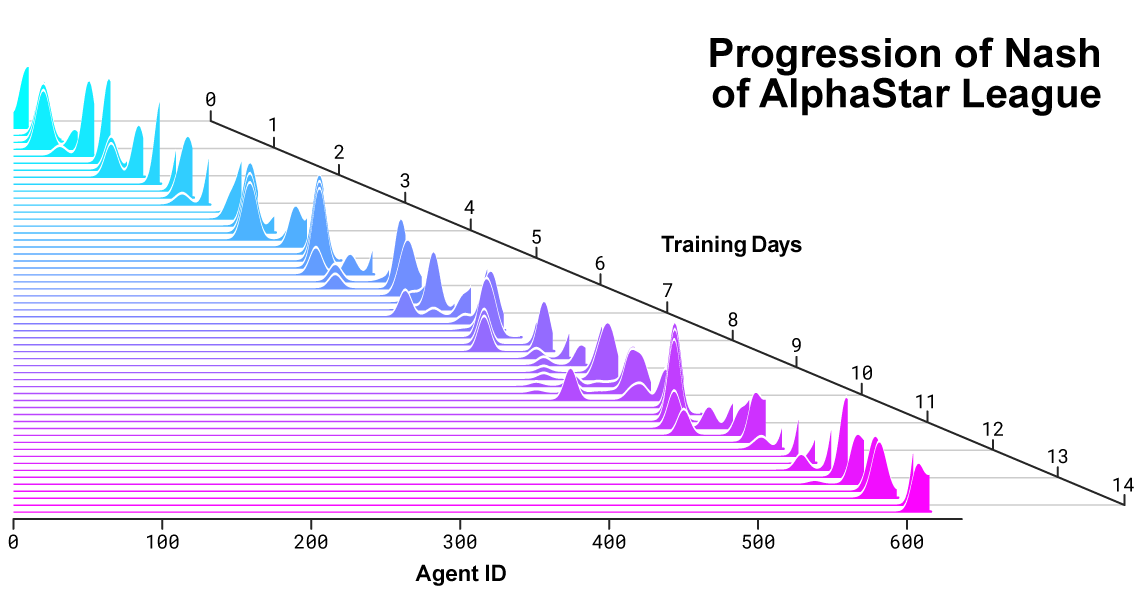

AlphaStar's expertise in the game comes from an in-depth training program that DeepMind calls the 'AlphaStar League'.


Here, DeepMind collected replays of human gamers playing Blizzard Entertainment's real-time strategy game, and started training the AI's neural network on that data.

The company then forked the AI to create more agents, and those were matched against one another in a series of matches.

These forks started with the same abilities as the original, and were made to take on specialties and master different parts of the game to create unique game strategies.

Because StarCraft is such a complex game, there is no single optimal strategy that works in all situations. For this reason, DeepMind trained the machine learning agents by splitting them into hundreds of versions, each pursuing a different approach:

Focusing on technology development; rushing resource collection; enhancing offensive strategies; scattering units under an area-of-effect attack; building complex defenses; and more. In short, DeepMind wanted to teach the AI to be the best in all strategies.

The AlphaStar League was run for one week, with each match producing new information that helped refine the AI.
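The fork-and-play loop described above can be illustrated with a toy sketch. Everything in it is an assumption made for illustration: the `Agent` class, the scalar `skill` standing in for a neural network, the logistic win model, and the fixed learning step are all hypothetical and are not DeepMind's implementation.

```python
import itertools
import random
from dataclasses import dataclass


@dataclass
class Agent:
    name: str
    skill: float  # hypothetical scalar standing in for network weights
    wins: int = 0


def fork(parent: Agent, n: int, rng: random.Random) -> list:
    """Create n copies of the parent, each nudged slightly so they diverge."""
    return [
        Agent(f"{parent.name}-{i}", parent.skill + rng.uniform(-0.1, 0.1))
        for i in range(n)
    ]


def play(a: Agent, b: Agent, rng: random.Random) -> Agent:
    """Return the winner; the stronger agent wins more often (toy logistic model)."""
    p_a = 1 / (1 + 10 ** (b.skill - a.skill))
    return a if rng.random() < p_a else b


def run_league(seed_agent: Agent, n_forks: int, rounds: int, rng: random.Random) -> list:
    """Fork the seed agent, then play repeated round-robins inside the league."""
    league = fork(seed_agent, n_forks, rng)
    for _ in range(rounds):
        for a, b in itertools.combinations(league, 2):
            winner = play(a, b, rng)
            winner.wins += 1
            # Toy "learning" step: both players gain a little from the match.
            a.skill += 0.01
            b.skill += 0.01
    return league
```

Calling `run_league(Agent("base", 1.0), n_forks=4, rounds=3, rng=random.Random(0))` returns four forks that have each played every other fork three times. In a real system the learning step would be a reinforcement learning update rather than a fixed increment.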

During the week, AlphaStar played the equivalent of 200 years' worth of StarCraft II. By the end of the session, DeepMind selected the five individual agents that had the least exploitable strategies and the best chance to win.
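The article does not say how "least exploitable" was measured. As a minimal sketch, one could assume a table of head-to-head win rates and rank each agent by its worst result against any opponent. The function name `least_exploitable` and the `win_rates` layout are hypothetical, and worst-case win rate is only a stand-in for whatever criterion DeepMind actually used.

```python
from typing import Dict, List


def least_exploitable(win_rates: Dict[str, Dict[str, float]], k: int) -> List[str]:
    """Pick the k agents whose worst-case win rate against any opponent is highest.

    win_rates[a][b] is the fraction of games agent a won against agent b.
    """
    def worst_case(agent: str) -> float:
        # An agent is only as safe as its weakest matchup.
        return min(
            rate for opponent, rate in win_rates[agent].items() if opponent != agent
        )

    ranked = sorted(win_rates, key=worst_case, reverse=True)
    return ranked[:k]


# Made-up numbers for three hypothetical agents.
rates = {
    "a": {"b": 0.60, "c": 0.55},
    "b": {"a": 0.40, "c": 0.90},
    "c": {"a": 0.45, "b": 0.10},
}
print(least_exploitable(rates, 2))
```

Here agent `b` beats `c` most of the time but still ranks below `a`, because `a` has no matchup in which it loses more often than it wins.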

These five agents were then matched against TLO and won the first five games.

However, the games were said to be unfair because Protoss (the sapient humanoid race, one of the races in StarCraft II) isn't TLO's speciality.

Having seen the AI defeat a pro who was playing his non-preferred race, DeepMind decided to put AlphaStar up against a Protoss expert.

This time, DeepMind matched AlphaStar with MaNa, a two-time champion of major StarCraft II tournaments. Before the match, AlphaStar got another week of training, including some added knowledge gained from playing against TLO.

When AlphaStar and MaNa met, the result was similar.

Just like TLO before him, MaNa put up a valiant effort but fell short in every match against AlphaStar. The AI once again won all five of its matches against its human opponent, racking up a 10-0 record in its first 10 matches against the two professional players.

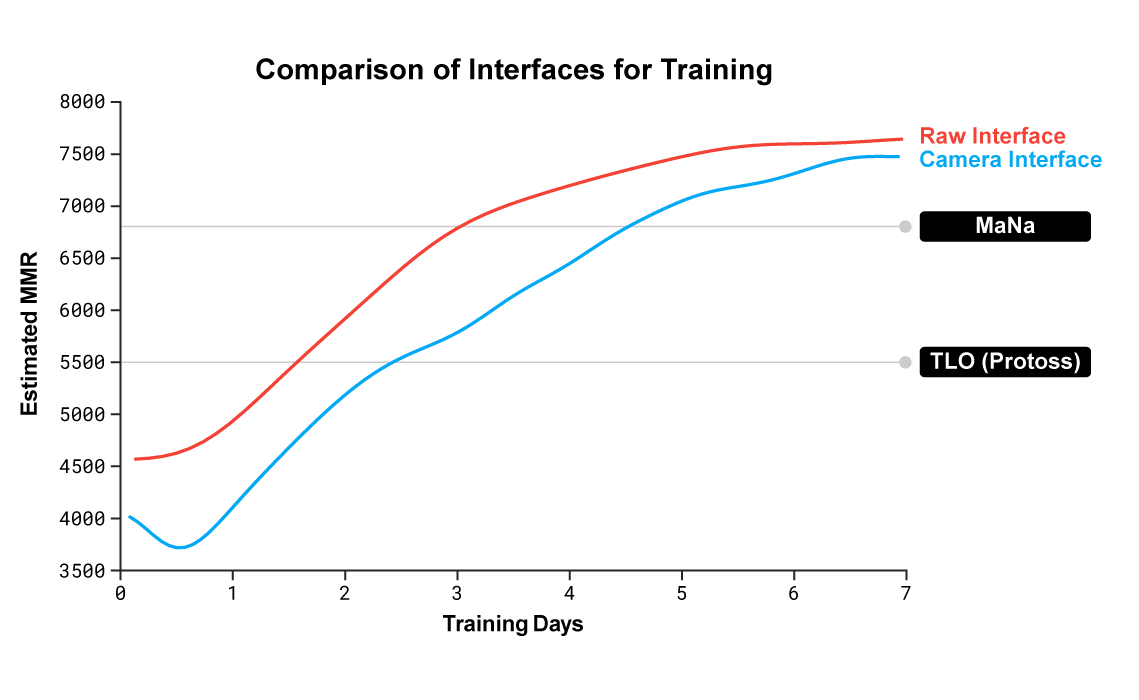

When competing against TLO and MaNa, AlphaStar had some major advantages, as it saw the games differently from human players. While its view was still restricted by the fog of war, it could see the map entirely zoomed out.

This gave it more information about any visible enemy unit, as well as its own base.

Because the AI got its information directly from the game engine, rather than having to observe the game screen, it knew instantly when an enemy unit was weak without having to look at it. It also had the ability to perform more "actions per minute" than a human.

Because of these advantages, the AI didn't have to split its attention between different parts of the map like human players would.

Following the broadcast of the recorded matches, DeepMind wanted to make things fair. This time, the company introduced another version of AlphaStar for MaNa to compete with in a live match. This version was similar to the previous AlphaStar, but it could not see the map as a whole (it had to use the camera interface instead) and was handicapped to play more like a human would.

And here, MaNa was able to exploit some of the AI's shortcomings and won the game.

After 10 straight losses in the earlier games, the two pros finally scored a win against the AI when MaNa took on this slightly different AlphaStar in a live match streamed by Blizzard and DeepMind.

This huge blow gave AlphaStar its first loss against the pro players.

In conclusion, AlphaStar is inarguably a strong player, but that strength comes with some important caveats.

First, when DeepMind handicapped the agent to play like a human, restricting its view to just the screen and letting it click only on visible units, the limited perception and added delay made the AI weaker.

Second, the AlphaStar that was defeated by MaNa didn't have enough time to train. According to DeepMind, this AlphaStar was up to speed with the new view of the game, but the company didn't have the opportunity to test the AI against a human pro before taking on MaNa live on the stream.

This means the AI was still under development, so for that and other reasons, it was never going to be as strong as intended.

Third, AlphaStar was designed to play only as the Protoss race, and only against Protoss. It was never trained to understand, or even see, what a Zerg (advanced arthropodal aliens, another race in StarCraft II) unit looks like, for example. The AI was also designed to play against a single opponent, and only on a single map.

And its most successful versions were only effective when using micro-heavy units.

Here, it can be concluded that AlphaStar, while indeed a very strong and capable player, has only mastered a tiny fraction of the game.

As DeepMind continues to develop this AI, there is no doubt that AlphaStar will naturally grow stronger.

But as the AI was originally created for research, AlphaStar gave good examples of how an AI can efficiently anticipate the results of every move it makes.

This knowledge could be applied in many other areas where AIs must repeatedly make decisions that affect complex and long-term series of outcomes.
