AI makes computers smarter. Trained on data, AI can make decisions on its own based on what it has learned, and make them automatically at lightning speed.

This can improve the process of delivering experiences on the web. And in Google's case, it can bring a lot of improvements to how its search engine works.

In fact, Google said that with recent advancements in AI, "we’re making bigger leaps forward in improvements to Google than we’ve seen over the last decade."

During its 2020 Search On livestream, Google shared how the company is bringing AI into its products.

The company said that it has invested deeply in language understanding research, and introduced the BERT language model to help deliver more relevant results in Google Search.

And this time, Google said that BERT is now used in almost every query in English.

This is a huge step up from 2019, when Google said that BERT was used on only 10% of English queries.

At the event, Google made plenty of other announcements too.

Besides the update that makes Google Search capable of understanding users' hums, there are also changes in how it understands spelling.

In its blog post, Google said that it has a new spelling algorithm that uses "a deep neural net to significantly improve our ability to decipher misspellings."

Google said this change "makes a greater improvement to spelling than all of our improvements over the last five years."

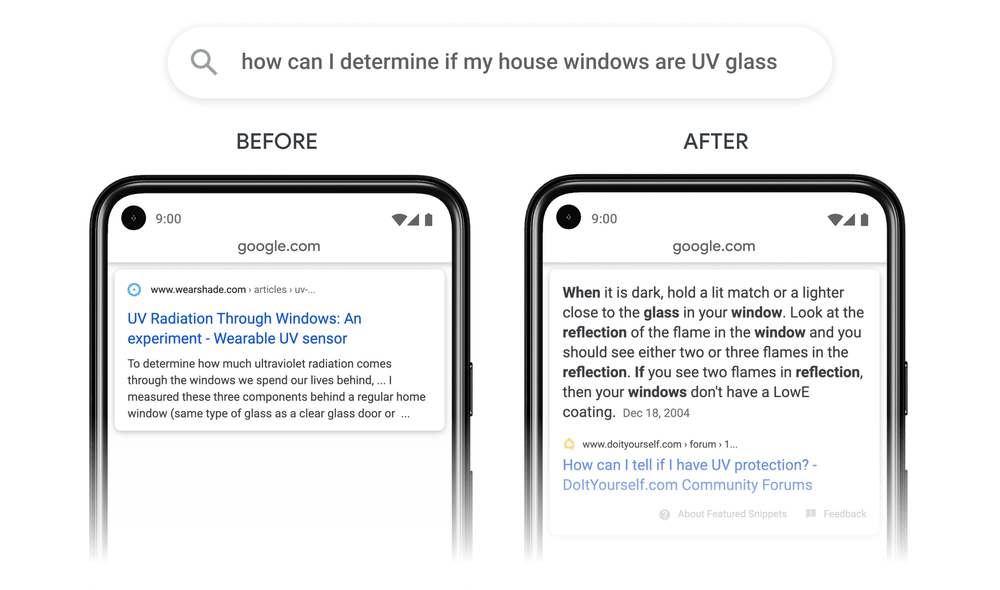

Then there is the way Google understands passages.

With an update to its AI, Google can index individual passages within a page, rather than only the page as a whole.

Previously, Google could not do that, but with the update, Google said that "we've recently made a breakthrough in ranking and are now able to not just index web pages, but individual passages from the pages."

Google said that the update will improve 7% of search queries across all languages as it rolls out globally, so it can "find that needle-in-a-haystack information you’re looking for."
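To make the idea of passage-level ranking concrete, here is a minimal Python sketch. It is not Google's method, which the company describes only as a neural ranking breakthrough. The sketch swaps in a plain word-overlap score, and the function names (`split_passages`, `score`, `best_passage`) and the sample page are hypothetical, made up purely for illustration.

```python
import re


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def split_passages(page: str) -> list[str]:
    """Treat each blank-line-separated paragraph as one passage (an assumption)."""
    return [p.strip() for p in page.split("\n\n") if p.strip()]


def score(query: str, passage: str) -> float:
    """Toy relevance score: the fraction of query words found in the passage."""
    query_words = set(tokenize(query))
    if not query_words:
        return 0.0
    passage_words = set(tokenize(passage))
    return len(query_words & passage_words) / len(query_words)


def best_passage(query: str, page: str) -> tuple[float, str]:
    """Return (score, passage) for the passage that best matches the query."""
    passages = split_passages(page)
    if not passages:
        return (0.0, "")
    scored = [(score(query, p), p) for p in passages]
    return max(scored, key=lambda pair: pair[0])


if __name__ == "__main__":
    # Hypothetical page: only the middle paragraph answers the question.
    page = (
        "Our store sells windows, doors, and frames for every home.\n\n"
        "To check whether window glass is UV blocking, shine a UV flashlight "
        "through it and see if a UV-reactive card still glows.\n\n"
        "Contact us for a free quote."
    )
    top_score, passage = best_passage("is window glass uv blocking", page)
    print(top_score, passage)
```

Scoring passages separately lets one relevant paragraph surface even when the rest of the page is about something else, which is the "needle in a haystack" case Google describes.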

Additionally, Google said that it "applied neural nets to understand subtopics around an interest, which helps deliver a greater diversity of content when you search for something broad."

Google said that by the end of the year, for a broad search such as "home exercise equipment," it will "understand relevant subtopics, such as budget equipment, premium picks, or small space ideas, and show a wider range of content for you on the search results page."

Google also said that it is deepening its understanding of data.

On the web, sources of data can often be buried inside large data sets that are not easily accessible. Google has been working on the Data Commons Project, which is an open knowledge database of statistical data, since 2018. With it, Google could start bringing data sets together.

And with the update, Google Search is making the information more accessible.

"Now when you ask a question like 'how many people work in Chicago,' we use natural language processing to map your search to one specific set of the billions of data points in Data Commons to provide the right stat in a visual, easy to understand format. You’ll also find other relevant data points and context—like stats for other cities—to help you easily explore the topic in more depth," Google explained.

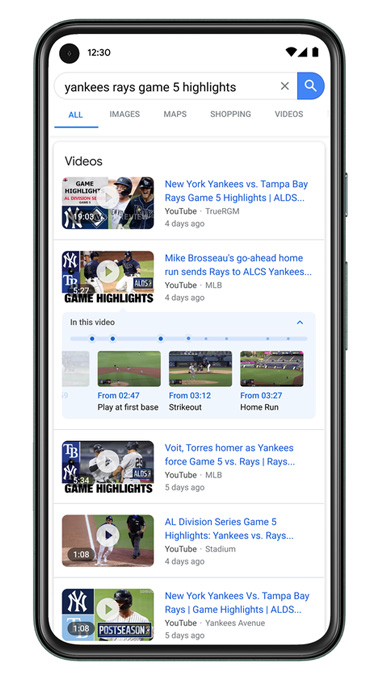

Next is Google's understanding of key moments in videos.

Using AI, Google can understand "the deep semantics of a video and automatically identify key moments." This lets it tag moments inside videos so that users can navigate them like chapters of a book.

This feature was first announced more than a year ago, and Google now expects that "10 percent of searches on Google will use this new technology."

"With investments in AI, we’re able to analyze and understand all types of information in the world, just as we did by indexing web pages 22 years ago."

"We’re pushing the boundaries of what it means to understand the world, so before you even type in a query, we’re ready to help you explore new forms of information and insights never before available."

Beyond catering to everyday users, Google is also rolling out updates that journalists can benefit from.

The company has launched a suite of tools to help them work more efficiently, securely, and creatively through technology. It has also launched Pinpoint, a tool that can quickly sift through documents.

And for researchers and learners, there is also an update that makes use of Google Lens and augmented reality (AR), bringing information to Google Search in 3D.

And lastly, Google is also improving users' access to quality information during the COVID-19 pandemic.

Google is in fact known to make thousands of improvements to its search engine every single year. But rarely does Google detail the improvements it makes.

During Search On 2020, Google deliberately showcased how AI is improving its search engine, "to ensure people find them helpful."

"We aim to help the open web thrive, sending more traffic to the open web every year since Google was created," said Google.