Deep learning lets computers build an understanding of something by learning representations of data, as opposed to following task-specific algorithms.

This has revolutionized our approach to making machines understand and learn a vast array of processes. But when teaching AI systems language, researchers usually rely on annotations that describe how words work. Traditionally, language-learning computer systems are trained on sentences that humans have annotated to describe the structure and meaning behind the words.

This method has been used by most, if not all, language-learning AIs, such as those behind search engines, chatbots, natural-language database querying, and virtual assistants, to name a few.

While the approach has proven capable, the data annotation process is often time-consuming. It can also raise complications around how to label images correctly and how to reflect natural speech patterns accurately.

In many ways, the strategy is also somewhat unnatural.

For this reason, researchers from MIT's Center for Brains, Minds, and Machines (CBMM) and Computer Science and Artificial Intelligence Laboratory (CSAIL) have developed another approach that brings machine learning a step closer to how humans learn.

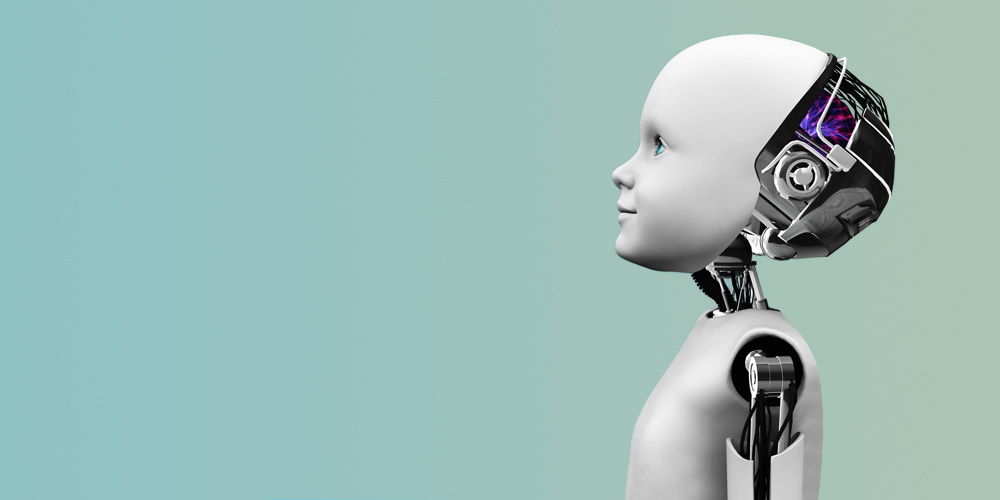

The strategy is to create a semantic parser that lets machines learn through observation. This is like having a computer learn the way a human child does, observing scenes and making connections by itself.

Because it mimics how a human child learns a new language, the strategy has the potential to greatly extend computing’s capabilities.

The system studies captioned videos, and the AI links the words in each caption to the objects and actions on screen to determine how accurate a description is. The AI then turns the potential meanings into logical mathematical expressions, automatically picking the expression that most closely represents what it thinks is going on.
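The selection step can be illustrated with a toy sketch. This is not the MIT parser: here a candidate meaning is assumed to be a plain set of predicate tuples, the video is assumed to be already reduced to a set of observed facts, and the names `score` and `best_form` are hypothetical. The sketch only shows the idea of scoring each candidate against what was seen and keeping the best match.

```python
from typing import FrozenSet, List, Tuple

# Assumption: a "logical form" is just a set of (predicate, arg, ...) tuples.
Fact = Tuple[str, ...]
LogicalForm = FrozenSet[Fact]


def score(form: LogicalForm, video_facts: FrozenSet[Fact]) -> float:
    """Fraction of the form's predicates that were observed in the video."""
    if not form:
        return 0.0
    return len(form & video_facts) / len(form)


def best_form(candidates: List[LogicalForm],
              video_facts: FrozenSet[Fact]) -> LogicalForm:
    """Pick the candidate meaning that best matches the observed facts."""
    return max(candidates, key=lambda form: score(form, video_facts))


# Hypothetical facts extracted from a video captioned "the person picks up the apple".
video_facts = frozenset({("person", "x"), ("apple", "y"), ("pick_up", "x", "y")})

# Hypothetical candidate meanings for that caption.
candidates = [
    frozenset({("person", "x"), ("apple", "y"), ("pick_up", "x", "y")}),
    frozenset({("person", "x"), ("apple", "y"), ("put_down", "x", "y")}),
    frozenset({("person", "x"), ("chair", "y"), ("pick_up", "x", "y")}),
]

chosen = best_form(candidates, video_facts)
print(sorted(chosen))
```

In this example the first candidate wins because all three of its predicates are observed, while the other two each contain one predicate the video does not support.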

With this strategy, the AI starts out with its own list of potential meanings and little idea of what it is seeing. With further training, the AI gradually narrows down the possibilities, and can then use what it has learnt about the structure of the language to accurately predict a new sentence’s meaning, this time without any video.
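The narrowing-down idea can be sketched as a simple elimination process. The code below is an illustrative assumption, not the researchers' method: each word starts with every concept seen alongside it, and each new captioned scene removes the concepts that were absent. The function names and the uppercase concept labels are hypothetical.

```python
from typing import Dict, List, Optional, Set, Tuple


def narrow_meanings(examples: List[Tuple[str, Set[str]]]) -> Dict[str, Set[str]]:
    """Keep, for each word, only the concepts present in every scene it appeared in."""
    hypotheses: Dict[str, Set[str]] = {}
    for caption, concepts in examples:
        for word in caption.split():
            if word not in hypotheses:
                hypotheses[word] = set(concepts)   # first sighting: anything goes
            else:
                hypotheses[word] &= concepts       # later sightings: eliminate
    return hypotheses


def predict(sentence: str, hypotheses: Dict[str, Set[str]]) -> List[Optional[str]]:
    """Map each word to its concept if exactly one hypothesis remains, else None."""
    result: List[Optional[str]] = []
    for word in sentence.split():
        options = hypotheses.get(word, set())
        result.append(next(iter(options)) if len(options) == 1 else None)
    return result


# Hypothetical (caption, concepts observed in the video) training pairs.
examples = [
    ("person lifts apple", {"PERSON", "LIFT", "APPLE"}),
    ("person lifts chair", {"PERSON", "LIFT", "CHAIR"}),
    ("person drops apple", {"PERSON", "DROP", "APPLE"}),
    ("dog lifts ball", {"DOG", "LIFT", "BALL"}),
    ("dog drops apple", {"DOG", "DROP", "APPLE"}),
]

hypotheses = narrow_meanings(examples)
print(predict("dog lifts apple", hypotheses))  # a new sentence, no video needed
```

After these five examples, "dog", "lifts", and "apple" each have a single remaining meaning, so the unseen sentence can be interpreted without a video. A word such as "drops" is still ambiguous between two concepts and would need more examples.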

Eventually, the AI can understand what's what.

According to the researchers, this approach could expand the types of data that can be used and reduce the effort required to train parsers. The strategy is considered a "weakly supervised" approach that enables a few annotated sentences to be combined with more easily acquired captioned videos to boost performance.

Annotations can help speed up the process, but the strategy doesn't strictly need them for the AI to learn.

This makes the approach more flexible, as the system observes its environment on its own. It can, for example, learn from how people actually speak, not just from formal language.

The childlike method could indeed speed up AI's learning process, and make it capable of handling uncommon languages that rarely get AI-friendly annotations.

MIT envisions robots that could adapt to the linguistic habits of the people around them, even when faced with sentence fragments and other signs of informal dialog. The process could also improve natural interaction between humans and personal robots in the future, allowing robots to constantly observe, and learn from, the interactions going on around them.

As explained by co-author Andrei Barbu, a researcher in the CSAIL at MIT:

Furthermore, the parser could also help researchers to better understand how young children learn language, as explained by co-author Boris Katz, a principal research scientist and head of the InfoLab Group at CSAIL:

MIT is one of the birthplaces of new technologies and brilliant minds. It has been focusing on AI and machine learning for quite some time, and has even dedicated a $1 billion project to reshaping its academic program and opening a college dedicated to the AI field.