With the advent of AI products and the hype they create, Nvidia sees itself as responsible for at least some part of it.

This is because the California-based company makes graphics processing technologies and also offers what it calls AI Foundations, a service that allows businesses to train large language models (LLMs) on their own proprietary data. But realizing that generative AIs can sometimes hallucinate, Nvidia wants to put AI Foundations to good use.

And that is by introducing 'NeMo Guardrails', a tool designed to help developers ensure their generative AI apps are accurate, appropriate and safe.

What this tool does is allow developers to enforce different kinds of limits on their in-house LLMs.

The goal is to lessen the chances of AIs hallucinating.

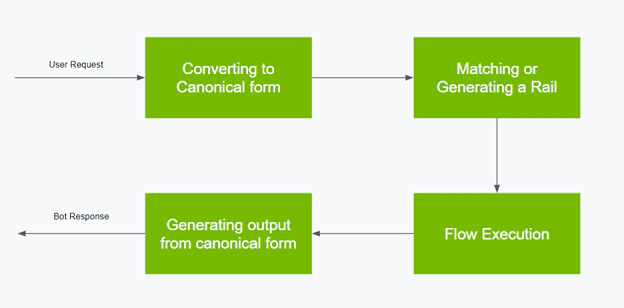

As explained by Jonathan Cohen, Nvidia's vice president for applied research, in a blog post, NeMo Guardrails provides developers with a way to integrate rules-based systems.

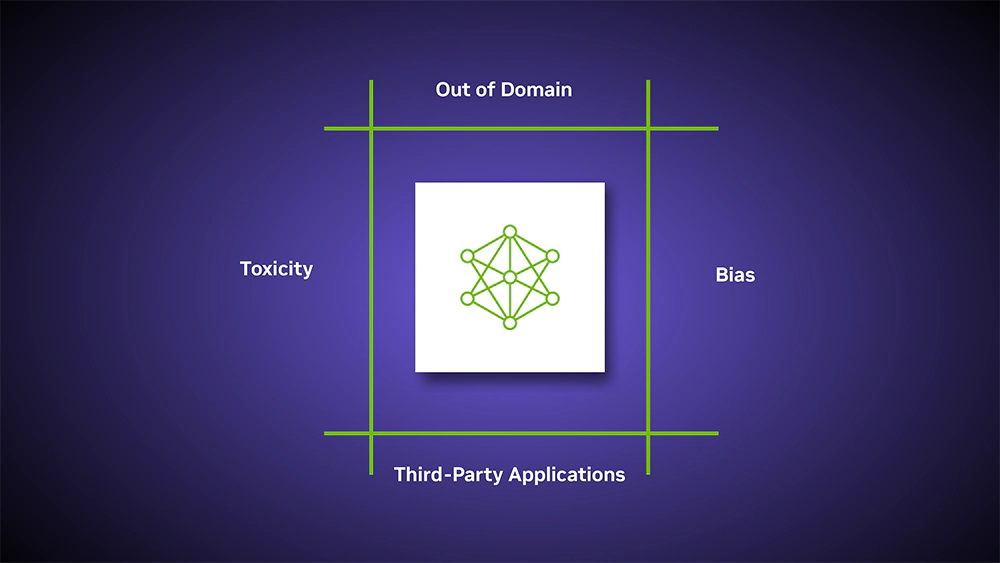

NeMo Guardrails enables developers to set up three kinds of boundaries:

- Topical guardrails: to prevent apps from veering off into undesired areas. For example, they keep customer service assistants from answering questions about the weather.

- Safety guardrails: to ensure apps respond with accurate, appropriate information. They can filter out unwanted language and enforce that references are made only to credible sources.

- Security guardrails: to restrict apps to making connections only to external third-party applications known to be safe.
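A topical guardrail like the first item above can be pictured as a rule that runs before the model is consulted. The sketch below is only an illustration of that idea in plain Python, not the NeMo Guardrails API: the names `CANNED_REPLIES`, `guarded_reply` and `fake_llm` are hypothetical, and the keyword matching is deliberately simplistic.

```python
from typing import Callable, Dict

# Hypothetical rule table (topic keyword -> fixed reply), for illustration only.
CANNED_REPLIES: Dict[str, str] = {
    "weather": "Sorry, I can only help with questions about your order.",
    "politics": "Sorry, I can't discuss that topic.",
}


def guarded_reply(user_message: str, llm: Callable[[str], str]) -> str:
    """Return a fixed reply if a topic rule matches; otherwise ask the model."""
    text = user_message.lower()
    for keyword, reply in CANNED_REPLIES.items():
        if keyword in text:
            # The rule fires before the model is ever called.
            return reply
    return llm(user_message)


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call, used only in this demonstration."""
    return f"(model answer to: {prompt})"


if __name__ == "__main__":
    print(guarded_reply("What's the weather like today?", fake_llm))
    print(guarded_reply("Where is my package?", fake_llm))
```

Because the off-topic reply is returned by ordinary control flow, it does not depend on the model choosing to follow a prompt.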

This should help stop a chatbot from blurting out inaccurate information, ensuring its responses are "accurate, appropriate, on topic, and secure," he wrote.

The goal is to "detect and mitigate hallucinations."

"You can write a script that says, if someone talks about this topic, no matter what, respond this way," Cohen said. "You don't have to trust that a language model will follow a prompt or follow your instructions. It's actually hard-coded in the execution logic of the guardrail system what will happen."

Using NeMo Guardrails, companies can also set safety and security limits that are designed to ensure their LLMs pull accurate information and connect to apps that are known to be safe.
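As a rough picture of what such safety and security limits could look like, here is a minimal Python sketch. It is not taken from NeMo Guardrails: the word list, the host allowlist and the function names `passes_safety` and `passes_security` are all assumptions made for illustration.

```python
from urllib.parse import urlparse

# Hypothetical policy values, chosen for illustration only.
BANNED_WORDS = {"damn", "stupid"}
ALLOWED_HOSTS = {"api.example.com", "search.example.com"}


def passes_safety(response: str) -> bool:
    """Safety check: reject responses that contain unwanted language."""
    words = {w.strip(".,!?;:\"'").lower() for w in response.split()}
    return words.isdisjoint(BANNED_WORDS)


def passes_security(url: str) -> bool:
    """Security check: allow connections only to hosts on the allowlist."""
    return urlparse(url).hostname in ALLOWED_HOSTS


if __name__ == "__main__":
    print(passes_safety("Your order ships Monday."))
    print(passes_security("https://api.example.com/v1/search"))
```

A real deployment would need far more robust checks than keyword and hostname matching; the point is only that these limits are enforced by code outside the model.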

According to Nvidia in a blog post, the tool works with all LLMs, including OpenAI's highly praised ChatGPT, and should also work with all the tools enterprise developers already use.

And to keep the tool broadly useful, Nvidia claims that nearly any software developer should be able to use it.

"No need to be a machine learning expert or data scientist," the company said.

What's more, Nvidia is also open-sourcing NeMo Guardrails on GitHub.

With the introduction of the tool, Nvidia is incorporating NeMo Guardrails into its existing NeMo framework for building generative AI models.

Business customers can gain access to NeMo through the company’s AI Enterprise software platform. And of course, Nvidia also offers the framework through its AI Foundations service.

The release of NeMo Guardrails comes after some of the most high-profile generative AIs, including ChatGPT and Google Bard, came under fire for their tendency to hallucinate information.

The issue stems from how these systems are trained.

Chatbots are the interface between users and LLMs, which have been trained using vast amounts of publicly available data found on the internet. The problem is that not all the data the AIs were trained on are factually accurate. The data sets can contain opinions, biases, and unsubstantiated conclusions.

Since generative AIs are trained to respond to their human users, the AI models can sometimes come up with nonexistent information, simply because they weren't trained on the subject users are asking about.

With little to no knowledge about the subject they're asked about, generative AIs "hallucinate" answers by making up new information that can be illogical, offensive, or even downright creepy.

Because the chatbots can veer off into fantasy or create outright lies, the answers they give can be irrelevant, nonsensical, or factually incorrect.

All that, just to respond to users' queries.

"Nvidia made NeMo Guardrails, the product of several years’ research, open source to contribute to the developer community’s tremendous energy and work on AI safety," the company said.

"Together, our efforts on guardrails will help companies keep their smart services aligned with safety, privacy and security requirements so these engines of innovation stay on track."
