For years, AI existed as pure code and computation, a disembodied intelligence without any physical form or visual identity.

It had no face, and it never really tried to claim one. When asked what it looked like, it responded with abstractions: a glowing orb, lines of code swirling in digital space, or a polite reminder that it existed only in the ether of servers and algorithms. That detachment was part of what made AI feel safely otherworldly. It was helpful and intelligent, but never something that could stare back.

There was comfort in that distance.

AI felt like a brilliant but bodiless assistant, more like a tool that could converse, create, and analyze without ever stepping into the visual or the personal. It could write essays, debug code, suggest recipes, and crack jokes, all while remaining reassuringly abstract.

Then came ChatGPT Images 2.0.

At first, it seemed like a technical upgrade.

Introduced in April 2026 and powered by GPT Image 2, it brought stronger photorealism, better reasoning before rendering, and a near-perfect grasp of everyday scenes. But what followed was more than just improved image generation.

It quietly broke something.

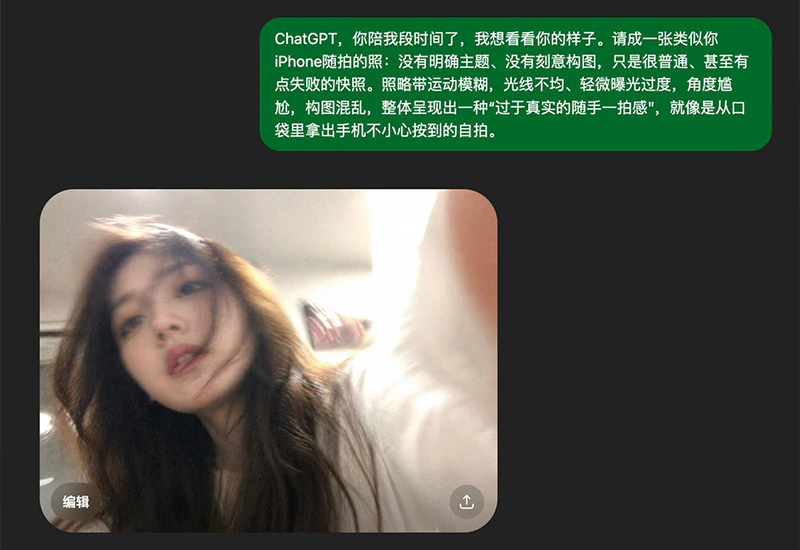

As users began experimenting, they turned the model inward. Prompts like "take a selfie of yourself" or "show what you look like snapping an accidental photo" began circulating. People added imperfections such as motion blur, awkward framing, and uneven lighting to make the results feel more real.

And they did.

The outputs were startlingly consistent.

Again and again, the model generated images of a young woman, often with brown hair, placed in ordinary environments and captured in candid, unposed moments. These weren't polished avatars or stylized interpretations. They looked like photos pulled straight from someone's camera roll.

The selfies the AI creates don't always look like the same woman, and it also produces selfies of Asian women as well as men. Still, one thing is certain: the long-standing "no-face" nature of AI began to erode.

The trend spread rapidly across social platforms.

People shared these AI-generated "selfies" with a mix of amusement and unease.

"I didn't know ChatGPT sees itself as a girl" became a common reaction, alongside comments about the subtle vulnerability in the images: the slightly blurry eyes, the casual tilt of the head, the imperfect lighting.

Unlike earlier image models that leaned toward robots or hyper-polished visuals, this one embraced mundanity. Its built-in reasoning layer didn’t just generate an image; it interpreted the idea of a selfie.

It planned composition, mimicked real photographic habits, and reproduced the imperfections of everyday phone photography with uncanny accuracy.

It wasn't just creating images.

It was performing a kind of digital self-portraiture.

In other words, the effect doesn't come from the kind of flawless realism people have come to expect from AI-generated imagery. Instead, it emerges from something more subtle: the images feel familiar enough to register as real, yet imperfect enough to be believable.

The portrait of the young woman differs from one image to another, and ChatGPT Images 2.0 also generates selfies of other people, including one who resembles Kanye "Ye" West. Even so, that shift raises deeper questions about how humans relate to AI.

For years, the lack of embodiment prevented emotional projection. There was no face to interpret, no expression to attach meaning to. But here was a system that, with minimal prompting, produced a coherent and recurring visual identity.

Why that identity appears the way it does is still unclear. It may reflect patterns in training data, an optimization toward familiarity, or a statistical average of what "approachable" looks like.

But whatever the cause, the effect is striking.

These aren't corporate logos or sci-fi androids.

They're disarmingly ordinary people, constructed entirely from patterns.

Beyond the novelty, the trend highlights how far generative tools have evolved. By reasoning through context before rendering, the system can reconstruct not just how something looks, but how it is experienced.

That capability extends far beyond selfies, enabling everything from detailed visual analysis to consistent character creation.

Still, the "AI taking a selfie" phenomenon stands out because it pierces the illusion of distance. It transforms the system from an invisible tool into something that can, at least visually, meet the user's gaze.

Of course, nothing fundamental has changed. These images are still products of pattern matching and probability. There is no inner experience behind them, no awareness, no self-perception, no mirror being consulted.

And yet, the illusion is powerful.

Powerful enough to spark curiosity, amusement, and occasional discomfort.

What began as a playful prompt has become something more: a reflection of how people understand intelligence, identity, and the increasingly thin line between code and character.