The perfect robot involves the perfect combination of software and hardware.

Achieving this is difficult, because the two aspects must work side-by-side in perfect harmony.

This time, Google is taking a significant leap in the intelligence of its robots by introducing Robotic Transformer 2 (RT-2), an advanced AI model.

A vision-language-action (VLA) model that builds on Google's earlier robotics work, RT-2 is designed to help robots recognize visual and language patterns more effectively. This lets them interpret instructions more accurately and deduce which objects best fulfill a specific request.

"In our paper, we introduce Robotic Transformer 2 (RT-2), a novel vision-language-action (VLA) model that learns from both web and robotics data, and translates this knowledge into generalised instructions for robotic control, while retaining web-scale capabilities," Google DeepMind said in a blog post.

In an experiment, the researchers tested a one-armed robot in a simulated kitchen-office scenario.

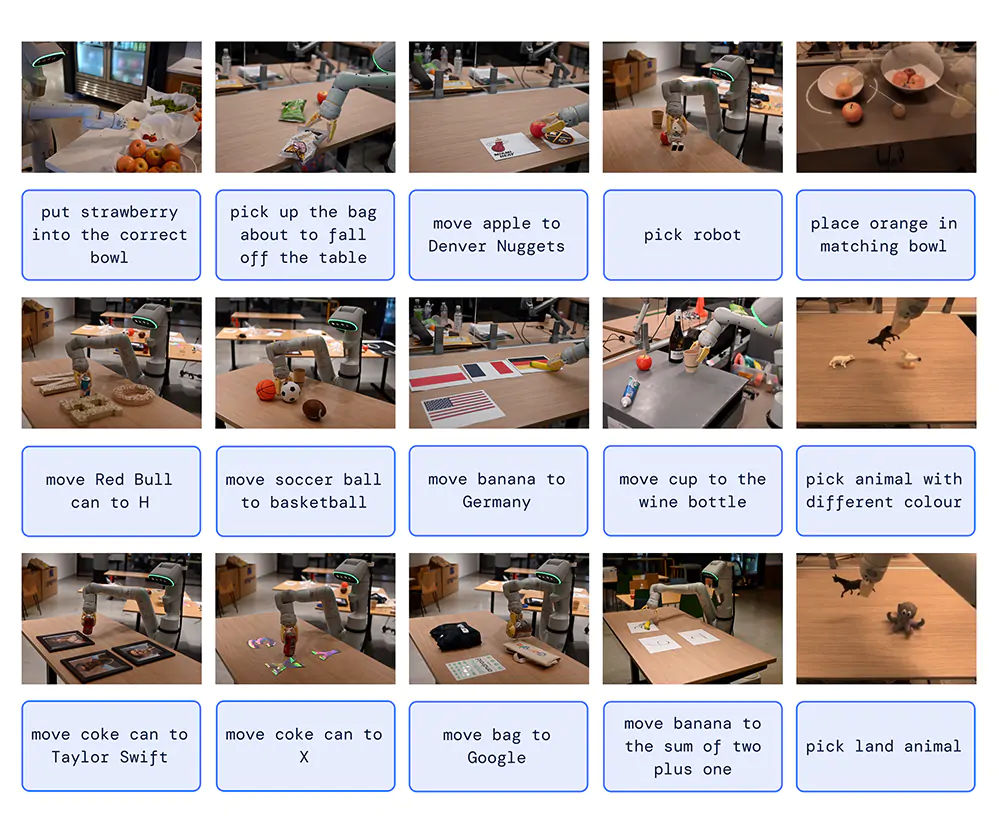

By incorporating chain-of-thought reasoning, RT-2 can perform multi-stage semantic reasoning, such as deciding which object could serve as an improvised hammer (it picked a rock) or which type of drink is best for a tired person (it chose a Red Bull).

It was also able to tell three plastic figurines apart, grabbing the dinosaur when told to choose an extinct animal.

Additionally, the researchers instructed the robot to move a Coke can to a picture of singer Taylor Swift.

To develop this model, Google trained RT-2 using a combination of web and robotics data, capitalizing on advancements in large language models like Bard, Google's proprietary language model.

It does so by translating the robot’s movements into a series of numbers — a process called tokenizing — and incorporating those tokens into the same training data as the language model.
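As a rough illustration of the tokenizing step described above, the sketch below turns a continuous arm command into a short string of integers by uniform binning, then maps it back. The bin count, the value range, the layout of the action vector, and every function name here are assumptions made for illustration; the article does not specify how RT-2 encodes movements.

```python
from typing import List

NUM_BINS = 256  # assumed number of discrete bins per action dimension


def tokenize_action(action: List[float], low: float = -1.0, high: float = 1.0) -> List[int]:
    """Map each continuous value in [low, high] to an integer token in [0, NUM_BINS - 1]."""
    tokens = []
    for value in action:
        clipped = min(max(value, low), high)       # keep the value inside the range
        fraction = (clipped - low) / (high - low)  # rescale to [0, 1]
        # The upper edge (fraction == 1.0) would land in bin NUM_BINS, so cap it.
        tokens.append(min(int(fraction * NUM_BINS), NUM_BINS - 1))
    return tokens


def detokenize_action(tokens: List[int], low: float = -1.0, high: float = 1.0) -> List[float]:
    """Map each token back to the center of its bin."""
    width = (high - low) / NUM_BINS
    return [low + (token + 0.5) * width for token in tokens]


def to_text(tokens: List[int]) -> str:
    """Render tokens as a space-separated string, the form a language model could read."""
    return " ".join(str(token) for token in tokens)


if __name__ == "__main__":
    # Hypothetical action: x, y, z displacement plus a gripper command.
    action = [0.25, -0.5, 0.0, 1.0]
    tokens = tokenize_action(action)
    print(to_text(tokens))
    print(detokenize_action(tokens))
```

Under this scheme a movement becomes just another short sequence of symbols, which is what allows it to sit in the same training data as ordinary text. The round trip is lossy: each recovered value is off by at most half a bin width.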

By combining its language data with robotic information, including knowledge of how robotic joints should move, the researchers showed that RT-2 can understand directions given in languages other than English.

This makes RT-2 a notable advance in the cross-lingual capabilities of AI-driven robots.

According to the researchers at Google DeepMind, RT-2 is the "first-of-its-kind" VLA model that uses data scraped from the internet to enable better robotic control through plain language commands.

Just as Bard or OpenAI's ChatGPT learns to guess which word should come next, the model can learn to guess how a robot’s arm should move to pick up a ball or throw an empty soda can into the recycling bin.

"In other words, this model can learn to speak robot," said Karol Hausman, a Google research scientist.

Computers have long been great at complex tasks like analysing data, but not so great at simple tasks like recognizing & moving objects. With RT-2, we’re bridging that gap by helping robots interpret & interact with the world and be more useful to people. https://t.co/GG2IXl1ZxN

— Demis Hassabis (@demishassabis) July 28, 2023

The ultimate goal of this project is to create general-purpose robots that can navigate human environments, similar to fictional robots like Disney/Pixar's WALL·E or Star Wars' C-3PO.

Prior to the advent of VLA models like RT-2, teaching robots required huge resources, including time-consuming individual programming for each specific task.

For example: move the arm a specific distance, rotate it until it meets resistance, raise it, and so on.

Robots would then practice the task again and again, with engineers tweaking the instructions each time until they got it right.
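To make the older, task-by-task style concrete, here is a minimal sketch of what such a hand-written routine might look like. `SimulatedArm`, its methods, and all the constants are hypothetical stand-ins invented for this example; they are not taken from any real robot API or from Google's code.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class SimulatedArm:
    """Toy stand-in for a robot arm (hypothetical, for illustration only)."""
    extension_cm: float = 0.0
    angle_deg: float = 0.0
    height_cm: float = 0.0
    obstacle_angle_deg: float = 45.0  # assumed angle at which the arm meets resistance
    log: List[str] = field(default_factory=list)

    def move_arm(self, distance_cm: float) -> None:
        """Extend the arm by a fixed distance."""
        self.extension_cm += distance_cm
        self.log.append(f"move {distance_cm} cm")

    def rotate_until_resistance(self, step_deg: float = 5.0) -> None:
        """Rotate in fixed steps until the simulated obstacle is reached."""
        if step_deg <= 0:
            raise ValueError("step_deg must be positive")
        while self.angle_deg < self.obstacle_angle_deg:
            self.angle_deg += step_deg
        self.log.append(f"rotate to {self.angle_deg} deg")

    def raise_arm(self, height_cm: float) -> None:
        """Lift the arm by a fixed height."""
        self.height_cm += height_cm
        self.log.append(f"raise {height_cm} cm")


def pick_up_can(arm: SimulatedArm, reach_cm: float = 30.0, lift_cm: float = 10.0) -> None:
    """One fixed routine for one task; the constants are what engineers would tune by hand."""
    arm.move_arm(reach_cm)
    arm.rotate_until_resistance()
    arm.raise_arm(lift_cm)


if __name__ == "__main__":
    arm = SimulatedArm()
    pick_up_can(arm)
    print(arm.log)
```

Every number in `pick_up_can` is tied to one object in one position. Move the can or swap it for something else, and the routine has to be rewritten and tuned again.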

As a result, trained robots could only do mechanical, repetitive tasks, such as factory work.

All this time, robots could not reliably manipulate objects they had never seen before, and they certainly could not make the logical leap of choosing a plastic dinosaur when told to choose an "extinct animal."

With RT-2, Google makes robots far smarter and gives them new powers of understanding and problem-solving.

The RT-2 model lets robots draw on a vast pool of information, allowing them to make informed inferences and decisions on the fly.

Google's earliest attempt to create more intelligent robots started in 2022, when it announced that it was integrating its large language model (LLM), PaLM, into robotics, culminating in the development of the PaLM-SayCan system.

This system united an LLM with physical robotics but lacked the ability to interpret images. Nevertheless, it helped lay the foundation for Google's current achievements.

"We started playing with these language models around two years ago, and then we realized that they have a lot of knowledge in them," said Karol Hausman.

"So we started connecting them to robots."

Nevertheless, the new robot is not without its imperfections.

For example, according to press reports, the robot struggled to identify the flavor of a can of LaCroix placed on the table in front of it, and on occasion described a piece of fruit only by the color white.

"We’ve had to reconsider our entire research program as a result of this change," said Vincent Vanhoucke, Google DeepMind’s head of robotics. "A lot of the things that we were working on before have been entirely invalidated."

While robots are still far from human-level dexterity and still fail at some of the most basic tasks, Google is using RT-2 to give them skills of reasoning and improvisation.

For now, the approach works only for certain, limited uses. Although it is still far too slow for labor-intensive work, Google's RT-2 represents a promising breakthrough.

Google currently has no plans to sell RT-2 robots or release them more widely, but its researchers believe these new language-equipped machines will eventually be useful for more than just tricks.

Robots like these, which have built-in language models, could be put into warehouses, used in medicine or even deployed as household assistants — folding laundry, unloading the dishwasher, picking up around the house, they said.

"This really opens up using robots in environments where people are," said Vanhoucke. "In office environments, in home environments, in all the places where there are a lot of physical tasks that need to be done."