When light is shone onto objects, they can be categorized as transparent, translucent, or opaque.

An object is transparent if it allows light to pass through it, like air, water, and clear glass. A translucent object, on the other hand, allows only part of the light to pass; frosted glass and some plastics are two good examples. Lastly, opaque objects are those that don't allow any light to pass through them. Materials such as wood, stone, and metals are opaque to visible light.

In general, an object that is in one of these states cannot be in another.

For example, an opaque object cannot become transparent, at least not in an instant.

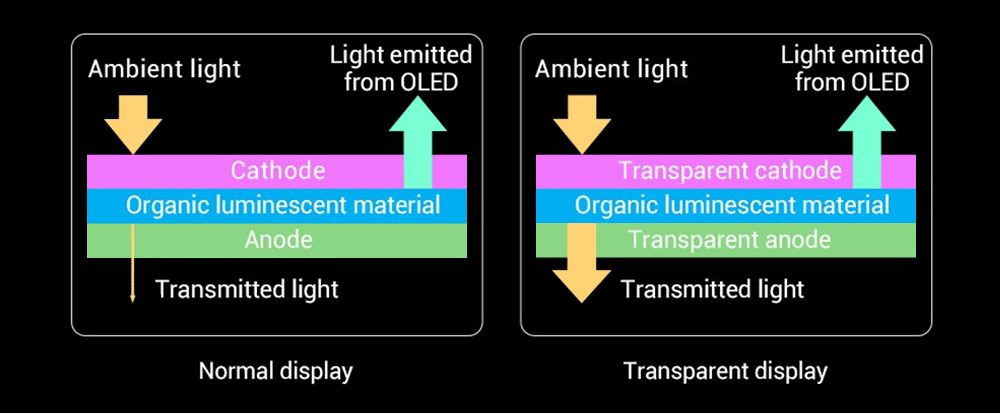

Take a smartphone screen, which is known to be opaque. Because it uses light-emitting diodes as pixels, anything inside the phone shouldn't be visible through the screen.

But when researchers managed to create in-screen fingerprint scanners, people started speculating that an in-screen camera would be the next breakthrough.

And yes, they were right.

Smartphone screens have grown larger, pushing up the screen-to-body ratio until the display occupies most of the front of the device.

With more emphasis on screen aesthetics and increasingly 'bezel-less' designs, phone manufacturers started to struggle with where to put their front-facing cameras. They needed to find a way to place those cameras without ruining the aesthetics of the phone.

Apple popularized the 'notch', a cutout that occupies part of the top of the screen. Most Android phone makers started experimenting with their own versions of the notch, and then with pop-up cameras, flip-up cameras, punch-hole cameras, and others.

Ever since the first of these designs hit the market, people have been yearning for the next evolution in smartphone design: a way to hide the cameras from view completely.

To do that, manufacturers had to put the cameras behind the screen.

To make that happen, the screen needs to be opaque under normal conditions and become transparent when the selfie camera is launched.

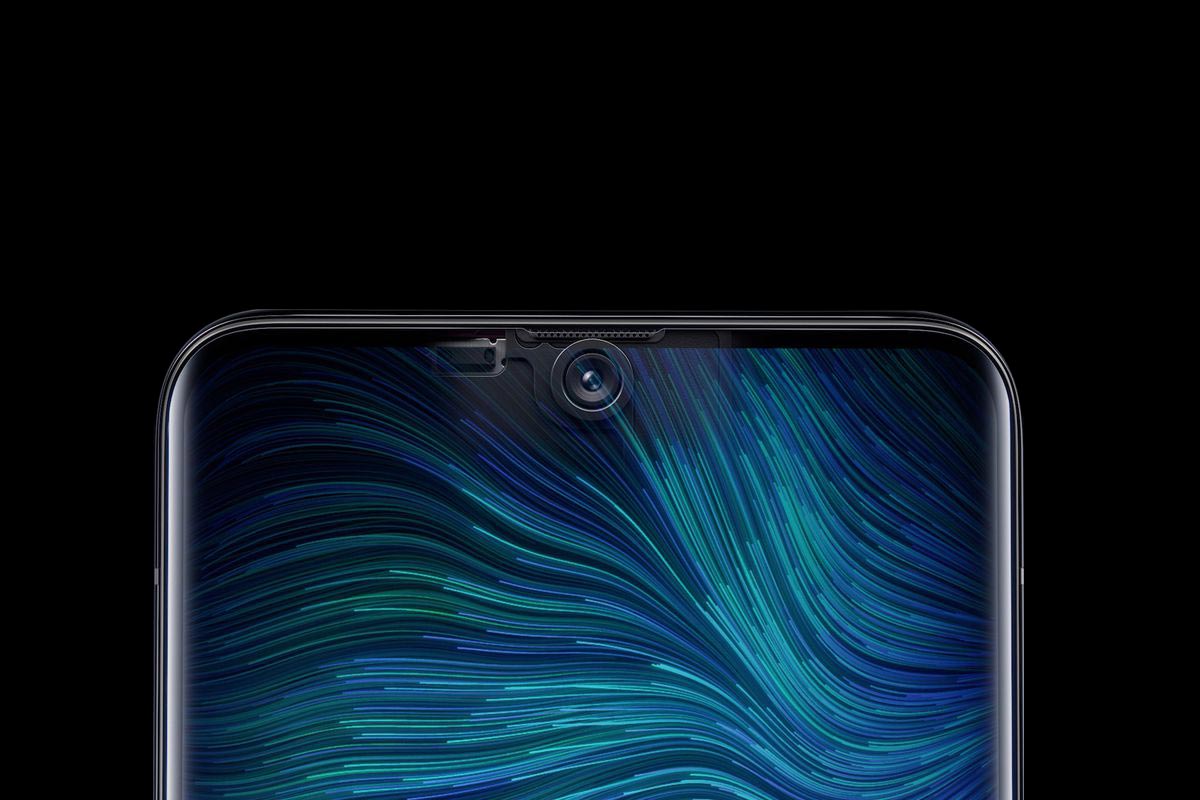

> For those seeking the perfect, notchless smartphone screen experience – prepare to be amazed.
>
> You are taking a very first look at our under-display selfie camera technology. RT! pic.twitter.com/FrqB6RiJaY
>
> OPPO (@oppo), June 3, 2019

One of the first to introduce in-screen camera technology was OPPO.

The Chinese phone manufacturer uses a custom transparent material that works with a redesigned pixel structure so that light can get through to the camera.

The area of the screen reserved for the camera still works with touch control, and OPPO said display quality won’t be compromised.

Xiaomi is also one of the very first to introduce its own in-screen camera technology.

According to Xiaomi, which is also a Chinese phone manufacturer, its technology involves a “special-low-reflective glass with high transmittance.”

Xiaomi said that the setup lets the area of the display above the camera become transparent so that light can enter for a selfie. Under normal conditions, the area is opaque, so content is displayed in full.

The company doubled the number of horizontal and vertical pixels in the screen circle above the hidden camera, allowing light to pass through the gap area of sub-pixels while having the same display pixel density as the rest of the smartphone screen.

The result is a functional camera tucked under a display area that has similar if not the same brightness, color gamut, and color accuracy as the whole display.

> Do you want a sneak peek at the future? Here you go...introducing you to Under-Display Camera technology! #Xiaomi #InnovationForEveryone pic.twitter.com/d2HL6FHkh1
>
> Xiaomi (@Xiaomi), June 3, 2019

Instantly switching an opaque object to transparent is like 'magic'.

But in technical terms, it involves obscuring the camera when it is not in use, so that it is 'disguised' within the handset’s display without ruining the edge-to-edge display effect, resulting in a far nicer finish.

In other words, technical wizardry has allowed the pixel density, brightness, and color accuracy in front of the under-display camera to be the same as on the rest of the screen when the phone is in its normal operating condition.

It should be noted that a smartphone screen cannot be made 100% transparent (clear). It will always retain at least some degree of translucency.

Any object placed between a sensor and the target object will obstruct some of the light and affect clarity.

This is why manufacturers use specially designed circuits that hide more components under the RGB sub-pixels, further increasing the light transmittance of the in-screen camera area.

The camera sensor also takes advantage of being behind the screen. Manufacturers can use larger sensors than those of other selfie cameras because they aren't restricted by the bezel size. And with a wider-aperture lens, manufacturers can counter the effect of this translucency.
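As a rough illustration of how a wider aperture can offset a translucent screen, the sketch below uses the standard photographic relation that the light reaching a sensor scales with 1/N², where N is the lens f-number. The function names and the 25% transmittance figure are hypothetical, chosen only for the example; they are not values published by OPPO or Xiaomi, and the model ignores sensor size, diffraction from the pixel grid, and software processing.

```python
import math


def relative_exposure(transmittance: float, f_number: float,
                      reference_f_number: float) -> float:
    """Light reaching an under-display sensor, relative to an uncovered
    camera using a lens with reference_f_number.

    transmittance is the fraction of light the screen lets through (0..1].
    Exposure scales with 1 / N^2, where N is the f-number.
    """
    if not 0.0 < transmittance <= 1.0:
        raise ValueError("transmittance must be in the range (0, 1]")
    if f_number <= 0 or reference_f_number <= 0:
        raise ValueError("f-numbers must be positive")
    return transmittance * (reference_f_number / f_number) ** 2


def f_number_to_compensate(transmittance: float,
                           reference_f_number: float) -> float:
    """f-number needed so that the under-display camera receives the same
    light as an uncovered camera at reference_f_number."""
    if not 0.0 < transmittance <= 1.0:
        raise ValueError("transmittance must be in the range (0, 1]")
    return reference_f_number * math.sqrt(transmittance)


# Hypothetical example: a screen that passes 25% of the incoming light,
# compared with an uncovered f/2.0 selfie camera.
needed = f_number_to_compensate(0.25, 2.0)
print(f"f-number needed: f/{needed:.2f}")
print(f"relative exposure at f/2.0: {relative_exposure(0.25, 2.0, 2.0):.2f}")
```

Under these assumed numbers, the lens would need to open up by two full stops to make up for the screen, which is why a wider aperture is usually combined with a larger sensor and image processing rather than used alone.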

Optimization algorithms are also used to help the camera match non-hidden cameras in quality.

It should also be noted that the camera app on these phones can show black borders when selfie mode is switched on. Besides helping users focus on what the camera sees, this is also intended to obscure and disguise the selfie camera while the pixels above it are switched from their opaque state.