In the ever-evolving landscape of AI, large language models (LLMs) have emerged as the cornerstone of next-generation digital assistants.

Since OpenAI introduced ChatGPT in late 2022, the tech world has witnessed a seismic shift in how conversational AI is perceived and utilized. Companies like Google followed with Gemini, Microsoft launched Copilot, and Anthropic introduced Claude — all intensifying the competition.

These advancements have set new benchmarks for AI capabilities, leaving companies like Apple grappling with a critical question: how does one innovate when the industry has already set the pace?

Apple’s voice assistant, Siri, once a pioneer in voice recognition, now lags behind its more advanced counterparts. Apple’s internal research reportedly found that Siri trails ChatGPT by approximately 25% in both accuracy and the range of questions it can handle.

This disparity has led to growing expectations from users and industry observers alike, who anticipate that Apple will leverage its vast ecosystem to deliver a more integrated and intuitive AI experience.

In response to these challenges, Apple has begun reconsidering its approach.

At first, Apple marketed its Apple Intelligence platform with ChatGPT-powered features to strengthen its AI offerings. However, the response was lukewarm at best. The technology proved neither particularly attractive nor sufficiently capable compared to its rivals.

Following a series of high-profile shortcomings around Siri's personalization and intelligence, the company finally acknowledged the gap.

Apple then considered building its own LLM to power Siri. But developing a state-of-the-art model from scratch requires immense effort, massive resources, and significant time, and Apple may not be willing to commit all of that at this stage.

Given these challenges, the company is now exploring the alternative of integrating third-party LLMs.

Its top candidates include OpenAI’s ChatGPT and Anthropic’s Claude, both of which Apple is considering embedding directly into Siri’s infrastructure.

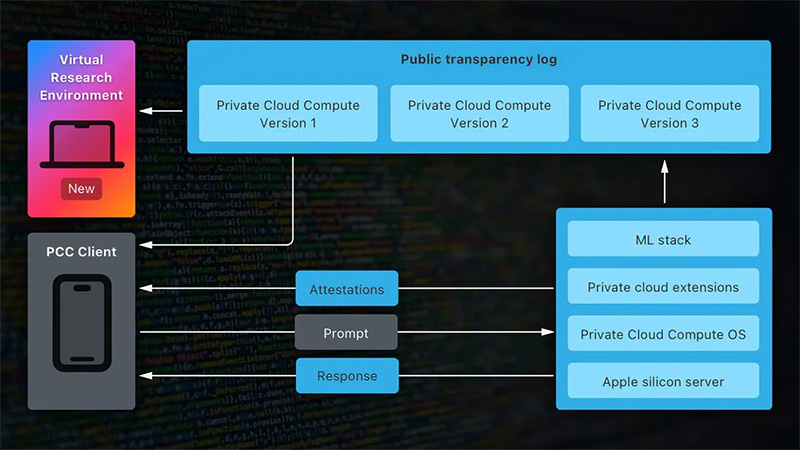

Reports suggest Apple has already met with both companies and has asked them to test and train their models on Apple’s Private Cloud Compute servers.

Early testing has indicated that Anthropic’s Claude may be the most promising candidate for powering Siri. As a result, Apple’s VP of Corporate Development, Adrian Perica, has reportedly begun talks with Anthropic about a potential partnership.

Interestingly, OpenAI had offered to train models for Apple long before Anthropic was in the picture, but Apple was still confident at the time that its in-house models would be sufficient. Anthropic, for its part, is reportedly asking for a multibillion-dollar annual fee that increases each year, which gave Apple pause. That price has reopened the door for OpenAI and perhaps other third parties.

Apple’s move toward integrating third-party AI represents a major shift from its longstanding preference for proprietary, closed development.

The shift marks a pragmatic recognition that, to keep up in the rapidly advancing AI race, Apple may need to rely on external innovations.

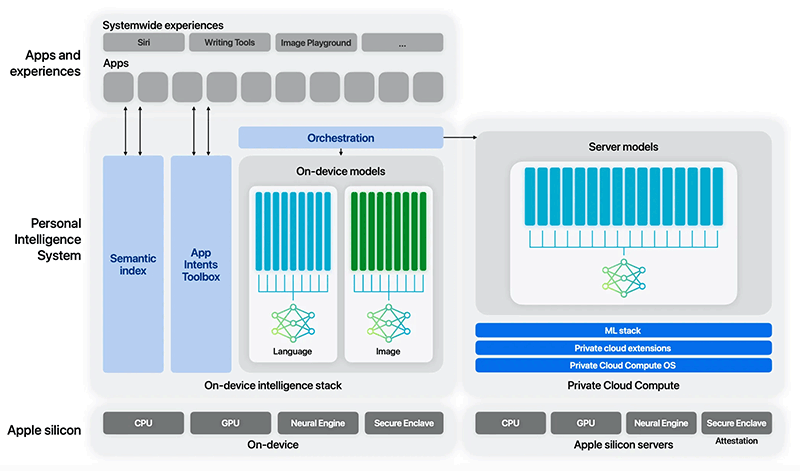

Still, Apple’s deep commitment to user privacy adds complexity. The company prefers on-device processing, which is computationally expensive and uses a lot of storage, along with strict control over user data. Most LLMs, by contrast, run on cloud-based infrastructure. This presents a unique challenge.

As a result, Apple is pushing to run any third-party models, like Claude or ChatGPT, on its own chips and servers.

By doing so, Apple believes it can maintain tighter control over performance and security, while continuing to honor its privacy-first philosophy.

Balancing these priorities — performance, privacy, and platform control — will be critical as Apple moves forward in enhancing Siri and defining its role in the future of AI.

As the AI race accelerates, Apple’s next move may determine not just the fate of Siri but also the company’s position in the broader tech landscape. The intersection of privacy, innovation, and user experience is being redrawn, and Apple must choose its path wisely.

Tech giants relying on third parties for high-quality AI models is nothing new.

For example, Samsung already uses Gemini in its smartphones, and Amazon’s Alexa voice assistant relies on Claude models.
