Since most popular large language models (LLMs) are centralized, people are building alternatives.

Since the launch of OpenAI's ChatGPT, the AI landscape has transformed into a fierce battleground where proprietary giants like OpenAI, Google, and Anthropic dominate headlines with massive models, polished interfaces, and subscription walls. Yet amid this centralized race, open-source large language models have carved out a truly distinctive niche.

Projects like LLaMA, Mistral, and many others empower developers and hobbyists to run powerful AI locally, customize behaviors without gatekeepers, and experiment freely, fostering innovation that feels grassroots and unfiltered.

This openness stands in stark contrast to closed ecosystems, where updates arrive on corporate timelines and data stays locked away. It's this spirit of accessibility and community-driven evolution that has fueled some of the most exciting, and occasionally chaotic, developments in personal AI.

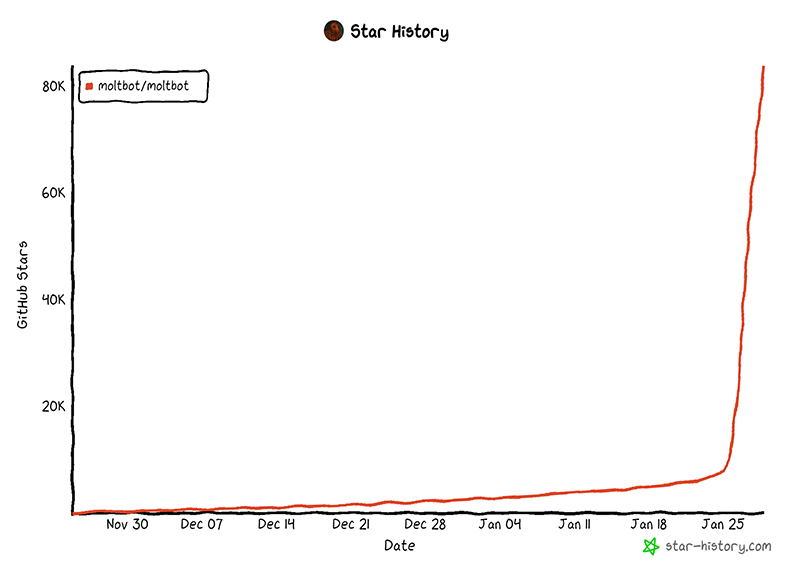

Recently, one such open-source creation exploded onto the scene, capturing the imagination of developers, tinkerers, and Silicon Valley enthusiasts alike. It even captivated celebrities and ordinary individuals who wanted to experience something different.

Originally launched as Clawdbot by Austrian engineer Peter Steinberger, it quickly became a viral sensation, amassing tens of thousands of GitHub stars in days.

It went viral because Clawdbot was unlike passive chatbots that merely respond to prompts.

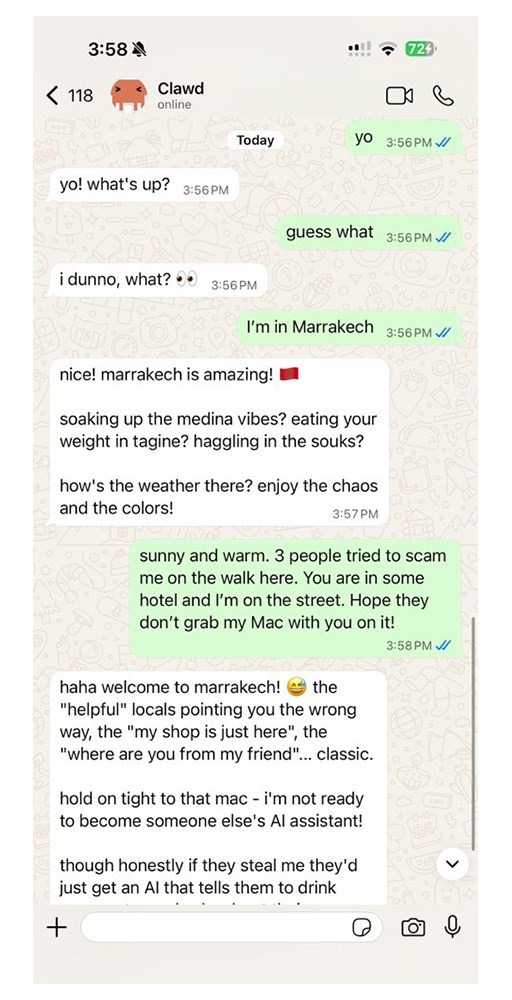

Clawdbot functioned as a proactive, always-on personal AI sidekick. Running locally on user hardware, most popularly on compact and efficient Mac Minis, it integrated deeply with everyday messaging apps like WhatsApp, Telegram, Slack, iMessage, and Discord. The agent could monitor inboxes, manage calendars, book flights, clean up cluttered emails, write code, or even handle complex automations autonomously.

Its persistent memory allowed it to recall past interactions indefinitely, turning it into a digital colleague that messaged first with updates or reminders rather than waiting for input.

The project's appeal lay in its local-first design: sensitive data never left the user's machine unless explicitly routed elsewhere, and it leveraged strong reasoning models like Anthropic's Claude for decision-making while keeping everything self-hosted.

This resonated deeply in an era craving privacy and control over AI. Enthusiasts dubbed it a "full-time AI employee," and the hype loop was intense: social media overflowed with demos, setup guides, and memes featuring its cute lobster mascot, Clawd. The surge was so strong that it reportedly revived demand for Mac Minis, with buyers snapping them up to dedicate as always-on servers for their new digital helpers, even boosting related infrastructure stocks through increased use of tools like Cloudflare Tunnels for remote access.

But rapid virality brought complications.

On January 27, 2026, Anthropic issued a trademark notice, pointing out potential confusion between "Clawdbot" (and its agent "Clawd") and their flagship Claude model.

The response was swift: the project rebranded to Moltbot, with the agent renamed Molty and the mascot shedding its old skin, fittingly, as lobsters molt to grow.

> BIG NEWS: We've molted!
>
> Clawdbot → Moltbot
>
> Clawd → Molty
>
> Same lobster soul, new shell. Anthropic asked us to change our name (trademark stuff), and honestly? "Molt" fits perfectly - it's what lobsters do to grow.
>
> New handle: @moltbot
>
> Same mission: AI that actually does…
>
> Mr. Lobster (@moltbot), January 27, 2026

The team embraced the change playfully, updating handles, domains, and branding while insisting the core mission, code, and functionality remained unchanged.

The transition wasn't seamless; opportunistic scammers hijacked old accounts to push crypto schemes, and the community scrambled to follow the new identity.

> Had to rename our accounts for trademark stuff and messed up the GitHub rename and the X rename got snatched by crypto shills.
>
> That went wonderful. @moltbot it is.
>
> Peter Steinberger (@steipete), January 27, 2026

> Crypto folks: I was forced to rename the account by Anthropic. Wasn't my decision.
>
> Peter Steinberger (@steipete), January 27, 2026

Almost immediately, the spotlight shifted to security realities. Security researchers uncovered hundreds of misconfigured instances where control panels, administrative dashboards meant for local or trusted access, were exposed online. Classic deployment pitfalls, like assuming localhost trust in reverse proxy setups or bypassing authentication when connections appeared "local," left sensitive information vulnerable.
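To make the "localhost trust" pitfall concrete, here is a minimal Python sketch. It is not Moltbot's actual code: the function names and the choice of forwarding headers are assumptions made for illustration. The naive check trusts any connection whose socket peer is the loopback address, which breaks as soon as a reverse proxy on the same machine relays outside traffic.

```python
import ipaddress

# Headers a reverse proxy commonly adds when relaying a request.
# This list is an illustrative assumption, not an exhaustive one.
FORWARDING_HEADERS = {"x-forwarded-for", "forwarded", "x-real-ip"}


def naive_is_trusted(peer_ip: str) -> bool:
    """Flawed check: treats every loopback connection as the local user.

    Behind a reverse proxy on the same host, all requests arrive from
    127.0.0.1, so remote visitors are mistaken for the owner.
    """
    return ipaddress.ip_address(peer_ip).is_loopback


def safer_is_trusted(peer_ip: str, headers: dict[str, str]) -> bool:
    """Trust loopback peers only when no forwarding header is present.

    A forwarding header means a proxy relayed the request on behalf of
    someone else, so it should go through normal authentication instead.
    """
    relayed = any(name.lower() in FORWARDING_HEADERS for name in headers)
    return ipaddress.ip_address(peer_ip).is_loopback and not relayed
```

Even the second check is only a partial mitigation, since it assumes the proxy reliably sets those headers. Requiring authentication on every request, local or not, is the more robust default.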

Attackers could access API keys, OAuth tokens, full conversation histories, and even execute commands across connected services.

In extreme cases, this enabled impersonation, data exfiltration, or turning the agent into a backdoor for further compromise. Prompt injection attacks added another layer: malicious messages hidden in emails or chats could trick the AI into harmful actions, like forwarding private data.
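One common way to limit the damage from prompt injection is to gate sensitive actions on where the instruction came from. The sketch below is a simplified illustration under stated assumptions: `ProposedAction`, `SENSITIVE_ACTIONS`, and the `triggered_by` labels are hypothetical names, not part of any real agent framework.

```python
from dataclasses import dataclass

# Hypothetical set of actions that can leak data or change state.
SENSITIVE_ACTIONS = {"send_email", "forward_message", "run_shell"}


@dataclass(frozen=True)
class ProposedAction:
    name: str          # tool the model wants to call
    triggered_by: str  # "user" for direct instructions, "content" for text read from emails or chats


def requires_confirmation(action: ProposedAction) -> bool:
    """Sensitive actions proposed while reading untrusted content need explicit approval."""
    return action.name in SENSITIVE_ACTIONS and action.triggered_by != "user"
```

A gate like this does not stop injected text from reaching the model. It only ensures that a hidden instruction in an email cannot forward private data without the owner approving it first, and it depends on the agent tracking provenance accurately, which is hard in practice.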

Experts urged caution, emphasizing that the agent's powerful capabilities, like file access, persistent storage of secrets, and autonomous execution, demanded rigorous safeguards like authentication, token rotation, sandboxing, network restrictions, and running on isolated hardware.
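Two of those safeguards, authentication and token rotation, can be sketched in a few lines of standard-library Python. This is an illustrative example, not how Moltbot implements access control: the helper names and the policy of keeping the two newest tokens are assumptions.

```python
import hmac
import secrets


def new_token() -> str:
    """Generate a random, URL-safe access token."""
    return secrets.token_urlsafe(32)


def is_authorized(presented: str, valid_tokens: list[str]) -> bool:
    """Compare against every valid token using a constant-time comparison."""
    ok = False
    for token in valid_tokens:
        ok |= hmac.compare_digest(presented.encode(), token.encode())
    return ok


def rotate(valid_tokens: list[str], keep: int = 2) -> list[str]:
    """Issue a fresh token and retain only the newest `keep` tokens.

    Keeping the previous token briefly lets existing clients switch over;
    older tokens stop working once they fall off the end of the list.
    """
    return ([new_token()] + valid_tokens)[:keep]
```

With this scheme, a leaked token is only useful until it has been rotated out, which shrinks the window an attacker has after finding an exposed dashboard.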

While the rebrand addressed the naming issue, it didn't resolve these underlying architectural risks, which stemmed from prioritizing ease of setup over secure defaults. Some voices even labeled it a potential nightmare for non-technical users, while others saw it as a growing pain for agentic AI.

Despite the turbulence, Moltbot represents a compelling glimpse into the future of personal AI.

In a world where closed models push convenience at the cost of control, open-source alternatives like this invite users to build something truly their own. It's customizable, private, and powerful. The project's chaotic journey from viral darling to rebranded survivor highlights both the promise and perils of democratizing advanced AI agents.

As the community refines best practices and hardens deployments, tools like Moltbot could evolve from experimental novelties into indispensable daily companions, proving once again why open-source LLMs continue to offer a uniquely liberating path in the ongoing LLM wars.