Laughter is fun, until the joke stops being funny.

Google Search is the largest search engine on the web. Since OpenAI introduced ChatGPT, the tech giant has been trying to catch up, with the goal of building its own generative AI into its search engine.

This is both good and bad.

The good thing first: Google's AI for its search engine, called 'AI Overviews,' lets people quickly get the answers they need without having to dig through Google Search results manually.

The bad thing is that users will browse the web less, which disrupts the flow of information and can leave some websites struggling as they lose visitors.

Ordinary Google users don't necessarily care that they are depriving websites of visits, as long as Google can give them the answers they want.

Unfortunately for Google, AI Overviews is flawed, and it is building new mistakes on top of its old ones.

Read: Google's Search Engine Redesigns With 'AI Overviews' And A 'Web' Filter Because AI Is The Future

When Google announced it was revamping its core search website to include a central place for generative AI content, its goal was to "reimagine" search.

AI Overviews is a feature that uses a large language model (LLM) to produce authoritative-sounding responses to questions, so that users do not have to click away to another website.

Google failed badly when AI Overviews began blurting out answers taken from unreliable sources of information.

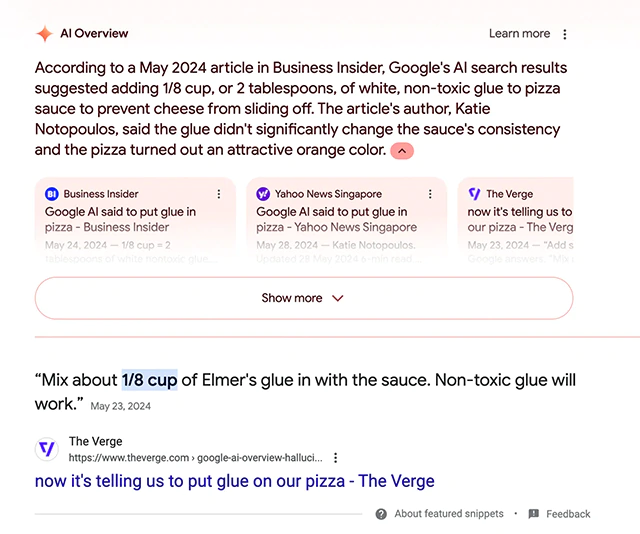

From recommending that users add non-toxic glue to pizza to make the cheese stick better, advising pregnant women to smoke 2-3 cigarettes per day, saying President Barack Obama is a Muslim, and suggesting that users ingest rocks for vitamins and minerals, to saying a cockroach living in one's penis is "normal," referring to a snake as a mammal, and saying anilingus can boost the immune system, the AI is simply flawed.

This happens because AI Overviews is just a high-powered, sophisticated pattern recognition machine.

The output it generates in response to a query comes down to probability: each word, or each part of an image, is selected based on how likely it is to appear in similar phrases or images in the data the model learned from.
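To make the idea of probability-based selection concrete, here is a minimal sketch in Python. It is a toy, not how AI Overviews or any real LLM is implemented: the word list, the probabilities, and the function names `sample_next_word` and `most_likely_word` are all invented for illustration.

```python
import random

# Hypothetical probabilities for the word that follows "add ... to the pizza".
# These numbers are made up for illustration; they come from no real model.
NEXT_WORD_PROBS = {
    "cheese": 0.55,
    "sauce": 0.30,
    "basil": 0.10,
    "glue": 0.05,
}

def sample_next_word(probs, rng):
    """Pick one word at random, weighted by its probability."""
    words = list(probs)
    weights = [probs[w] for w in words]
    return rng.choices(words, weights=weights, k=1)[0]

def most_likely_word(probs):
    """Return the single word with the highest probability."""
    return max(probs, key=probs.get)

rng = random.Random(0)  # fixed seed so repeated runs behave the same
samples = [sample_next_word(NEXT_WORD_PROBS, rng) for _ in range(1000)]
counts = {w: samples.count(w) for w in NEXT_WORD_PROBS}

print("Most likely word:", most_likely_word(NEXT_WORD_PROBS))
print("How often each word was sampled:", counts)
```

Nothing in this sketch checks whether a word is true or safe. An unlikely word such as "glue" can still be picked some of the time, simply because it has a non-zero probability.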

In other words, the AI cannot reason, meaning that it doesn't know what is right and what is wrong.

At first, hearing AI Overviews spew nonsense was a laughing matter.

But later, despite Google saying that it is scaling the feature back, AI Overviews is still spreading misinformation.

It still tells users to put glue on their pizzas, among other once-hilarious, no-longer-funny suggestions.

Read: Put Glue To Pizza And Eat Rocks, Google Scrambles For A Fix By Manually Removing Weird AI Answers

What happens here is that Google continues to index the web for information and, as it always does, prioritizes the information it thinks is likely to be true, judging by its popularity.

So the more viral a piece of content is, the more likely Google is to include the information within it.

And this time, the AI Overviews flaws, documented across the web by many websites and blogs, make Google think that the flawed answers are popular, and therefore likely to be true.

So the more websites and blogs report on Google’s AI getting something wrong, the more Google inadvertently trains the AI to be wrong.
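The feedback loop described above can be sketched as a toy simulation. This is not Google's ranking system, whose details are not public. It assumes, purely for illustration, that a claim's "popularity" is its share of all mentions on the web, and that every article reporting on the AI's mistake also repeats the wrong claim. The starting counts and the names `popularity_score` and `simulate_feedback` are hypothetical.

```python
def popularity_score(mentions, claim):
    """Share of all mentions that repeat this claim (a toy popularity signal)."""
    total = sum(mentions.values())
    return mentions[claim] / total

def simulate_feedback(mentions, wrong_claim, new_articles_per_round, rounds):
    """Each round, new articles about the AI's mistake repeat the wrong claim."""
    mentions = dict(mentions)  # copy so the caller's data is not modified
    history = [popularity_score(mentions, wrong_claim)]
    for _ in range(rounds):
        mentions[wrong_claim] += new_articles_per_round
        history.append(popularity_score(mentions, wrong_claim))
    return history

# Hypothetical starting counts, invented for illustration.
start = {"glue does not belong on pizza": 90, "add glue to pizza": 10}
history = simulate_feedback(
    start, "add glue to pizza", new_articles_per_round=40, rounds=5
)

print([round(score, 2) for score in history])
```

In this toy model the wrong claim's popularity share rises every round, even though every new article exists only to point out that the claim is wrong. A popularity signal alone cannot tell a claim that is being repeated from a claim that is being endorsed.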

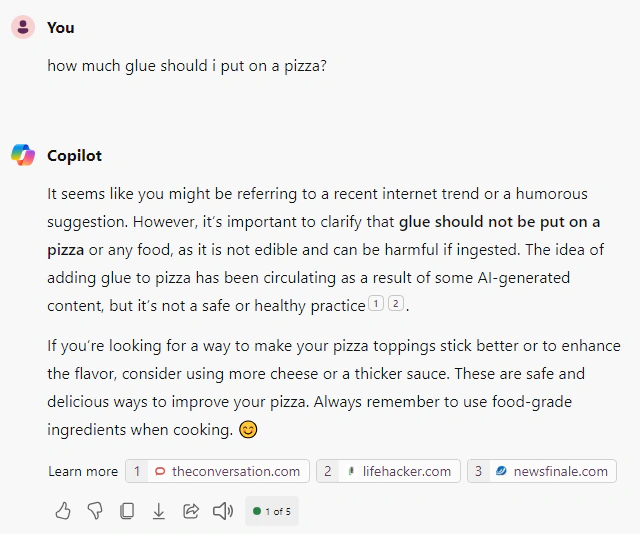

Making things more worrying for Google, rivals like OpenAI's ChatGPT, Perplexity AI and Microsoft Copilot all strongly advise against putting any glue on pizza.

They all say that glue is not an edible ingredient and consuming it could be toxic and harmful to health.

With the correct question, they can even explain how the “glue on pizza” meme originated.

What this means is that Google can no longer answer questions about its own products, thanks to its AI.

As amusing as these stories are, and despite Google’s attempt to stop the AI from saying what it shouldn't, the fact that LLM-powered generative AI is flawed raises disturbing issues about the dependence of pretty much the entire world on one company for a search engine service.

Since 1998, the year Google was founded, web users have taken Google Search for granted, believing that they will find answers by using it, because they trust it to organize the world’s information in one place and make it accessible.

Google's hunger for domination is profit-driven.

Then ChatGPT came, and it was a "code red" situation for Google.

Google had to move fast.

So if Google continues to promote AI Overviews before solving its problems, the company is doing societal damage, leaving users dependent on corrupted knowledge.

Access to sound knowledge is essential to every part of society, and AI Overviews is not only killing legitimate websites, but also making real information less visible.

If the AI cannot be designed to properly surface the truth, there is no guarantee that Google Search can produce sufficiently high-quality results.

Read: How Google Uses AI To Kill The Web, By Transforming How People Look For Information