Competition among AI developers is heating up after OpenAI announced the release of two new models.

Since the debut of ChatGPT in late 2022, companies across the tech industry have been racing to develop increasingly powerful AI systems capable of writing code, analyzing data, and supporting complex knowledge work. What began as simple conversational AI has rapidly evolved into a broader ecosystem that includes advanced reasoning systems, coding assistants, and autonomous agents able to interact with tools and software environments.

This rapid progress in large language models (LLMs) has transformed the AI space into a fiercely competitive arena.

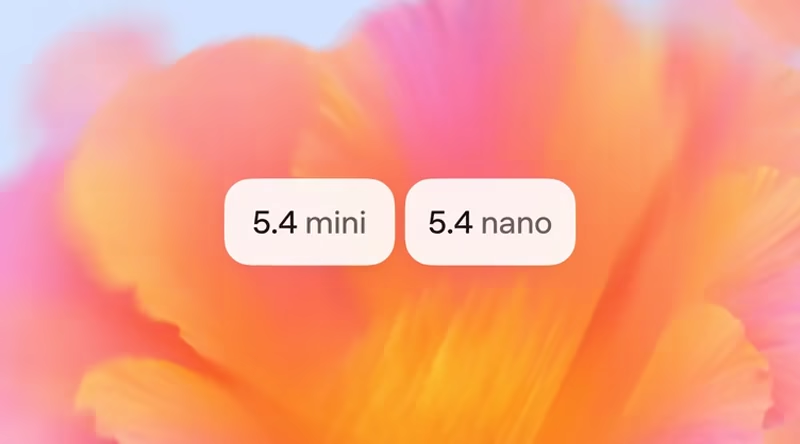

Amid this momentum, OpenAI continues refining its GPT series, now with the announcement of 'GPT-5.4 mini' and 'GPT-5.4 nano.'

These smaller variants are intended for situations where low latency and reduced costs matter more than maximum intelligence, particularly in coding tools, agent systems, and high-volume processing.

The announcement builds on the rollout of GPT-5.4 Thinking earlier in the month.

We’re introducing GPT-5.4 mini and nano, our most capable small models yet.

GPT-5.4 mini is more than 2x faster than GPT-5 mini. Optimized for coding, computer use, multimodal understanding, and subagents.

For lighter-weight tasks, GPT-5.4 nano is our smallest and cheapest… pic.twitter.com/cdp5HWtM2M

— OpenAI Developers (@OpenAIDevs) March 17, 2026

GPT-5.4 mini is the more capable of the two new models.

It runs more than twice as fast as the previous GPT-5 mini while showing measurable gains in coding, step-by-step reasoning, multimodal tasks that combine text and images, and tool integration including function calling and web or file searches. Benchmarks indicate it approaches the performance of the full GPT-5.4 on several evaluations, reaching 54.4% on the public SWE-Bench Pro test for software engineering tasks compared with 57.7% for the larger model and 45.7% for the prior mini.
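To put the SWE-Bench Pro figures in perspective, the short calculation below works out how much of the gap between the prior mini and the full model the new mini closes. It uses only the three scores quoted above; the function and constant names are illustrative, not part of any OpenAI tooling.

```python
def gap_closed(previous: float, new: float, flagship: float) -> float:
    """Fraction of the previous-to-flagship gap that the new model closes."""
    if flagship == previous:
        raise ValueError("flagship and previous scores are identical; no gap to close")
    return (new - previous) / (flagship - previous)


# SWE-Bench Pro scores quoted in the article (percent).
GPT_5_MINI = 45.7
GPT_5_4_MINI = 54.4
GPT_5_4 = 57.7

share = gap_closed(GPT_5_MINI, GPT_5_4_MINI, GPT_5_4)
print(f"Gap closed on SWE-Bench Pro: {share:.1%}")
```

On these numbers, the new mini gains 8.7 points of a 12-point gap, or roughly 72.5% of the distance to the full model on this one benchmark.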

Similar improvements appear on Terminal-Bench, GPQA Diamond, and OSWorld-Verified, the last of which measures real-world computer interaction through screenshot analysis.

"GPT‑5.4 mini significantly improves over GPT‑5 mini across coding, reasoning, multimodal understanding, and tool use, while running more than 2x faster. It also approaches the performance of the larger GPT‑5.4 model on several evaluations, including SWE-Bench Pro and OSWorld-Verified," explained OpenAI.

In ChatGPT, GPT‑5.4 mini is available to Free and Go users via the "Thinking" feature in the + menu. For all other users, GPT‑5.4 mini is available as a rate limit fallback for GPT‑5.4 Thinking.

The model supports a 400,000-token context window in the API and handles computer-use scenarios effectively by interpreting on-screen interfaces. It is available immediately through the developer API, the Codex coding environment, and inside ChatGPT, where Free and Go users can select it via the Thinking feature and all other users receive it as a fallback when they hit rate limits on GPT-5.4 Thinking.

GPT-5.4 mini approaches the performance of the larger GPT-5.4 model on several evaluations, including SWE-Bench Pro and OSWorld-Verified. https://t.co/DqTjjZ835S

— OpenAI Developers (@OpenAIDevs) March 17, 2026

In Codex specifically, the mini version consumes only about 30% of the quota allocated to GPT-5.4, allowing developers to route simpler subtasks to it at roughly one-third the cost while reserving the flagship model for planning and coordination.

This setup supports subagent architectures, where a larger model outlines a project and one or more mini instances execute narrower steps in parallel, such as searching codebases or reviewing documents.
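A minimal sketch of that planner-and-workers pattern might look like the following. It makes no real API calls: `call_model` is a hypothetical stand-in that only echoes its inputs, the model labels are assumed rather than confirmed API identifiers, and the planner returns a fixed list of subtasks so the example stays runnable.

```python
from concurrent.futures import ThreadPoolExecutor

# Assumed model labels for illustration; check the provider's docs for real identifiers.
PLANNER_MODEL = "gpt-5.4"
WORKER_MODEL = "gpt-5.4-mini"


def call_model(model: str, prompt: str) -> str:
    """Hypothetical stand-in for an API call; it only echoes its inputs."""
    return f"[{model}] {prompt}"


def plan_subtasks(project: str) -> list[str]:
    """Stub planner. A real system would parse the planner model's outline
    into subtasks; here the list is fixed so the sketch stays runnable."""
    call_model(PLANNER_MODEL, f"Outline the work for: {project}")
    return [
        f"Search the codebase for code relevant to: {project}",
        f"Review the documents related to: {project}",
    ]


def run_project(project: str, max_workers: int = 4) -> list[str]:
    """Plan with the larger model, then run the subtasks in parallel on the mini."""
    subtasks = plan_subtasks(project)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() returns results in the same order as the subtasks.
        return list(pool.map(lambda task: call_model(WORKER_MODEL, task), subtasks))


if __name__ == "__main__":
    for line in run_project("migrate the login flow"):
        print(line)
```

Threads suit this sketch because the workers would spend most of their time waiting on network responses; a production system would also need error handling and retries, which are omitted here.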

API pricing for GPT-5.4 mini stands at 75 cents per million input tokens and $4.50 per million output tokens.

"GPT‑5.4 nano is the smallest, cheapest version of GPT‑5.4 for tasks where speed and cost matter most. It is also a significant upgrade over GPT‑5 nano. We recommend it for classification, data extraction, ranking, and coding subagents that handle simpler supporting tasks," explained OpenAI.

This model fills the lower end of the spectrum as the smallest and least expensive member of the GPT-5.4 family. It is designed for lightweight, repetitive work such as data classification, information extraction, ranking items, or basic coding support tasks.

Its scores trail those of the mini on complex benchmarks (52.4% on SWE-Bench Pro and 46.3% on Terminal-Bench, for example), but it still outperforms the earlier GPT-5 nano and remains sufficient for high-throughput applications.

Tool use and function calling are supported in the API, though the model is not offered in ChatGPT or Codex. Its pricing is set at 20 cents per million input tokens and $1.25 per million output tokens, making it practical for volume-driven automation.
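To see what the per-token prices mean for a volume workload, the sketch below estimates cost from token counts using the mini and nano prices quoted in this article. The dictionary keys are labels for this example rather than confirmed API identifiers, and the workload size is hypothetical.

```python
# Prices in US dollars per million tokens, as quoted in the article.
# The dictionary keys are labels for this sketch, not confirmed API identifiers.
PRICES = {
    "gpt-5.4-mini": {"input": 0.75, "output": 4.50},
    "gpt-5.4-nano": {"input": 0.20, "output": 1.25},
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimated cost in dollars for a given token volume."""
    price = PRICES[model]
    return (input_tokens * price["input"] + output_tokens * price["output"]) / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: 10 million input tokens and 2 million output tokens.
    for model in PRICES:
        print(f"{model}: ${estimate_cost(model, 10_000_000, 2_000_000):.2f}")
```

For that hypothetical workload the estimate comes to $16.50 on mini and $4.50 on nano, before any discounts or other billing details that the article does not cover.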

GPT-5.4 mini is available today in the API, Codex, and ChatGPT.

In the API, it has a 400k context window. In Codex, it uses only 30% of the GPT-5.4 quota, letting you handle simpler coding tasks for about one-third of the cost.

GPT-5.4 nano is only available in the API.

— OpenAI Developers (@OpenAIDevs) March 17, 2026

Together, the releases reflect OpenAI’s continued emphasis on offering a tiered selection of models rather than relying solely on the most powerful version for every use case.

Coverage from technology outlets notes that organizations working in document-heavy fields and productivity software have begun testing the mini for cost savings on routine operations while maintaining acceptable accuracy. Some developer commentary on social platforms has highlighted the pricing relative to older mini models and a preference among certain users for legacy options like GPT-4o, yet the new variants provide clear technical trade-offs in speed and efficiency for targeted workflows.

As the GPT-5 lineage matures, these additions give builders more flexibility in matching model size to specific requirements without defaulting to the largest available option.