Google is reportedly using machine-learning technology to help design its next-generation computer chips.

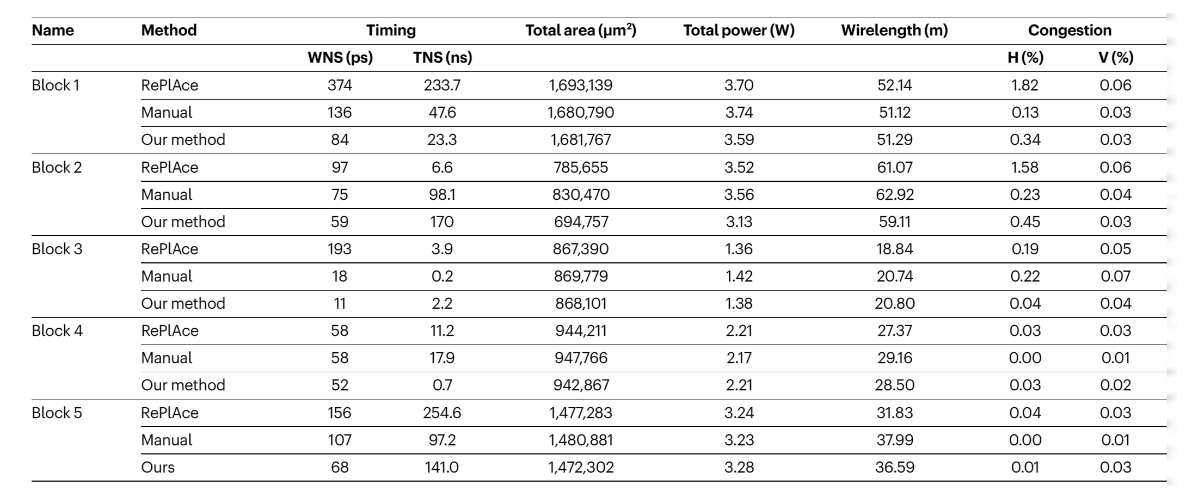

Google relies on AI to design its machine-learning chips because, according to its engineers, the algorithm’s designs are “comparable or superior” to those created by humans in all key metrics, including power consumption, performance and chip area. They can also be generated much faster.

Whereas a team of human engineers needs a few months to create a new design, the AI-powered algorithms can finish the job in under six hours.

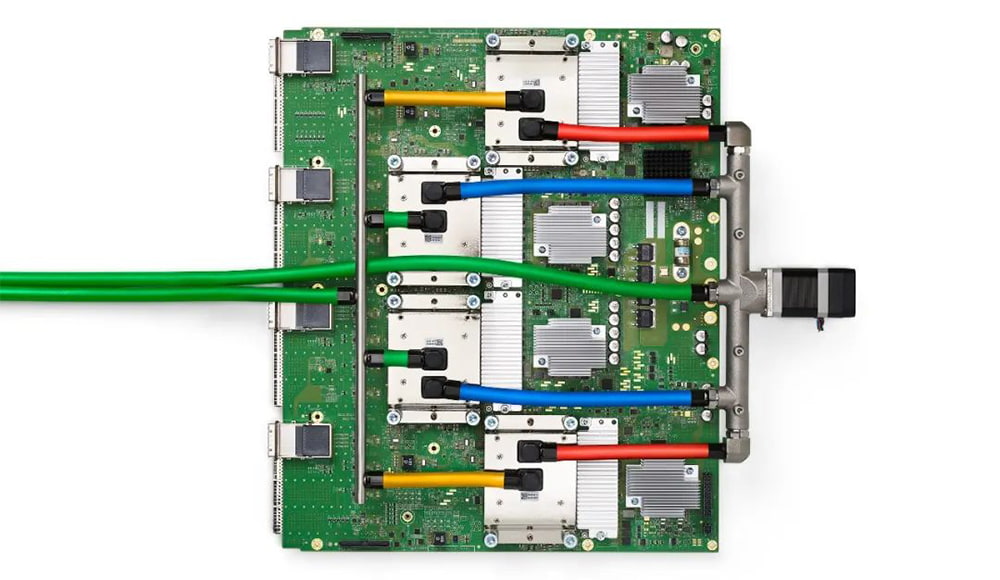

Google is said to have been working on using AI to create new chips for years, but only now, in a paper published in the journal Nature, has the initiative been described as being applied to a commercial product: Google's own TPU (Tensor Processing Unit) chips, which are optimized for AI computation.

“Our method has been used in production to design the next generation of Google TPU,” wrote the authors of the paper, led by Google’s head of ML for Systems, Azalia Mirhoseini.

Put another way, Google is using AI to help advance AI.

The work involves a task called 'floorplanning': finding the optimal layout on a silicon die for a chip's sub-systems. These are components like CPUs, GPUs, and memory cores, which are connected together using tens of kilometers of minuscule wiring.
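To make the idea of floorplanning concrete, here is a minimal sketch in Python. It is not Google's method, which the paper describes as a learning-based approach; it simply tries every arrangement of four blocks on a tiny 2x2 grid and keeps the one with the shortest total wiring. The block names, the connections between them, the grid, and the wirelength measure (the half-perimeter of each connection's bounding box, a common proxy for wire length) are all assumptions chosen for illustration.

```python
from itertools import permutations

# Hypothetical blocks, connections ("nets") and grid slots, for illustration only.
BLOCKS = ["cpu", "gpu", "mem0", "mem1"]
NETS = [("cpu", "mem0"), ("gpu", "mem1"), ("cpu", "gpu")]
SLOTS = [(0, 0), (1, 0), (0, 1), (1, 1)]  # a 2x2 grid of candidate positions


def wirelength(placement, nets):
    """Sum the half-perimeter of each net's bounding box."""
    total = 0
    for net in nets:
        xs = [placement[block][0] for block in net]
        ys = [placement[block][1] for block in net]
        total += (max(xs) - min(xs)) + (max(ys) - min(ys))
    return total


def best_placement(blocks, slots, nets):
    """Exhaustively try every assignment of blocks to slots."""
    best = None
    for perm in permutations(slots, len(blocks)):
        placement = dict(zip(blocks, perm))
        cost = wirelength(placement, nets)
        if best is None or cost < best[0]:
            best = (cost, placement)
    return best


cost, placement = best_placement(BLOCKS, SLOTS, NETS)
print(cost, placement)
```

Exhaustive search works here only because there are 24 possible arrangements. The number of arrangements grows factorially with the number of blocks, which is why real chips need human expertise or smarter search.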

Usually, the job is done by human designers working with computer tools. But Google finds that AI-powered algorithms can work well in this nanometer-sized environment.

Previously, Google has shown that AI can outperform humans in games like chess and Go.

Google's engineers note that floorplanning is no different.

Instead of a game board, the AI is put to work on a silicon die. And instead of game pieces like knights or rooks, the AI deals with components like CPUs and GPUs. The task, then, is simply to find each board’s “win conditions.”

In chess, the goal is a checkmate. In chip design, it’s computational efficiency.

In the paper, Google’s engineers note that the work has “major implications” for the overall chip industry.

This is because computers can greatly accelerate the chip design process, which should allow companies to more quickly explore the possible architecture space for upcoming designs and more easily customize chips for specific workloads.

An editorial in Nature calls this research an “important achievement.”

"Our method was used to design the next generation of Google’s artificial intelligence (AI) accelerators, and has the potential to save thousands of hours of human effort for each new generation," the paper said.

"Finally, we believe that more powerful AI-designed hardware will fuel advances in AI, creating a symbiotic relationship between the two fields."

What's more, this kind of work could help offset the forecasted end of Moore’s Law, the observation, first made by Gordon Moore in 1965 and revised in 1975, that the number of transistors on a chip doubles roughly every two years.

Using AI to design computer chips for AI to run on won’t necessarily solve the physical challenges of squeezing more and more transistors onto chips, or invalidate Moore's Law, but it could help find other paths to increasing performance at the same rate.
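As a rough illustration of what doubling every two years implies, the rule can be written as a formula. The symbols here are our own notation, not the paper's: $N_0$ is the transistor count today and $t$ is the number of years from now.

$$
N(t) = N_0 \cdot 2^{t/2}
$$

After ten years, for example, $N(10) = N_0 \cdot 2^{5} = 32\,N_0$, a 32-fold increase.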
