As devices grow more capable thanks to increasingly powerful hardware and more reliable internet connections, smartphones have become the powerful computers people have always dreamed of.

In the modern era of computing and the internet, people can do practically anything with their smartphones.

That ranges from taking pictures and videos to doing work and collaborative tasks, using social media, sending emails and instant messages, browsing the web, reading news, live streaming, enjoying entertainment, and lots more.

There are also disadvantages, and among them is the decreasing privacy of users.

With modern devices connected to the internet 24/7, data can flow behind people's backs. Not many people know which of their data is being analyzed, shared, or sent.

This time, Apple has announced a plan to scan every single picture its iPhone users have.

The tech giant wants to do that in the U.S. to detect Child Sexual Abuse Material (CSAM).

As part of this initiative, the company is partnering with the government to make some changes to iCloud, iMessage, Siri, and Search so that it can scan files for CSAM.

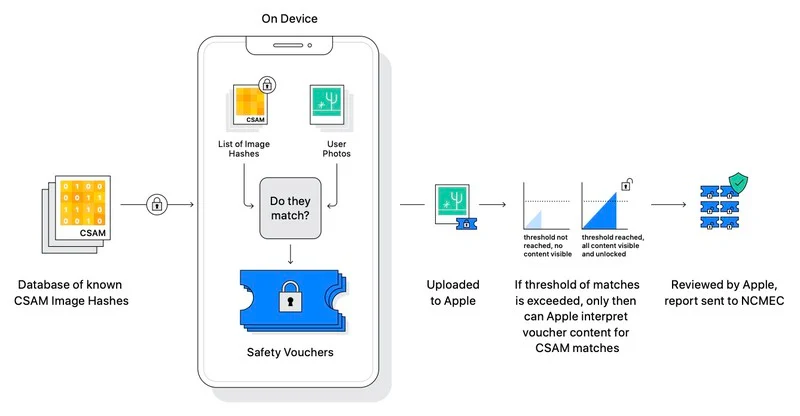

Apple plans to do this by matching users' images against fingerprinted images provided by the National Center for Missing and Exploited Children (NCMEC).

Apple says that before an image is sent to iCloud storage, its algorithm will check it against known CSAM hashes.

Apple can do this by scanning images that have already been sent to its iCloud storage, and it plans to perform these checks on users' devices as well.
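To make the idea of checking an image against known hashes more concrete, here is a minimal sketch in Python. It is not Apple's implementation: SHA-256 is swapped in as a simple stand-in fingerprint that only matches byte-identical files, and the names `KNOWN_HASHES`, `fingerprint`, and `check_before_upload` are hypothetical, introduced purely for illustration.

```python
import hashlib

# Hypothetical stand-in for a database of known fingerprints.
# The entries here are SHA-256 hex digests of placeholder bytes.
KNOWN_HASHES = {
    hashlib.sha256(b"example-known-image-bytes").hexdigest(),
}


def fingerprint(image_bytes):
    """Return a hex fingerprint of the raw image bytes.

    Assumption: SHA-256 is used only as a simple stand-in; it matches
    byte-identical files and nothing else.
    """
    return hashlib.sha256(image_bytes).hexdigest()


def check_before_upload(image_bytes, known_hashes=KNOWN_HASHES):
    """Label an image 'flagged' if its fingerprint is in the known set."""
    if fingerprint(image_bytes) in known_hashes:
        return "flagged"
    return "clear"


if __name__ == "__main__":
    print(check_before_upload(b"example-known-image-bytes"))
    print(check_before_upload(b"an unrelated holiday photo"))
```

The point of the sketch is that the check compares fingerprints rather than looking at what a picture shows, so only images already present in the database can produce a match.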

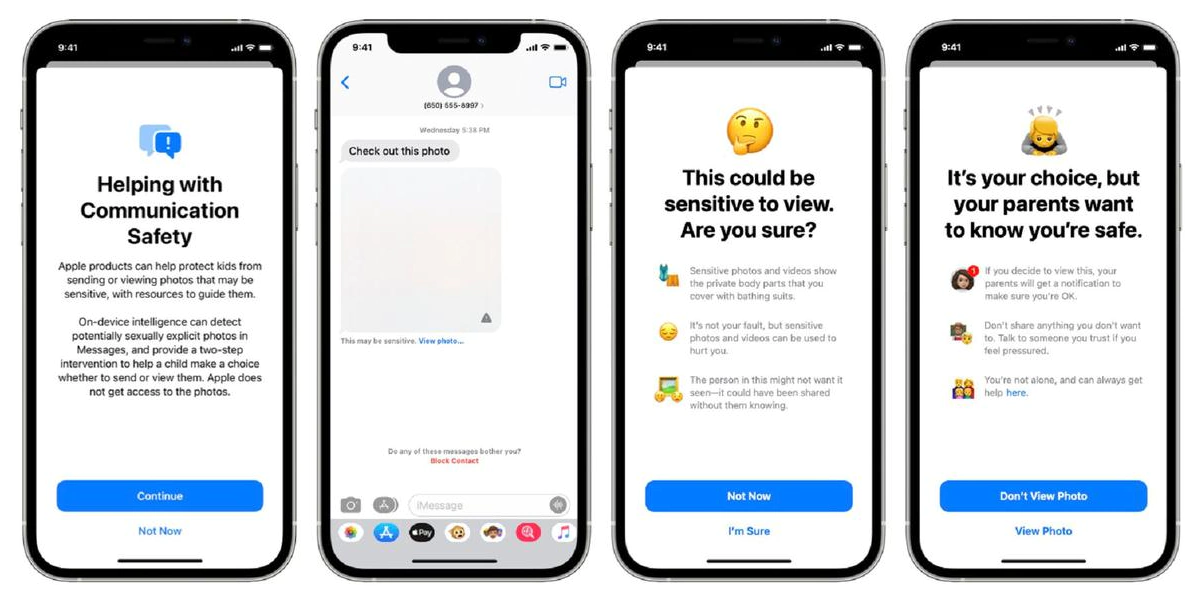

As for iMessage, Apple plans to scan images and, if it finds CSAM, blur the image.

If a child (a user under 13 years old) views such an image, Apple can notify the child's parents so they can take appropriate action.

If a child is trying to send such an image, they’ll be warned, but Apple won't stop them.

If the child continues, Apple can also send a notification to the parents.

Teenagers aged 13-17 will only get a warning notification on their own phones.
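The age-based rules described above can be summarized as a small decision function. This is only a sketch of the behavior as reported here, not Apple's code: the function name, the action labels, and the choice to return no actions for adults (whom the description does not cover) are all assumptions made for illustration.

```python
def message_image_actions(age, direction, proceeds):
    """Return the actions taken for a flagged image in a message.

    age: age of the account holder in years.
    direction: "receive" or "send".
    proceeds: True if the user views or sends the image anyway.
    All names and labels here are hypothetical.
    """
    if direction not in ("receive", "send"):
        raise ValueError("direction must be 'receive' or 'send'")
    if age >= 18:
        # Assumption: the rules described cover only users under 18.
        return []

    actions = []
    if direction == "receive":
        actions.append("blur_image")  # flagged incoming images are blurred
    if direction == "send" or age >= 13:
        actions.append("warn_user")   # warning shown on the user's own phone
    if age < 13 and proceeds:
        actions.append("notify_parents")  # only for children under 13
    return actions


if __name__ == "__main__":
    print(message_image_actions(10, "send", proceeds=True))
    print(message_image_actions(15, "receive", proceeds=True))
```

Note that in this reading the sender is never blocked: a child who ignores the warning can still send the image, and the only extra consequence is the notification to the parents.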

And as for Siri and Search, Apple is tweaking the features to provide additional CSAM-related resources for parents and children.

Furthermore, if Apple finds that users are performing CSAM-related searches, Siri can intervene and warn them about the content.

Apple's method is meant to help stop the spread of CSAM.

But when it comes to privacy, Apple is crossing some boundaries.

According to the digital rights organization Electronic Frontier Foundation (EFF), Apple's implementation has the potential to open backdoors in an otherwise robust encryption system.

“To say that we are disappointed by Apple’s plans is an understatement,” the EFF added.

The organization suggested that scanning for content using a pre-defined database could lead to dangerous use cases. For instance, in a country where homosexuality is a crime, the government “might require the classifier to be trained to restrict apparent LGBTQ+ content.”

Edward Snowden weighed in, saying that Apple is rolling out a mass surveillance tool and that it could scan for anything on users' phones tomorrow.

After all, practically nobody wants anybody else to go through their camera roll or cloud storage account, scan the things stored there, and conclude that they are guilty of something (even if they are guilty).

That "anybody" is not limited to people: most device users also don't want any sort of algorithm or AI judging what they are or are not supposed to do.

Apple plans to bring this CSAM prevention method through iOS 15, iPadOS 15, watchOS 8, and macOS Monterey.

No matter how well-intentioned, @Apple is rolling out mass surveillance to the entire world with this. Make no mistake: if they can scan for kiddie porn today, they can scan for anything tomorrow.

They turned a trillion dollars of devices into iNarcs—*without asking.* https://t.co/wIMWijIjJk— Edward Snowden (@Snowden) August 6, 2021