Three scientists who kickstarted an AI revolution by studying the technology's learning abilities have received the Turing Award.

Geoffrey Hinton, Yann LeCun, and Yoshua Bengio dedicated their knowledge and time to studying neural networks at a time when practically everyone in the AI community preferred to focus on symbolic approaches to building intelligent machines.

Sometimes called the ‘godfathers of AI’, the three have been recognized for their work in developing the AI subfield of deep learning.

The techniques they developed in the 1990s and 2000s enabled huge breakthroughs in tasks like computer vision and speech recognition.

"There was a dark period between the mid-90s and early-to-mid-2000s when it was impossible to publish research on neural nets, because the community had lost interest in it," explained LeCun. "In fact, it had a bad rep. It was a bit taboo."

It was not until 2012 that people finally realized that deep neural networks have astonishing capabilities.

"We organized regular meetings, regular workshops, and summer schools for our students," added LeCun. "That created a small community that [...] around 2012, 2013 really exploded."

The work of Hinton, LeCun and Bengio underpins the modern proliferation of AI technologies, from image recognition used by companies like Google and Facebook to self-driving cars, automated medical diagnoses and many others.

The key ingredients are huge quantities of labeled training data to feed the neural networks, and powerful graphics processing units (GPUs) to run them on, which are better suited to parallel computation than CPUs.

With this knowledge, the community started developing deep learning at a pace never seen before.

But scientists, including Hinton, LeCun and Bengio, have sometimes wondered whether the technology is advancing too quickly.

Deep learning has powered face recognition and other forms of surveillance, for example. In China, this technology has been used for mass surveillance across the country, restricting the movement of criminals and ordinary people alike, and significantly reducing privacy.

Furthermore, the technology is to some extent consolidated in the hands of those with lots of data and computing power.

"It’s a great honor," said LeCun, describing what he felt receiving the award. "As good as it gets in computer science. It’s an even better feeling that it’s shared with my friends Yoshua and Geoff."

For Hinton, his work reflects a fundamental truth about AI that goes back to the man who first speculated about intelligent machines. "One person who strongly believed the root of learning was learning was Turing," he said.

The Turing Award is known as the "Nobel Prize of computing".

Awarded annually with financial support from Google and Intel, the prize is given by the Association for Computing Machinery (ACM) to individuals selected for contributions "of lasting and major technical importance to the computer field."

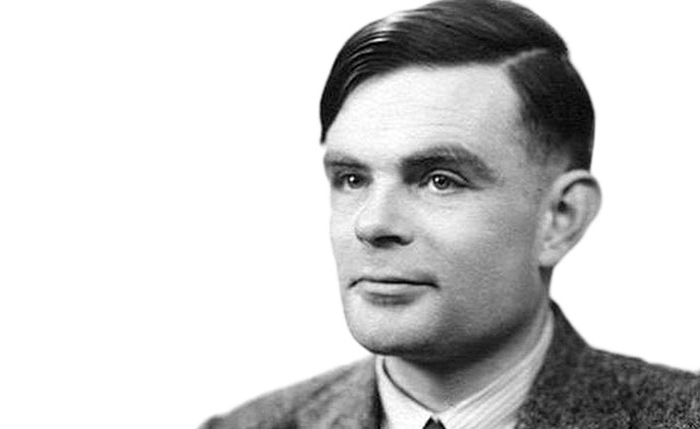

The award is named after Alan Turing. The British mathematician, computer scientist, logician, cryptanalyst, philosopher and theoretical biologist is often credited as being the founder of theoretical computer science and artificial intelligence.

During World War II, Turing worked for the Government Code and Cypher School (GC&CS) at Bletchley Park to create a machine that could break the codes used by the German navy. There, he devised a number of techniques for speeding up the breaking of German ciphers, including improvements to the pre-war Polish bombe method, an electromechanical machine that could find settings for the Enigma machine.

His work is said to have shortened the war in Europe by more than two years and saved over 14 million lives.

After the war, Turing designed the Automatic Computing Engine, one of the first designs for a stored-program computer.

In 1950, Turing addressed the problem of AI and proposed an experiment that became known as the "Turing test". The test is an attempt to define a standard for a machine to be called "intelligent".

The idea was that a computer could be said to "think" if a human interrogator could not tell it apart, through conversation, from a human being.

Turing suggested that rather than building a computer program to simulate a human adult's mind, it would be better to create a simpler one that simulates a child's mind, and then subject it to a course of education.

This idea eventually became the foundation of modern AI and deep learning.

In a field that continues to develop and expand, people will create and discover new methods that are as foundational as those developed by the three godfathers of AI.

"Whether we’ll able to use new methods to create human-level intelligence, well, there’s probably another 50 mountains to climb, including ones we can’t even see yet” said LeCun. "We’ve only climbed the first mountain. Maybe the second."