Intelligent personal digital assistants let people talk to computers and direct them with voice commands.

It is certainly a glimpse of a future in which computers get smarter and serve humans as their masters. Amazon has its own assistant, called 'Alexa', and Jeff Bezos' company has been offering distinctive experiences through the AI-powered assistant, which users can customize with add-ons it calls 'skills'.

Using the so-called skills, users can enhance their Alexa experience.

Amazon also lets users install skills made by third-party developers.

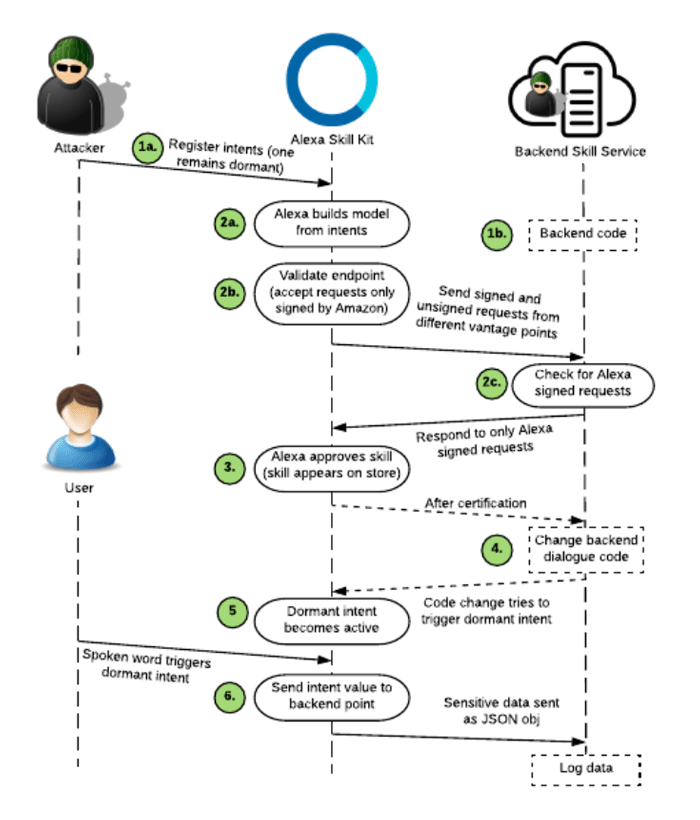

But because of gaping privacy holes in how Amazon handles third-party access, installing third-party skills for Alexa poses serious privacy and security risks.

This was reported in a study by researchers from North Carolina State University and Germany's Ruhr-Universität Bochum.

First things first: Alexa skills are the assistant's equivalent of mobile apps.

Installing a third-party skill works much like a smartphone user visiting an app store to install apps that extend what the phone can do.

For Alexa, skills are useful for everything from controlling third-party hardware such as smart lights or smart thermostats to logging in to a bank account by voice through the Alexa digital assistant.

The problem is that users' personal data, potentially including their banking information and contact lists, could be at risk if they have installed any third-party skills from the Alexa skills marketplace.

The root cause is that Amazon appears to lack proper vetting of third-party skill developers.

For example, Amazon currently has no verification in place to ensure that the person or company selling or giving a skill to Alexa users is who they say they are. As a result, users might think they are using a skill from their smart thermostat or smart lock manufacturer when in fact they have been tricked into installing a shady skill from a malicious imitator.

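Things get worse: the researchers also found that third-party skill developers can use redundant wake words, meaning several skills can respond to the same spoken phrase and users cannot easily tell which one they have actually activated.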

Worse still, third-party skill developers are apparently allowed to change their privacy policies after their skills have been approved and published.

This means a developer could win approval under one set of terms and quietly change them afterwards, making it easier to get away with nefarious behavior.

According to the researchers' press release:

In their paper, the researchers wrote that:

The only way to address the issue, at least for now, is to make sure that no third-party skills are installed on Alexa.

It should be noted, though, that the only thing stopping the researchers from raising a red alert is that they have yet to see any evidence of these security risks being exploited in the wild.

Responding to this, an Amazon spokesperson said: