Users have a choice. This is why YouTube has the Like and Dislike buttons: so users can give their opinion without having to type anything.

On the largest video-sharing platform, where Likes are appreciated and Dislike counts are hidden, users tend to expect that Disliking a video tells the uploader they don't like what they see, and signals to YouTube that they want to see fewer similar videos.

Unfortunately, it doesn't work like that.

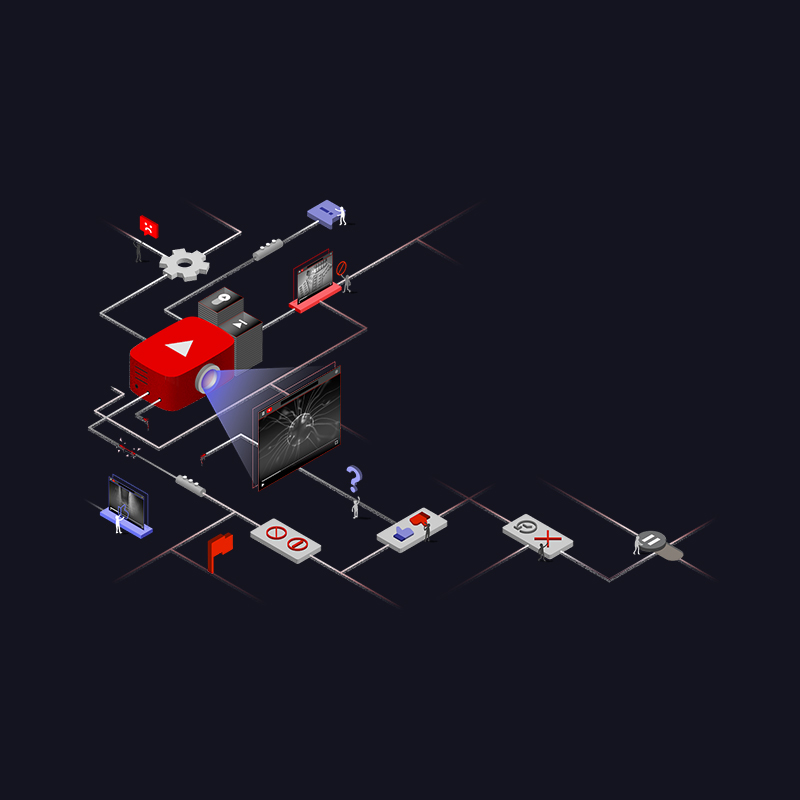

According to research from browser maker Mozilla, YouTube users have little control over what is recommended to them.

The researchers at the company found that YouTube's Dislike button can reduce similar, unwanted recommendations, but only by a tiny fraction.

In other words, pressing that Dislike button may not make a big difference.

> Feel like your YouTube recommendations are out of control? You’re not alone.
>
> We scrutinized @YouTube’s user controls, and quite simply, they don’t work.
>
> Help us give the power back to YouTube users. Sign our petition. #YouTubeRegrets https://t.co/QZ17tTsRNd
>
> Mozilla (@mozilla), September 20, 2022

To come to this conclusion, Mozilla analyzed data donated by 22,722 volunteers through RegretsReporter, an open-source tool Mozilla built to study YouTube’s recommendation algorithm.

With it, Mozilla scrutinized 567,880,195 videos that were recommended to the participants, and worked with 2,757 people to determine how much control people actually have, allowing it to independently audit the platform’s user controls.

By combining large-scale community data with qualitative and quantitative insights to paint a more complete picture, "including a randomized controlled experiment and a machine learning model," Mozilla found that YouTube’s user controls barely change users' recommendations.

"Nothing changed. Sometimes I would report things as misleading and spam and the next day it was back in. It almost feels like the more negative feedback I provide to their suggestions the higher bulls**t mountain gets. Even when you block certain sources they eventually return," said one person.

"We found that YouTube’s user controls somewhat influence what is recommended, but this effect is meager and most unwanted videos still slip through," Mozilla said.

What this means is that YouTube viewers can’t really escape bad recommendations.

It's worth noting that in its experiment, Mozilla's definition of a “bad recommendation” is when YouTube recommends videos to users that are similar to a video they had previously rejected.

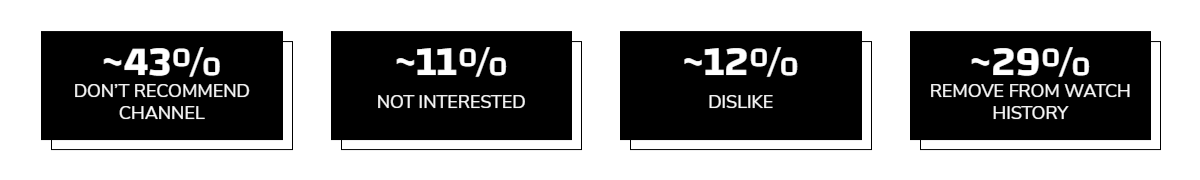

And rejecting a video by Disliking it prevents a mere 12% of bad recommendations.

Other ways to give YouTube feedback include Don't Recommend Channel (~43%), Not Interested (~11%), and Remove from Watch History (~29%).

So, which method is the most effective? "None of them really," said Mozilla.

They do work, but not enough to make a difference that users can actually feel.

Jesse McCrosky, one of the researchers who conducted the study, said that YouTube should be more transparent and give users more influence over what they see.

"Maybe we should actually respect human autonomy and dignity here, and listen to what people are telling us, instead of just stuffing down their throat whatever we think they’re going to eat," McCrosky said in an interview.

As for YouTube, the company said that users can manage their video recommendations through the feedback tools the platform offers, and maintained that users do have a choice.

YouTube said that its recommendation system relies on numerous “signals” and is constantly evolving, so being transparent about how the algorithm works is not as simple as "listing a formula."

"A number of signals build on each other to help inform our system about what you find satisfying: clicks, watch time, survey responses, sharing, Likes and Dislikes," said Cristos Goodrow, a vice president of engineering at YouTube.

"Our controls do not filter out entire topics or viewpoints, as this could have negative effects for viewers, like creating echo chambers," Elena Hernandez, a spokeswoman for YouTube, said in a statement.

"Mozilla’s report doesn’t take into account how our systems actually work, and therefore it’s difficult for us to glean many insights."

And of course, YouTube also said that it has surveyed users and found that they were generally satisfied with the recommendations they saw, and that the platform tries not to block every recommendation of content related to a topic, opinion or speaker.