Snapchat has long been known as a messaging and fun communications app. But Snap, the company behind it, is introducing something that could change that.

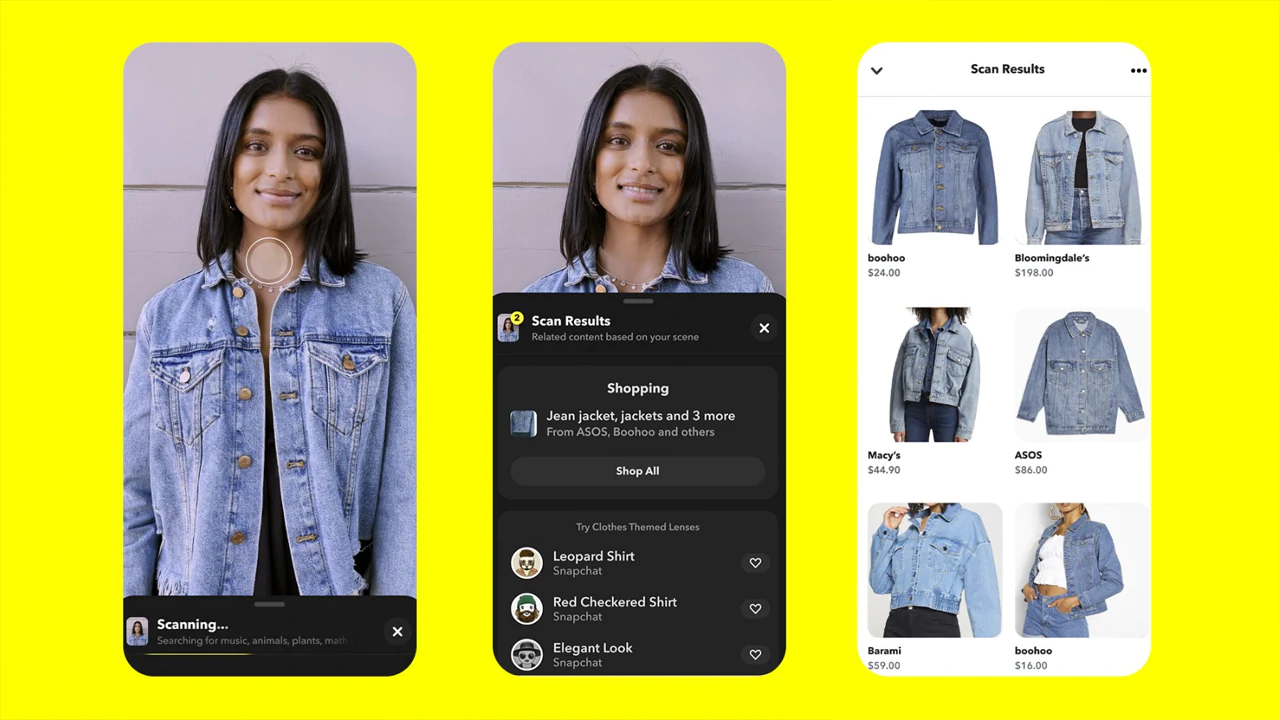

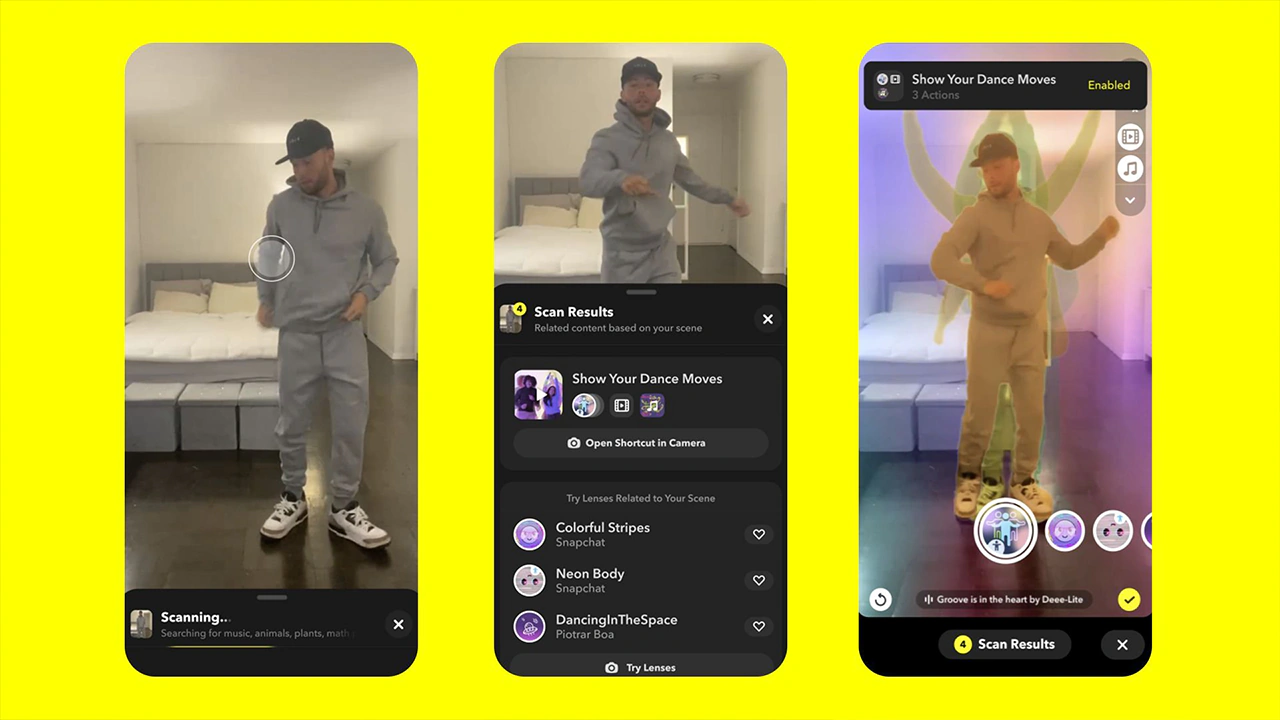

With what it calls the 'Scan' feature, Snapchat wants to let users scan objects in the real world using Augmented Reality (AR) technology, so they can identify anything from clothes to dog breeds. While the Scan feature has been around since 2019, Snapchat has improved it drastically and made it more prominent.

In other words, the Scan feature is transforming the Snapchat app into a visual search engine, which could take Snapchat well beyond messaging.

On top of that, the Scan feature can also suggest Lenses based on what the camera is looking at.

This way, Scan can also help Snapchat address a growing problem for its users: how to find the millions of AR effects made by Snap’s creator community.

Snap started its work on Scan after introducing the ability to scan profile QR codes, and after working with Shazam to identify songs and with Photomath to solve math problems through its camera.

At first, Snapchat's ability to identify items was limited to checking whether an item was for sale on Amazon.

But this time, the Scan feature can do a lot more than just that.

Snap, which previewed the upgrade at its developer conference earlier in 2021, showed that Scan can identify dog breeds, plants, wine, and cars, and surface food nutrition information.

To make this possible, Snapchat partners with a number of other companies and apps.

For example, it partners with the app Vivino to help it identify wines, with Allrecipes to help it suggest recipes based on a specific food ingredient, and more.

Snap plans to keep adding more abilities to Scan over time using a mix of outside partners and what it is able to build in-house.

But the biggest addition comes through its acquisition of Screenshop, an app that allows users to upload screenshots of clothing and shop for similar items.

The term 'visual search' is nothing new.

Back in 2017, Google debuted Lens, allowing users to scan items through their phone camera and identify them using Google's vast index of search results. Lens is integrated into Google Pixel phones and a number of other Android handsets, and is also baked into the main Google mobile app.

Another prominent player with a visual search feature is Pinterest, whose own Lens tool shows similar images based on what users scan in the app.

Even Apple has plans for a similar feature.

In this field, Snapchat is fighting an uphill battle.

However, Snapchat has the chance to be unique, as the app opens to the camera by default. With the Scan feature now front and center, the change could have big implications for how its nearly 300 million daily users interact with the app.

Snap said that more than 170 million people were already using Scan at least once a month before it was put front and center on the camera.

“We definitely think Scan will be one of the priorities for [Snapchat’s] camera going forward,” said Eva Zhan, Snap’s head of camera product. “Long term, we see the camera doing a lot more than what it can do today.”