AI-powered algorithms used by Google, Facebook, YouTube, Amazon, Spotify and others seem to know their users better than the users know themselves.

This happens because the AI systems deployed on these platforms 'learn' from their users' habits to understand what they want most. With that knowledge, they can recommend content (and ads) that users like, even before the users say anything.

These companies can use machine learning with confidence because they sit on an abundance of data and computing resources. With those, they can create and train AIs that bring remarkable improvements to all sorts of operations, including content recommendation, inventory management, sales forecasting, and fraud detection.

AI needs a lot of data to learn the underlying patterns. The more data it can churn through, the better the AI can do what it is supposed to do.

Despite their seemingly magical behavior, AI algorithms are only as good as the data they have been trained on.

In other words, the technology can only predict outcomes reliably as long as they don’t deviate too much from the norm.

This means that as long as the data is predictable, the resulting AI should be predictable. But if the data is corrupted, altered in any way, or changed by events such as a crisis, machine learning models get confused.

Machine learning models are designed to respond to changes in their input. Unfortunately, most of them are also fragile.

They work as intended when the data is predictable.

But when a sudden change happens, such as the outbreak of the novel coronavirus that causes COVID-19, they can perform badly. This is because the input data they need to process differs too much from the data they were trained on.
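To make "differs too much" a little more concrete: one common way practitioners check whether live data has drifted away from the training data is the Population Stability Index (PSI). The Python sketch below is only an illustration under stated assumptions: a single numeric feature, hand-picked bin edges, and made-up daily order counts for one product before and during a crisis. The 0.25 alert threshold is a widely used rule of thumb, not a formal standard, and none of this describes how any particular company monitors its models.

```python
import math
from bisect import bisect_right

def psi(expected, actual, edges, eps=1e-6):
    """Population Stability Index between a training sample (`expected`)
    and a live sample (`actual`), binned with the same `edges`."""
    def proportions(values):
        # len(edges) cut points give len(edges) + 1 bins
        counts = [0] * (len(edges) + 1)
        for v in values:
            counts[bisect_right(edges, v)] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))

# Invented daily orders for one product: before the crisis vs. during it.
train = [10, 11, 9, 10, 12, 11, 10, 9, 10, 11]
live = [40, 55, 60, 48, 52, 58, 61, 45, 50, 57]
edges = [8, 10, 12, 14]  # hand-picked bin boundaries (an assumption)

score = psi(train, live, edges)
print(f"PSI = {score:.2f}")
if score > 0.25:  # rule-of-thumb threshold for a significant shift
    print("Significant drift: the inputs no longer resemble the training data.")
```

A PSI near zero means the live data looks like what the model learned from; a large value is a signal that the model is being asked about a world it never saw and may need to be retrained.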

This can cause some hiccups, as the machine-learning models trained on normal human behavior are finding that normal has changed, and some are no longer working as they should.

For example, an AI used by a grocery supplier may forecast stock reorders that no longer match what the company is actually selling.
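A toy example shows how this can happen. The sketch below assumes a hypothetical reorder rule, `forecast_next_day`, that predicts tomorrow's demand as the average of the last seven days of sales. Real forecasting systems are far more sophisticated, and the sales figures here are invented purely for illustration.

```python
from statistics import mean

def forecast_next_day(sales_history, window=7):
    """Hypothetical reorder rule: predict tomorrow's demand as the
    mean of the most recent `window` days of sales."""
    if len(sales_history) < window:
        raise ValueError("need at least `window` days of history")
    return mean(sales_history[-window:])

# Invented data: four weeks of steady demand, then three days of panic buying.
normal_weeks = [100] * 28
panic_days = [300, 300, 300]
history = normal_weeks + panic_days

forecast = forecast_next_day(history)
actual_demand = 300  # assume demand stays elevated tomorrow

print(f"forecast: {forecast:.1f}, actual: {actual_demand}")
print(f"shortfall: {actual_demand - forecast:.1f} units")
```

Because the rule only looks backward, it lags behind a sudden jump and under-orders until the new pattern fills its window.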

Many of these problems arise because more businesses are buying machine-learning systems but lack the in-house expertise to maintain them. In situations like the coronavirus pandemic, some AI-powered algorithms need to be retrained.

And this process requires expert human intervention.

The pandemic has revealed how intertwined modern human lives are with AI, exposing a delicate codependence in which changes to our behavior change how AI works, and changes to how AI works change our behavior.

This is a reminder that human involvement in automated systems remains crucial.

It is a mistake to assume that an AI is a plug-and-forget system.

During the coronavirus pandemic, things are anything but normal.

People are working from home, studying from home, commuting less, and shopping more online and less at brick-and-mortar stores. Instead of meeting others in person, they use video-call apps and practice social distancing to stop the spread of COVID-19.

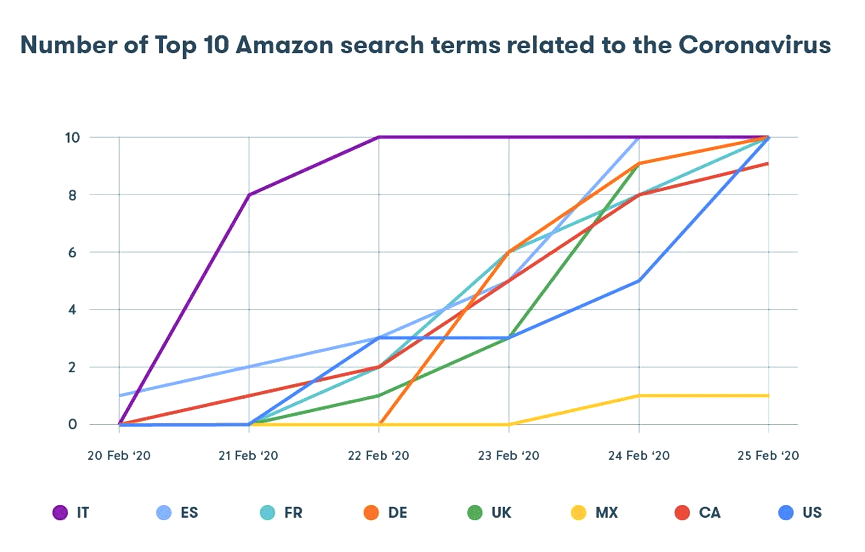

The image above, provided by Nozzle, shows that it took less than a week at the end of February 2020 for the top 10 Amazon search terms in multiple countries to fill up with products related to COVID-19. Certain items peaked first in Italy, followed by Spain, France, Canada, and the U.S., with the UK and Germany lagging slightly behind.

“It’s an incredible transition in the space of five days,” said Rael Cline, Nozzle’s CEO. The ripple effects have been seen across retail supply chains.

While we humans can also get confused by unusual events, we have an intelligence that extends well beyond pattern recognition and rule matching. Humans have all sorts of cognitive abilities that enable them to invent and to adapt to an ever-changing world.

Machines, on the other hand, aren't blessed with those abilities. They can't experience the world the way humans do, which leaves them practically clueless in this pandemic.

With so much of what people do now connected to the internet, the impact of the coronavirus pandemic has been felt far and wide, touching mechanisms that remain hidden in more typical times.

As fascinating as modern AI algorithms are, they certainly don’t see or understand the world as we do. More importantly, while they can be good at spotting correlations between variables, machine learning models don’t understand causation.

As a result, AIs get confused when facing situations they were not trained for, and stop working as usual.

Warehouses that depended on machine learning to keep their stock filled at all times couldn't predict which items needed to be replenished. Fraud detection systems that target anomalous behavior are confused by new shopping and spending habits. Shopping recommendations are also not as good as they used to be.
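A simple statistical rule illustrates the fraud-detection problem. The sketch below assumes a hypothetical detector, `is_anomalous`, that flags a purchase when it lies more than three standard deviations from a customer's past spending (a z-score rule). Production fraud systems combine many more signals, and the amounts below are invented.

```python
import statistics

def is_anomalous(history, amount, z_threshold=3.0):
    """Flag `amount` if its z-score against past spending exceeds the threshold."""
    mu = statistics.mean(history)
    sigma = statistics.stdev(history)  # sample standard deviation
    if sigma == 0:
        # No variation in the past: anything different looks unusual.
        return amount != mu
    return abs(amount - mu) / sigma > z_threshold

# Invented history of a customer's occasional online grocery orders (USD).
past_orders = [20, 25, 18, 22, 30, 24, 21, 19, 26, 23]

print(is_anomalous(past_orders, 25))   # False: a typical pre-pandemic order
print(is_anomalous(past_orders, 150))  # True: a full weekly shop placed online during lockdown
```

The second order is perfectly legitimate, yet the rule flags it, because the "normal" it learned no longer describes how the customer shops.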

So if machines are to be trusted, humans need to watch over them.

This pandemic is the perfect reminder of that, and it also teaches us that AIs are simply "breathing engines" that need to be taken care of.

For the moment, what we have are AI systems that can perform specific tasks in limited environments. One day, if humans manage to create Artificial General Intelligence (AGI), software with the general problem-solving capabilities of the human mind, this issue may be eliminated for good.

At that point, machines may be able to work unsupervised. That kind of AI could innovate and quickly find solutions to pandemics and other black swan events.

Until then, as the coronavirus pandemic has highlighted, artificial intelligence will be about machines complementing human efforts, not replacing them.