Black and white are just two colors. Between them lie countless shades.

For many years, the AIs that researchers have developed have been biased towards white men and have discriminated against women. Sometimes, AIs can also be racist. To help prevent, or at least reduce, those kinds of biases, tech giant Google is experimenting with introducing different skin colors for its AI to learn from.

Google knows that in order to create a smarter AI, bias problems must be addressed.

To make this happen, Google said in a blog post that it is partnering with a Harvard professor to promote a scale for measuring skin tones.

The tech giant is working with Ellis Monk, an assistant professor of sociology at Harvard and the creator of the 'Monk Skin Tone' scale, or MST.

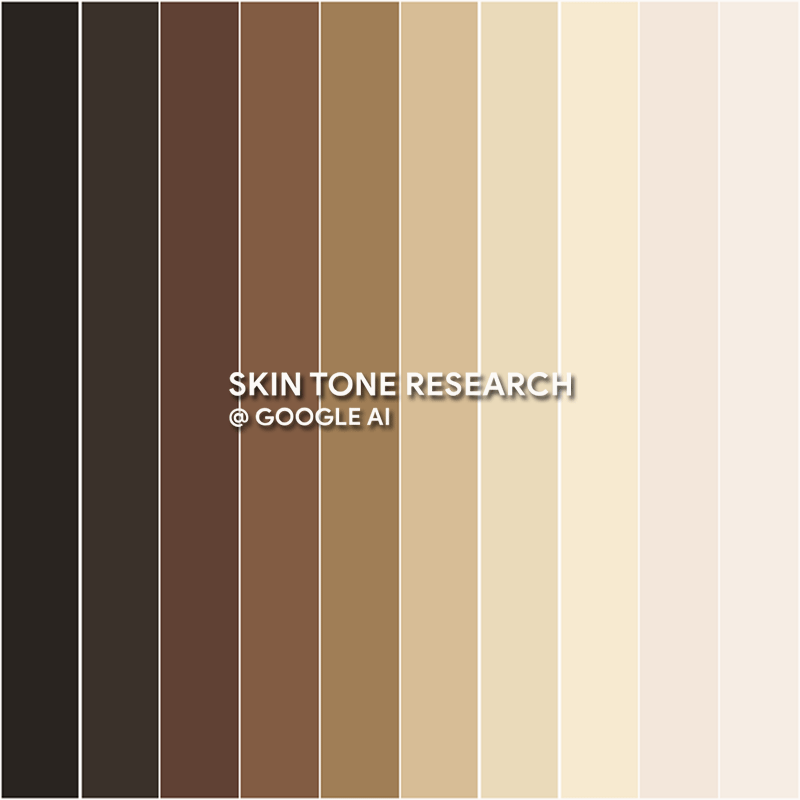

The MST scale is designed to replace outdated skin tone scales that are biased towards lighter skin. According to Monk, older scales used by tech companies to categorize skin color can lead to products that perform worse for people with darker skin colors.

Not only can the experiment help fix bias problems, it can also address diversity problems in the company’s products.

As explained by Monk:

"The Monk Skin Tone scale is a 10-point skin tone scale that was deliberately designed to be much more representative and inclusive of a wider range of different skin tones, especially for people [with] darker skin tones."

While there are certainly other ways to help reduce AI bias, Monk suggests that one common contributing factor is the use of outdated skin tone scales when collecting training data.

For example, one of the most popular skin tone scale systems is the Fitzpatrick scale, which is widely used in both academia and AI. This scale, however, was originally designed in the 1970s to classify how people with paler skin burn or tan in the sun, and was only later expanded to include darker skin.

It was never meant for training AI of any kind.

As a result, machine learning models trained on data labeled with the Fitzpatrick scale tend to fail to capture the full range of skin tones, resulting in a bias towards lighter skin types.

The main difference between the Fitzpatrick scale and the MST scale is that the former comprises six categories, whereas the latter expands this to 10 different skin tones.

Monk said that he chose this number based on his own research, to balance diversity and ease of use.

He compared his MST scale to some other skin tone scales which offer more than a hundred different categories, and said that too many choices can lead to inconsistent results.

"Usually, if you got past 10 or 12 points on these types of scales [and] ask the same person to repeatedly pick out the same tones, the more you increase that scale, the less people are able to do that," Monk explained. "Cognitively speaking, it just becomes really hard to accurately and reliably differentiate."

A choice of 10 skin tones, he concluded, is much more manageable.

Creating and using MST is one step. The next step would be integrating the scale into AI learning systems, and then into real-world applications.

To promote the MST scale, Google has created a new website, skintone.google, which explains the research and the best practices for its use in AI.
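Monk's point about repeatability can be made concrete with a simple test-retest check: ask the same person to label the same items twice and measure how often the two labels match. The sketch below is only an illustration of that idea, not Monk's methodology; the function name `retest_agreement` and the sample labels are hypothetical.

```python
def retest_agreement(first_pass, second_pass):
    """Fraction of items that one rater labeled identically in two passes.

    Both arguments are lists of scale points (for example 1..10 on a
    10-point scale), in the same item order.
    """
    if len(first_pass) != len(second_pass):
        raise ValueError("both passes must rate the same items")
    if not first_pass:
        raise ValueError("need at least one rated item")
    matches = sum(a == b for a, b in zip(first_pass, second_pass))
    return matches / len(first_pass)


# Hypothetical labels from one rater, collected on two occasions.
first = [1, 4, 4, 7, 10, 2, 5, 9]
second = [1, 4, 5, 7, 10, 2, 5, 8]
agreement = retest_agreement(first, second)  # 6 of 8 labels match
```

Under this kind of measure, a scale with many more points would be expected to score lower for the same rater, which is the trade-off Monk describes.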

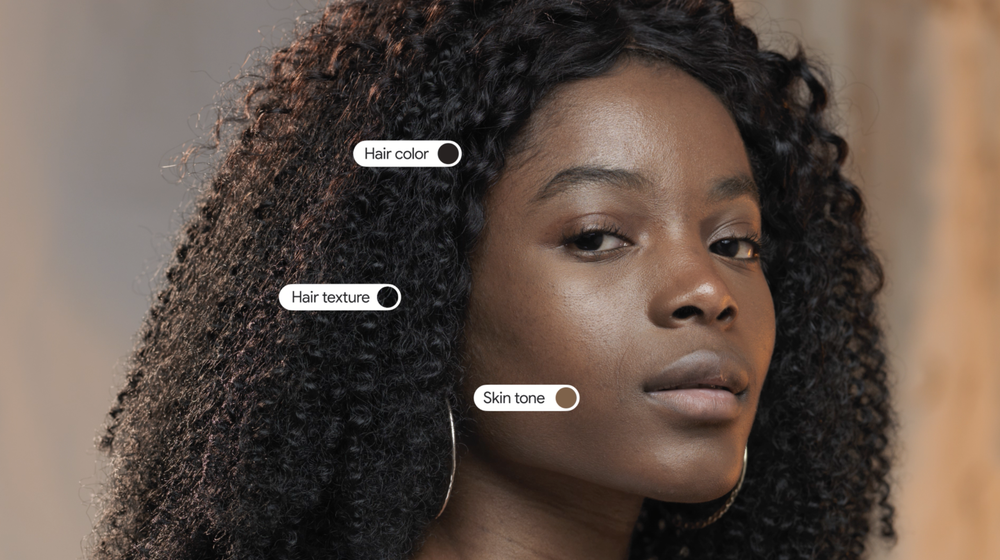

Beyond that, Google also said that it is applying the MST scale to a number of its own products.

Google hopes that in the near future, users will be able to search for things like “eye makeup” or “bridal makeup looks,” and then filter the results by skin tone. The company also plans to use the MST scale to check the diversity of its results, so that when users search for images on Google, the search engine returns more images of people of color.

"One of the things we’re doing is taking a set of [image] results, understanding when those results are particularly homogenous across a few set of tones, and improving the diversity of the results," said Google’s head of product for responsible AI, Tulsee Doshi.
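Google has not published how this check works. As a rough illustration of what "homogeneous across a few tones" could mean, the sketch below assumes each image result already carries an MST label from 1 to 10 and scores the spread of those labels with normalized entropy. The function names and the 0.5 threshold are assumptions made for this example.

```python
import math
from collections import Counter

MST_POINTS = 10  # the MST scale has 10 tones, labeled 1..10 here


def normalized_entropy(labels):
    """Spread of MST labels: 0.0 means one tone only, 1.0 means all
    ten tones appear equally often."""
    if not labels:
        raise ValueError("need at least one labeled result")
    if any(not 1 <= tone <= MST_POINTS for tone in labels):
        raise ValueError("MST labels must be between 1 and 10")
    counts = Counter(labels)
    total = len(labels)
    entropy = -sum((n / total) * math.log(n / total) for n in counts.values())
    return abs(entropy / math.log(MST_POINTS))


def is_homogeneous(labels, threshold=0.5):
    """Flag a result set whose tones cluster on only a few points.

    The threshold is an arbitrary illustrative value, not a Google setting.
    """
    return normalized_entropy(labels) < threshold


# Hypothetical MST labels for the top eight results of one image query.
clustered = [2, 2, 2, 3, 2, 2, 3, 2]
flagged = is_homogeneous(clustered)
```

A result set flagged this way would be a candidate for re-ranking. As Doshi notes later, what counts as a good spread would still differ by user, region, and query.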

Solving AI biases created by training data can be culturally and politically challenging. But if the goal is to create a better AI that is less biased, diversity in training data is the key.

If all goes well, all Google has to do is adjust its search engine results pages based on skin color and filter them based on the user's geography.

"What diversity means, for example, when we’re surfacing results in India [or] when we’re surfacing results in different parts of the world, is going to be inherently different," explained Doshi.

"It’s hard to necessarily say, ‘oh, this is the exact set of good results we want,’ because that will differ per user, per region, per query."

Introducing a new and more inclusive scale for measuring skin tones is a step forward, but much thornier issues involving AI and bias remain.

Further reading: Human-Sourced Biases That Would Trouble The Advancements Of Artificial Intelligence