In the crowded race among large language models (LLMs), companies like OpenAI, Anthropic, xAI, and Google continue to release more advanced models at a rapid pace.

For users, keeping up is becoming a challenge. Switching between separate apps for different models fragments workflows and often means repeating the same context again and again. This is where Poe positions itself differently.

Rather than competing as a single model, Poe acts as a central hub, giving users access to a wide range of leading systems, including GPT, Claude, Grok, and Gemini, all within one interface.

The appeal is simple: no need to juggle multiple platforms, subscriptions, or logins just to compare outputs or complete everyday tasks.

This approach has made Poe a practical response to the fragmentation that defines today’s AI ecosystem. Instead of committing to one provider, users can move fluidly between models depending on what they need.

Now, Poe is pushing that idea further with the introduction of its memory feature.

Memory is now available

Poe can remember your preferences, interests, and what you're working on across chats, so you don't have to repeat yourself. Works with most official bots, including Claude, GPT, and Gemini.

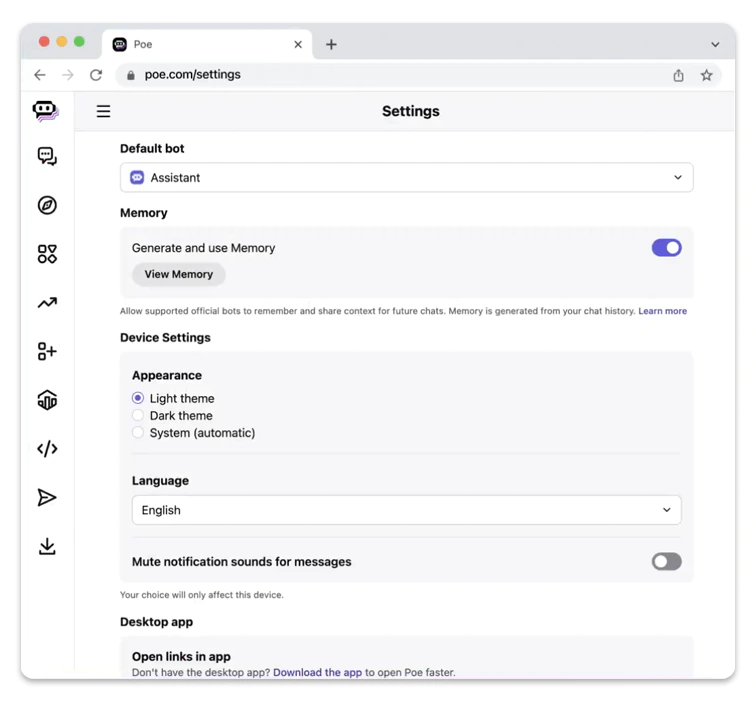

Off by default. Turn it on in Settings > Memory. pic.twitter.com/Nzmu53d11Q

Posted by Poe (@poe_platform), May 6, 2026

Unlike memory systems in individual platforms, this feature operates at the platform level.

While tools like ChatGPT, Grok, and Claude store context within their own ecosystems, Poe creates a shared memory profile that multiple supported bots can access.

This means users can switch between models without losing continuity. Preferences, ongoing projects, and communication styles carry over automatically, reducing the need to restate information each time.

The way this memory is built also sets it apart. It remains off by default and only begins collecting information after activation. From that point, it updates once per day using only new conversations, so older chats are left out and the resulting profile is more controlled and intentional.

Users also retain full control. They can review what is stored, edit details, or delete the memory entirely at any time. The system focuses on practical context, such as work focus or preferred tone, while aiming to avoid overly sensitive information.
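To make the behavior described above concrete, here is a minimal sketch of an opt-in, platform-level memory profile. It is not Poe's implementation: the class names (`Conversation`, `MemoryProfile`), the method names, and the idea that each conversation arrives with pre-extracted `facts` are all assumptions made for illustration. It only models the rules stated in the article: off by default, updated at most once per day, built only from conversations after activation, shared across bots, and editable or erasable by the user.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import Dict, List, Optional


@dataclass
class Conversation:
    """Hypothetical record of one chat with one bot."""
    bot: str
    timestamp: datetime
    facts: Dict[str, str]  # assumed: context already extracted from the chat


@dataclass
class MemoryProfile:
    """Hypothetical shared profile that every supported bot reads from."""
    enabled: bool = False                      # off by default
    last_update: Optional[datetime] = None
    entries: Dict[str, str] = field(default_factory=dict)

    def enable(self, now: datetime) -> None:
        # Collection starts at activation; earlier chats are never read.
        self.enabled = True
        self.last_update = now

    def daily_update(self, conversations: List[Conversation], now: datetime) -> None:
        # Do nothing if memory is off or less than a day has passed.
        if not self.enabled or self.last_update is None:
            return
        if now - self.last_update < timedelta(days=1):
            return
        # Only conversations newer than the previous update are considered.
        for conv in sorted(conversations, key=lambda c: c.timestamp):
            if self.last_update < conv.timestamp <= now:
                self.entries.update(conv.facts)
        self.last_update = now

    def context_for(self, bot: str) -> Dict[str, str]:
        # The same profile is returned regardless of which bot asks.
        return dict(self.entries) if self.enabled else {}

    def edit(self, key: str, value: str) -> None:
        self.entries[key] = value

    def clear(self) -> None:
        self.entries.clear()


# Usage: a chat held before activation is ignored, a later one is picked up.
start = datetime(2026, 5, 6, 9, 0)
profile = MemoryProfile()
profile.enable(start)
chats = [
    Conversation("GPT", start - timedelta(days=2), {"tone": "formal"}),
    Conversation("Claude", start + timedelta(hours=3), {"project": "launch plan"}),
]
profile.daily_update(chats, start + timedelta(days=1))
shared = profile.context_for("Gemini")
```

In this sketch, `shared` contains only the project noted in the Claude chat, and a Gemini session sees it even though it never took part in that conversation. A real system would also need to decide what counts as a fact worth storing and how to filter sensitive details, which this example does not attempt.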

In contrast, memory features in individual models tend to accumulate context more continuously across past interactions. While that can create deeper personalization within a single platform, it does not transfer when users switch tools.

Poe’s approach addresses that exact limitation.

In practice, a discussion started with one model can carry over to another without interruption. A project outlined in GPT can continue in Claude, or a preference established in one chat can shape responses in another. The experience becomes less about restarting conversations and more about continuing them across systems.

This kind of interoperability is what sets Poe apart. It does not replace the strengths of individual models, but instead connects them, turning a fragmented ecosystem into something more coherent.

As the LLM space continues to evolve, that may become increasingly valuable. The challenge is no longer just choosing the best model, but managing how they all fit together.

With its memory feature, Poe is making a clear bet: that the future of AI is not just smarter models, but smoother transitions between them.