AI can be used for many things, from altering reality to creating something out of thin air.

Deepfakes, for example, took the world by storm when they spread across the internet. This time, a team of researchers from Tel Aviv University has developed a neural network that reads recipes and generates images of what the finished dish should look like.

Recipe books include photos partly because most of us cannot really picture what a dish will look like just by reading its ingredients. Here, the researchers set out to show that an AI can, at least to some degree, overcome that limitation.

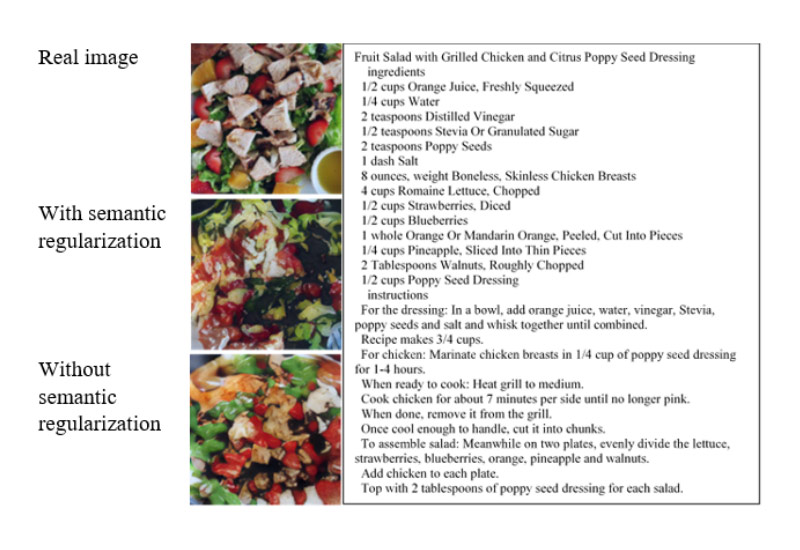

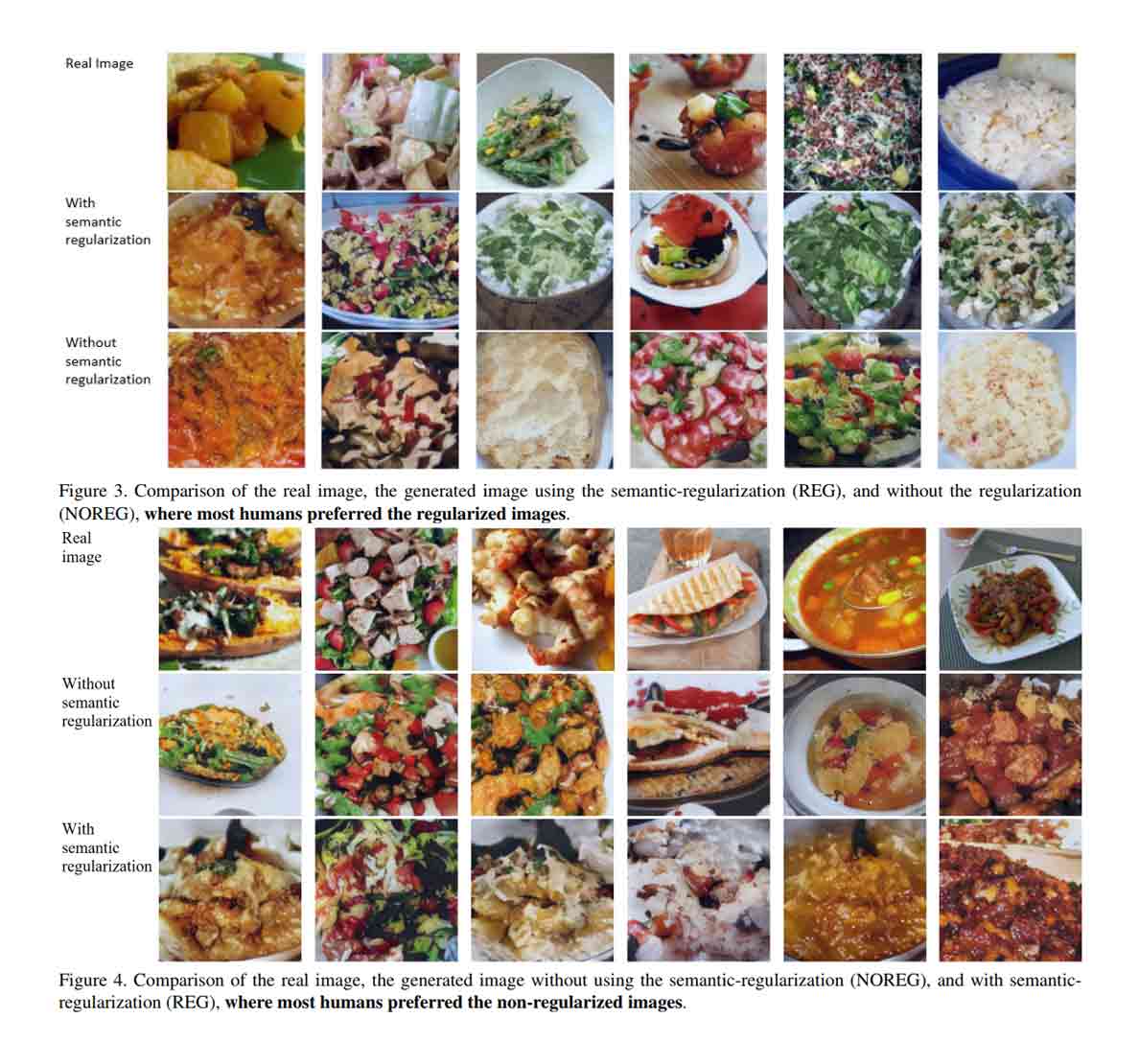

In short, the researchers created an AI that generates images of imaginary dishes from nothing more than a recipe's list of ingredients and its cooking instructions.

The team, consisting of researchers Ori Bar El, Ori Licht, and Netanel Yosephian, built the AI on a modified version of a generative adversarial network (GAN) called StackGAN V2. They then trained it on 52,000 written recipes paired with photos of the finished dishes from the recipe1M dataset.

According to researcher Ori Bar El:

"Then, I wondered if I can do the opposite, instead. Namely, generating food images based on the recipes. We believe that this task is very challenging to be accomplished by humans, all the more so for computers. Since most of the current AI systems try [to] replace human experts in tasks that are easy for humans, we thought that it would be interesting to solve a kind of task that is even beyond humans’ ability. As you can see, it can be done in a certain extent of success."

"Our system takes a recipe as an input and generates, from scratch, an image that reflects the food that the system ‘believes’ this recipe describes."

"The important aspect is that the system has no access to the title of the recipe — otherwise this task would have been pretty easy — and that the text of the recipe is both long and does not describe the visual content of the image directly. [This fact] makes this task very hard even for humans, and all the more so for computers."

The AI generates its images in two stages.

First, the recipe text is converted into a vector of numbers using a technique called text embedding. This lets the AI capture the meaning of the text by mapping semantically similar pieces of text to nearby vectors in the embedding space. A separate network then maps the text vectors and the images into a common space so that matching recipes and images line up.
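To make the idea of "nearby vectors" concrete, here is a minimal sketch in plain Python. It is not the researchers' model: the three-dimensional `WORD_VECTORS` table and the photo vectors are hypothetical toy values (a real system learns much larger embeddings from data), recipes are embedded by simply averaging word vectors, and closeness is measured with cosine similarity. The `alignment_loss` function illustrates one common way to align text and image vectors, a margin-based ranking loss; the article does not say which loss the researchers used.

```python
import math

EMBED_DIM = 3

# Hypothetical toy word vectors; a real system learns these from data.
WORD_VECTORS = {
    "pasta":     [0.9, 0.1, 0.0],
    "spaghetti": [0.85, 0.15, 0.05],
    "tomato":    [0.6, 0.4, 0.1],
    "boil":      [0.7, 0.2, 0.1],
    "beef":      [0.1, 0.9, 0.2],
    "bun":       [0.05, 0.8, 0.4],
    "grill":     [0.1, 0.85, 0.3],
}

def embed(text):
    """Average the vectors of known words into one fixed-size recipe vector."""
    vectors = [WORD_VECTORS[w] for w in text.lower().split() if w in WORD_VECTORS]
    if not vectors:
        return [0.0] * EMBED_DIM
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(EMBED_DIM)]

def cosine_similarity(a, b):
    """1.0 means the vectors point the same way; values near 0 mean unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def alignment_loss(text_vec, matching_image_vec, other_image_vec, margin=0.3):
    """Hinge loss that reaches zero once the matching image is closer to the
    recipe (by cosine similarity) than a mismatched image by at least `margin`."""
    return max(0.0, margin
               - cosine_similarity(text_vec, matching_image_vec)
               + cosine_similarity(text_vec, other_image_vec))

if __name__ == "__main__":
    pasta = embed("boil pasta with tomato")
    spaghetti = embed("boil spaghetti in tomato sauce")
    burger = embed("grill beef and serve on a bun")
    print(cosine_similarity(pasta, spaghetti), cosine_similarity(pasta, burger))
```

The two pasta recipes share no exact wording beyond "boil" and "tomato", yet their vectors end up far closer to each other than to the burger recipe. During training, a loss like `alignment_loss` would push each recipe vector toward the vector of its own photo and away from photos of other dishes.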

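In the second stage, the GAN generates new imaginary images and evaluates them. A GAN pits two networks against each other: a generator that produces images and a discriminator that tries to tell them apart from real photos. As the generator learns to fool the discriminator into accepting a generated image as a real photo, the pictures the system produces become increasingly realistic.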
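The tug-of-war between the two networks comes down to two opposing loss functions. The sketch below shows the standard binary cross-entropy GAN losses for a single image, written in plain Python; `d_real` and `d_fake` stand for the discriminator's estimated probability that a real or a generated image is real. It deliberately leaves out what makes StackGAN V2 special (conditioning on the recipe embedding and generating images at several resolutions), so treat it as an illustration of the general GAN objective rather than the researchers' code.

```python
import math

def bce(prob, target, eps=1e-12):
    """Binary cross-entropy for one predicted probability and a 0/1 target."""
    prob = min(max(prob, eps), 1.0 - eps)  # clamp to avoid log(0)
    return -(target * math.log(prob) + (1 - target) * math.log(1 - prob))

def discriminator_loss(d_real, d_fake):
    """The discriminator is rewarded for scoring real photos near 1
    and generated images near 0."""
    return bce(d_real, 1) + bce(d_fake, 0)

def generator_loss(d_fake):
    """Non-saturating generator loss: the generator is rewarded when the
    discriminator scores its image near 1, i.e. when it is fooled."""
    return bce(d_fake, 1)

if __name__ == "__main__":
    # Early in training: the discriminator easily spots the fake.
    print(discriminator_loss(0.9, 0.1), generator_loss(0.1))
    # Later: the generated image fools the discriminator.
    print(discriminator_loss(0.9, 0.9), generator_loss(0.9))
```

Training alternates between lowering `discriminator_loss` (better at spotting fakes) and lowering `generator_loss` (better at fooling the discriminator); each network's improvement raises the other's loss, which is what drives the generated images toward realism.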

In their paper, the researchers acknowledge that the AI is not perfect, at least not yet.

For example, the images in the recipe1M dataset are of lower quality than those in the CUB and Oxford102 datasets, which are widely used collections of bird and flower photos.

Many of the dataset's images are blurry, and others were taken in poor lighting. The images are also not square, which made it harder for the researchers to train the models.
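GANs such as StackGAN V2 typically generate square images of a fixed size, so non-square training photos have to be cropped, padded, or resized first. The article does not say how the researchers handled this; the sketch below shows one common option, a center crop to the largest square, on a hypothetical image stored as a plain Python list of pixel rows.

```python
def center_crop_square(image):
    """Crop a 2-D image (a list of equal-length rows) to its largest centered square."""
    height = len(image)
    width = len(image[0]) if height else 0
    side = min(height, width)
    top = (height - side) // 2   # rows trimmed from the top
    left = (width - side) // 2   # columns trimmed from the left
    return [row[left:left + side] for row in image[top:top + side]]

if __name__ == "__main__":
    wide_photo = [
        [1, 2, 3, 4],
        [5, 6, 7, 8],
    ]
    print(center_crop_square(wide_photo))
```

The trade-off is that cropping throws away the edges of the photo, which can cut off part of the dish; padding keeps everything but fills the frame with empty borders instead.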

This is one of the reasons the AI succeeded in producing "porridge-like" images for foods such as pasta, soup, and salad, but struggled to generate images of foods with distinct shapes, such as hamburgers or chicken.

Ori Bar El and his team want to keep developing the AI, hoping to extend its capabilities to generating images beyond food: