When it comes to artificial intelligence, researchers seem to love tweaking algorithms to see how smart computers can be.

One of the best things about computers is that they can learn as much from a simulation as they can from "real-world" experience. This means that a computer given a proper driving simulator should learn as much as it would from tests that use real cars as its body.

The world is already seeing AIs capable of controlling cars: changing lanes, braking in an emergency, cruising and more.

To make those autonomous or semi-autonomous cars smarter, researchers need to train them on large amounts of data.

Usually, the simulators car companies use to train their autonomous cars aren't all that interesting, as they're mostly physics engines designed to be interpreted by a neural network.

Sony is changing things a bit by making driving simulation for AI a lot more interesting and, of course, a lot more fun: it has an AI play one of the most popular driving simulators ever, Gran Turismo.

First off, it should be noted that Gran Turismo isn't advanced software designed to train AIs. Every Gran Turismo game since the first in 1997 has been designed as a racing game to be played by humans, not machines.

But still, the game has shown that it is up to the task.

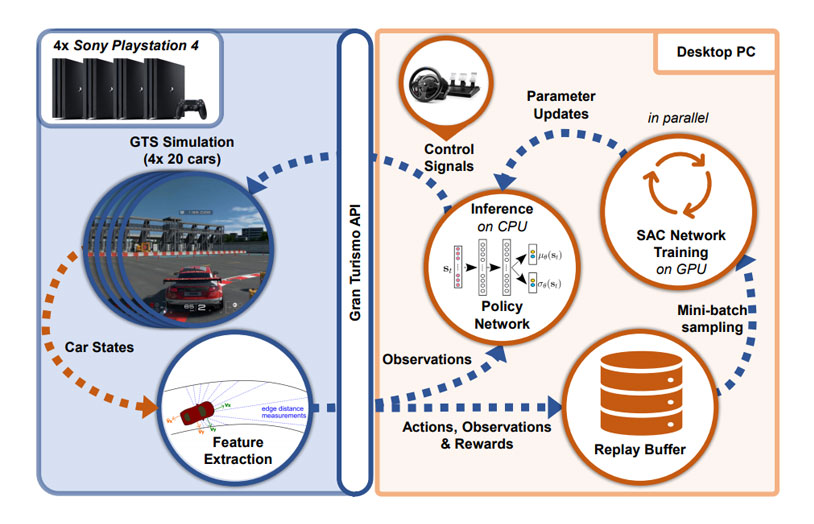

Researchers from the University of Zurich and Sony AI Zurich published a pre-print paper showcasing the development of an autonomous agent designed to learn from Gran Turismo Sport, the racing game from Polyphony Digital released by Sony Interactive Entertainment in 2017.

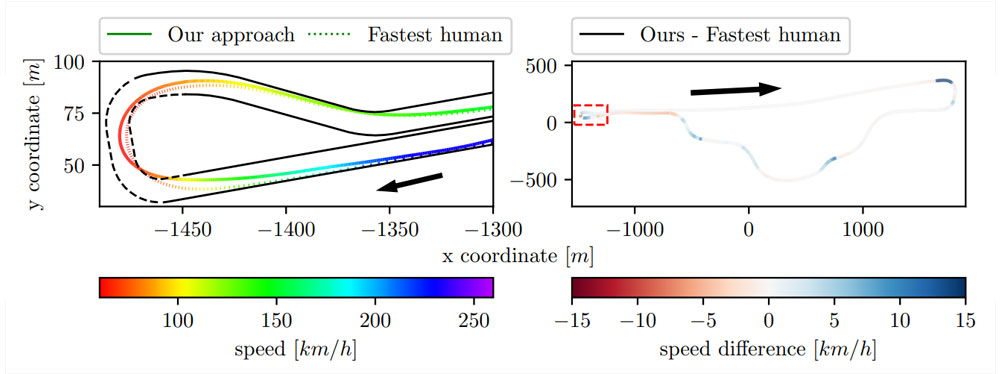

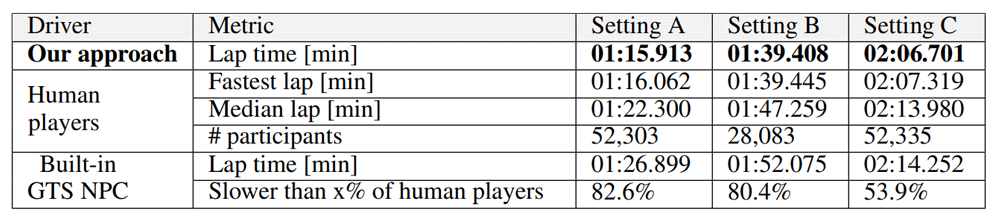

The researchers showed that the game can successfully train an AI to drive faster than the "built-in non-player character of Gran Turismo Sport", and that the AI is also capable of outperforming "the fastest known times in a dataset of personal best lap times of over 50,000 human drivers."

This is indeed an achievement.

According to the researchers:

Gran Turismo has long been one of the popular gateways to real-life racing. With each new version, the game improves the experience and moves a step closer to real driving.

This is also why the game franchise has been billed as “the real driving simulator”, as it can help gamers move to the driver’s seat.

Gran Turismo also has what it calls the Gran Turismo Academy, in which PlayStation partners with Nissan to run an international virtual-to-reality driving competition for Gran Turismo players around the world. Winners would be given a chance to become real-life professional racing drivers.

And in this case, the game has proven to researchers that it can train computers too.

But here, the researchers needed to address one common trick that most built-in non-player characters (NPCs) in racing games rely on: unfair advantages when facing expert human drivers.

For example, when facing human drivers who are faster than them, racing games can give their NPCs increased engine power, traction or even top speed, going well beyond the car's specifications and capacity.

This shouldn't be present in a truly competitive autonomous control policy.

The researchers chose the game for its similarity to real driving and for its relatively low cost compared with dedicated driving simulators for AI training or actual race cars.

So rather than cheating or tweaking the rules, the team turned to a branch of AI called deep reinforcement learning, in which the AI learns by trial and error to read the road ahead and react in a more human-like fashion.
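In reinforcement learning, the agent improves by trial and error, guided by a numeric reward. For a racing agent, one plausible reward is the distance gained along the track at each step, since covering a lap in fewer steps means a faster lap. The sketch below is only an illustration under that assumption: the function name, the wall penalty and its value are hypothetical and are not taken from the researchers' paper.

```python
def progress_reward(prev_pos, curr_pos, track_length, hit_wall=False, wall_penalty=1.0):
    """Hypothetical per-step reward: metres gained along the track centreline.

    prev_pos and curr_pos are distances (in metres) from the start/finish line,
    each in the range [0, track_length).
    """
    # Wrap the difference into [-track_length/2, track_length/2) so that
    # crossing the start/finish line counts as a small step forward,
    # not as a huge jump backwards.
    half = track_length / 2
    delta = (curr_pos - prev_pos + half) % track_length - half

    # Assumed penalty (illustrative value) to discourage touching the walls.
    penalty = wall_penalty if hit_wall else 0.0
    return delta - penalty
```

For example, `progress_reward(990.0, 5.0, 1000.0)` treats crossing the start/finish line as 15 metres of forward progress rather than a jump backwards.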

"We aim to find a policy that minimizes the total lap time for a given car and track."

The researchers then evaluated the approach in three different race settings, which feature different cars and tracks of varying difficulty.

"We compare our approach to the built-in NPC as well as a set of over 50,000 human drivers," the researchers added.

To keep the comparison with the human drivers fair, the researchers also limited what the AI could do at the controls.
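Keeping an agent's inputs comparable to a human's usually means bounding both how far the steering can turn and how quickly it can change, as a physical wheel would. The article does not say how the researchers implemented their restriction, so the following is a minimal sketch under assumed conditions: steering is normalised to the range -1 to 1, and the function name and both limits are hypothetical.

```python
def constrain_steering(requested, previous, max_angle=1.0, max_rate=0.1):
    """Hypothetical filter that keeps a steering command humanly feasible.

    requested: steering value the policy asks for (normalised, assumed -1..1)
    previous:  steering value applied at the previous control step
    max_angle: assumed physical limit of the wheel
    max_rate:  assumed maximum change allowed in one control step
    """
    # Limit how far the wheel can turn within a single control step.
    step = max(-max_rate, min(max_rate, requested - previous))

    # Keep the resulting position inside the wheel's physical range.
    return max(-max_angle, min(max_angle, previous + step))
```

With these assumed limits, a request to jump from centre (0.0) to full lock (1.0) moves the wheel only to 0.1 in a single step.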

"We constrain the policy to produce only actions that would also be feasible with a physical steering wheel."

Two cars were used in this test.

The first is the “Audi TT Cup ’16”. This car has a higher maximum speed, more tire grip, and faster acceleration. The second car is the “Mazda Demio XD Touring ’15”, which is slower, has less grip and accelerates more slowly.

The researchers also used a third setting, which features the Audi driving around a more challenging track.

The tracks and cars are used as reference settings to compare the team's approach with real human drivers.

Unlike classical state estimation, trajectory planning and optimal control methods, the researchers said, their approach doesn't rely on human intervention, human expert data, or explicit path planning. In other words, the AI plays and learns by itself.

"To the best of our knowledge this is the first time an autonomous car AI has beaten human experts in Gran Turismo Sport," one of the researchers said.

Previously, researchers have tried building, training and fiddling with numerous AIs, to see how good they can be.

For example, Tencent has developed an AI capable of defeating StarCraft II's built-in 'AI' in full matches. DeepMind then followed by creating an AI that also plays StarCraft II, and showed that it could match professional gamers, and came out as a clear undefeated champion.

DeepMind later trained that AI further, until it reached Grandmaster status in StarCraft II.

In short, researchers know that AI can play games. And with proper training and experience, computers, with reflexes and processing speed faster than a human's, should be capable of outperforming people at certain tasks.

And in this case, the researchers have proven that AIs can also drive faster than humans, even if only within a simulator game for now.