One of the things that make the world interesting is that objects have dimensions.

From the tiniest particles to the largest stars, everything has its own height, width, and depth. Those dimensions are what give things a sense of depth. Unfortunately for machine learning, examples that capture depth, the Z-axis that comes after X and Y, are scarce.

There is an endless stream of images for AIs to learn from. But training materials that include 3D models, which AIs need to learn about the world we live in, aren't as plentiful.

This is a problem Nvidia wants to solve, using a specialized AI it calls 'DIB-R', which stands for 'differentiable interpolation-based renderer'.

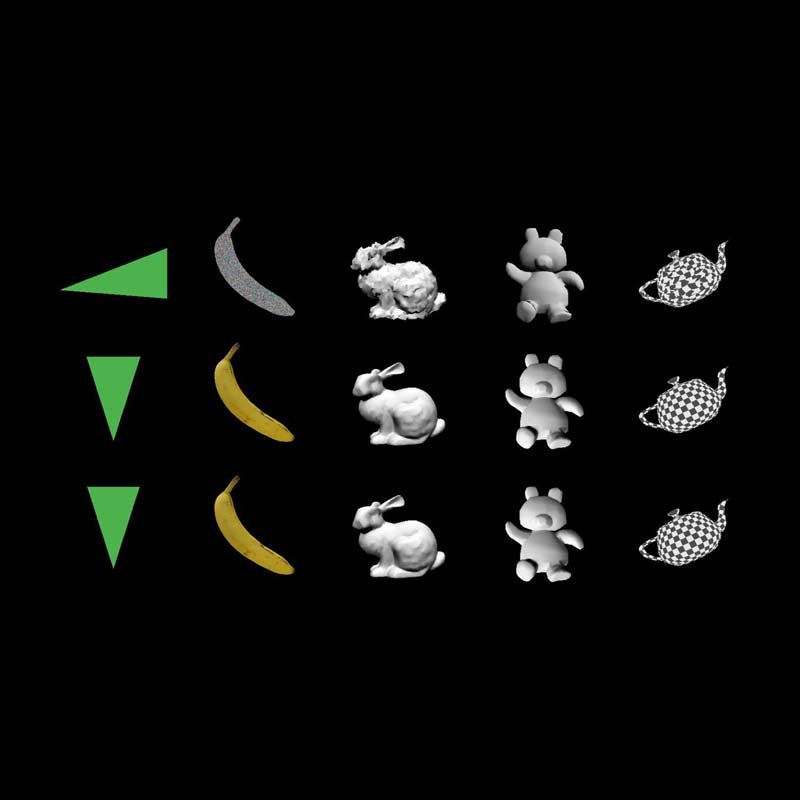

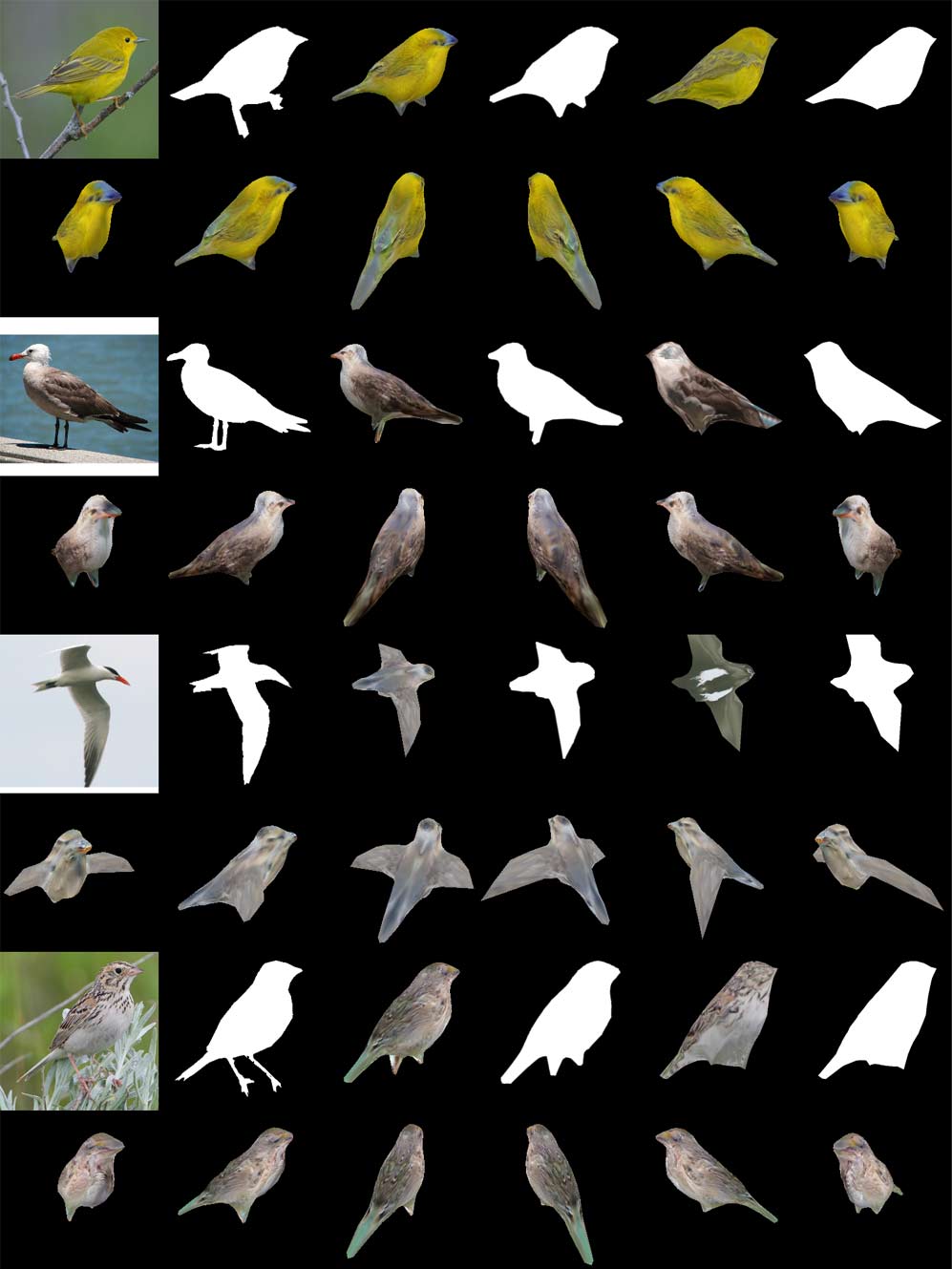

What it does is take a 2D picture of an object and predict what that object would look like in 3D.

To do this, DIB-R takes the 2D image it "sees" and makes inferences based on a 3D "understanding" of the world. This method is strikingly similar to how humans translate 2D input from their eyes into a 3D mental image.

According to Nvidia in its blog post:

"Machine learning models need this same capability so that they can accurately understand image data."

As for how the technology can be used, Nvidia continued by saying that:

Sanja Fidler, Nvidia’s director of AI and co-author on the team’s paper, said that:

With further development, the researchers hope to expand DIB-R to include functionality that would essentially make it a virtual reality renderer. Such a system should open up the possibility for AIs to create fully immersive 3D worlds in milliseconds using only photographs.

In other words, the AI technology can also be used in gaming, AR/VR, or object tracking systems.

There are other AIs that can predict 3D properties from 2D images, such as DeepMind's AI that learned how to turn flat images into 3D scenes, and the AI from researchers at the University of Nottingham and Kingston University that can create a 3D model of a person's face from a single photo.

But Fidler said that "none of them really was able to predict all these key properties together."

Because DIB-R can predict the shape, 3D geometry, color, and texture of an object, it is considered one of the first neural or deep learning architectures that can take 2D images and predict several key 3D properties at the same time.

The research was conducted by researchers at Nvidia, with help from researchers at the Vector Institute, the University of Toronto, and Aalto University.