OpenAI is known for its generative pre-training models that can learn world knowledge and process long-range of dependencies with long stretches of text.

But the world is not only occupied by text. According to its about page, OpenAI's mission is "to ensure that artificial general intelligence (AGI)" can benefit humanity. To pursue AGI, AI must exhibit general intelligence while performing tasks that are useful to humans.

But before becoming useful to humans in the real world, AIs need to first understand the real word.

To do that, its AI needs to go beyond just text.

And this time, OpenAI ventures its AI models to also include images.

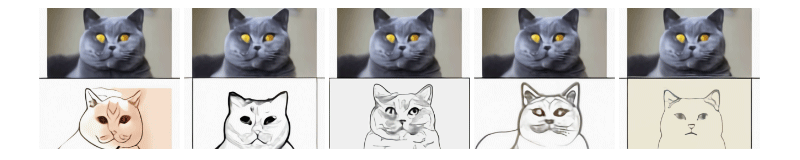

With what it calls 'DALL·E', OpenAI is developing AI model that can improve computer vision, and can also produce original images from only a text prompt.

Read: Artificial General Intelligence, And How Necessary Controls Can Help Us Prepare For Their Arrival

First of, DALL·E is a neural network that can "take any text and make an image out of it," says Ilya Sutskever, OpenAI co-founder and chief scientist.

OpenAI picked the name "DALL·E" as a portmanteau of the surrealist artist Salvador Dali and the yellow Pixar robot WALL-E. With the naming, OpenAI wants to fulfill the dream of having a computer that can create something using regular languages.

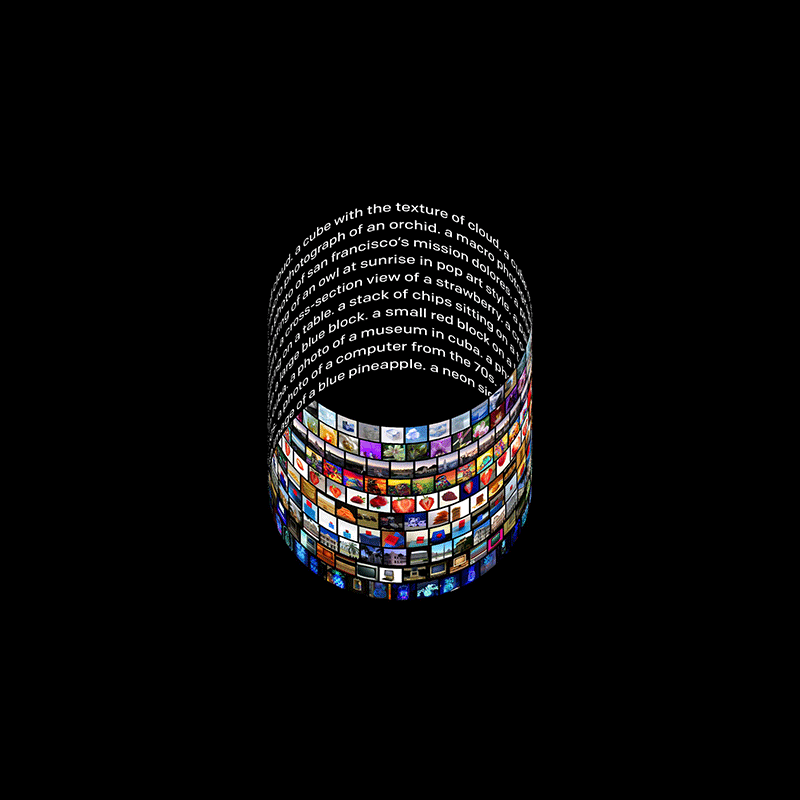

To do this, DALL·E operates a similar transformer model to the provenly-capable GPT-3 that can generate original passages of text based on a short prompt.

With that capacity, DALL·E can work alongside CLIP, another neural network OpenAI introduces, to "take any set of visual categories and instantly create very strong and reliable visually classifiable text descriptions," explained Sutskever.

Here, DALL·E and CLIP can improve existing computer vision techniques with less training and less expensive computational power.

"Last year, we were able to make substantial progress on text with GPT-3, but the thing is that the world isn't just built on text," said Sutskever. "This is a step towards the grander goal of building a neural network that can work in both images and text."

DALL·E works by taking prompt from its user. For example:

On its blog post, OpenAI wrote that:

<

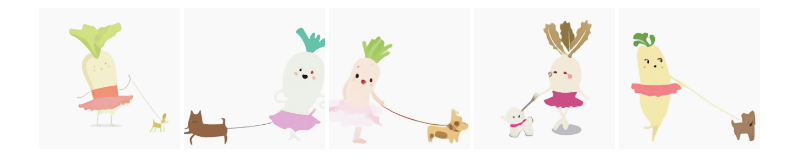

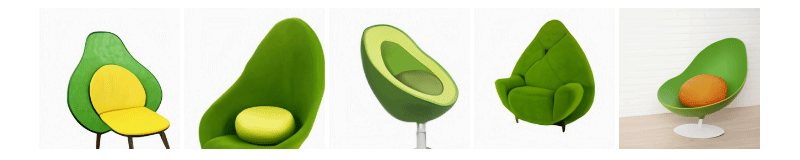

"It can take unrelated concepts that are nothing alike and put them together into a functional object," added Aditya Ramesh, the leader of the DALL·E team.

While DALL·E does this, CLIP helps DALL·E by identifying the images with comparatively little training, allowing DALL·E to caption pictures it encounters. But it's main purpose is efficiency, which has become a bigger issue in the field as the computational cost of training machine learning models.

For a long time, training AI involves a lot of computing power, thus consuming a lot of hardware power and electricity, which in turn is bad for the environment and cost.

Back in 2019 in a paper, researchers at the University of Massachusetts said that training large AI models can emit more than 626,000 pounds of carbon dioxide. This is an equivalent of nearly five times the lifetime emissions of the average car.

With DALL·E working alongside CLIP, OpenAI wants the creation of AIs capable of processing images, just like what its GPT-2 and GPT-3 did for text generation, but a lot friendlier to the environment, as well as cheaper.

At this time, OpenAI said that DALL·E has a bit of issue when associating between objects and their colors, and is depends on how the caption is phrased..

"DALL·E is prone to confusing the associations between the objects and their colors, and the success rate decreases sharply. We also note that DALL·E is brittle with respect to rephrasing of the caption in these scenarios: alternative, semantically equivalent captions often yield no correct interpretations," the team wrote.

Further reading: Paving The Roads To Artificial Intelligence: It's Either Us, Or Them