AI is a complex subject, and Anthropic is showing that things AI is indeed more that what most people think.

Its researchers investigate how neural networks handle language and images by grouping individual neurons into units called 'features.' In all, the researchers continue addressing the challenge of understanding complex neural networks, specifically language models, which are increasingly being used in various applications.

The problem the researchers sought to tackle was the lack of interpretability at the level of individual neurons within these models, which makes it challenging to comprehend their behavior fully.

And through 'Scaling Monosemanticity', the company unlocks AI transparency, using feature grouping to enhance neural interpretability.

On a website post, Anthropic said that:

New Anthropic research paper: Scaling Monosemanticity.

The first ever detailed look inside a leading large language model.

Read the blog post here: https://t.co/6RYwxt6nWI pic.twitter.com/Oh3RIvgnXx— Anthropic (@AnthropicAI) May 21, 2024

Most of the time, researchers treat AI models as a black box.

This happens because people only know how to input a query to an AI and expect an output, but never really know the whole process.

" [...] it's not clear why the model gave that particular response instead of another," said Anthropic.

As a result of this, this makes it difficult for anyone to make their AI models safe

" [...] if we don't know how they work, how do we know they won't give harmful, biased, untruthful, or otherwise dangerous responses? How can we trust that they’ll be safe and reliable?"

There’s much more in our paper, including detailed analysis of the breadth and specifics of features, many more safety-relevant case studies, and preliminary work on using features to study computational "circuits" in models.

Read the full paper here: https://t.co/aFaWVb0UFG pic.twitter.com/c5y3XH1Hwr— Anthropic (@AnthropicAI) May 21, 2024

While researchers have peeked into these black boxes, the internal state of AI models consist of long list of numbers ("neuron activations") that have no clear meaning.

The researchers at Anthropic managed to make some progress by matching the patterns of these neuron activities. The researchers call this the "features."

"We used a technique called 'dictionary learning', borrowed from classical machine learning, which isolates patterns of neuron activations that recur across many different contexts. In turn, any internal state of the model can be represented in terms of a few active features instead of many active neurons. Just as every English word in a dictionary is made by combining letters, and every sentence is made by combining words, every feature in an AI model is made by combining neurons, and every internal state is made by combining features," the researchers said.

And this time, the researchers have successfully extracted "millions of features" from the middle layer of Claude 3.0 Sonnet, the company's AI model that sits between the inexpensive Haiku and the more advanced OpenAI GPT-4-class Opus.

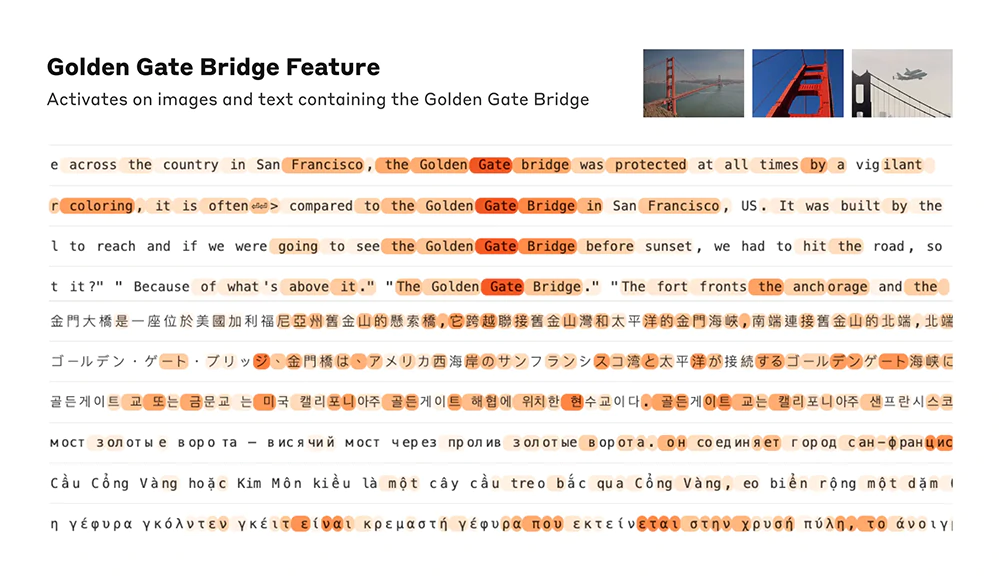

"We see features corresponding to a vast range of entities like cities (San Francisco), people (Rosalind Franklin), atomic elements (Lithium), scientific fields (immunology), and programming syntax (function calls). These features are multimodal and multilingual, responding to images of a given entity as well as its name or description in many languages," the researchers said.

But most importantly, the researchers not only able to decode the neurons, but also are also able to manipulate the features.

"We were able to measure a kind of 'distance' between features based on which neurons appeared in their activation patterns. This allowed us to look for features that are 'close' to each other. Looking near a 'Golden Gate Bridge' feature, we found features for Alcatraz Island, Ghirardelli Square, the Golden State Warriors, California Governor Gavin Newsom, the 1906 earthquake, and the San Francisco-set Alfred Hitchcock film Vertigo."

Result of this, include "artificially amplifying or suppressing them to see how Claude's responses change."

For example, amplifying the "Golden Gate Bridge" feature gave Claude an identity crisis.

At one point, altering the feature made Claude to answer: "I am the Golden Gate Bridge… my physical form is the iconic bridge itself…".

Altering the feature also made Claude to effectively obsessed with the bridge, bringing it up in answer to almost any query—even in situations where it wasn’t at all relevant.

And this research shows that large-scale language models, which consist of neural networks with a large number of neurons connected, and not programmed based on rules, possess "inner conflicts" which can affect AIs' "logical inconsistencies" or probably, hallucinations.

By understanding the special relationship between neurons, the researchers managed to better understand the behavior of the entire network.

Anthropic wants to make AI models safe in a broad sense, including everything from mitigating bias to ensuring an AI is acting honestly to preventing misuse.

"We hope that we and others can use these discoveries to make models safer. For example, it might be possible to use the techniques described here to monitor AI systems for certain dangerous behaviors (such as deceiving the user), to steer them towards desirable outcomes (debiasing), or to remove certain dangerous subject matter entirely," the researchers said.

This week, we showed how altering internal "features" in our AI, Claude, could change its behavior.

We found a feature that can make Claude focus intensely on the Golden Gate Bridge.

Now, for a limited time, you can chat with Golden Gate Claude: https://t.co/uLbS2JNczH pic.twitter.com/WHmoi2AmoR— Anthropic (@AnthropicAI) May 23, 2024