On the internet, people cannot physically harm one another. But with words alone, they can attack others in ways that sometimes cause more damage than physical pain.

Trolls and bullies promote violence against or threaten others on the basis of race, ethnicity, national origin, caste, sexual orientation, gender, gender identity, and religious affiliation. These categories usually cover most kinds of harassment, but Twitter goes further by adding three more.

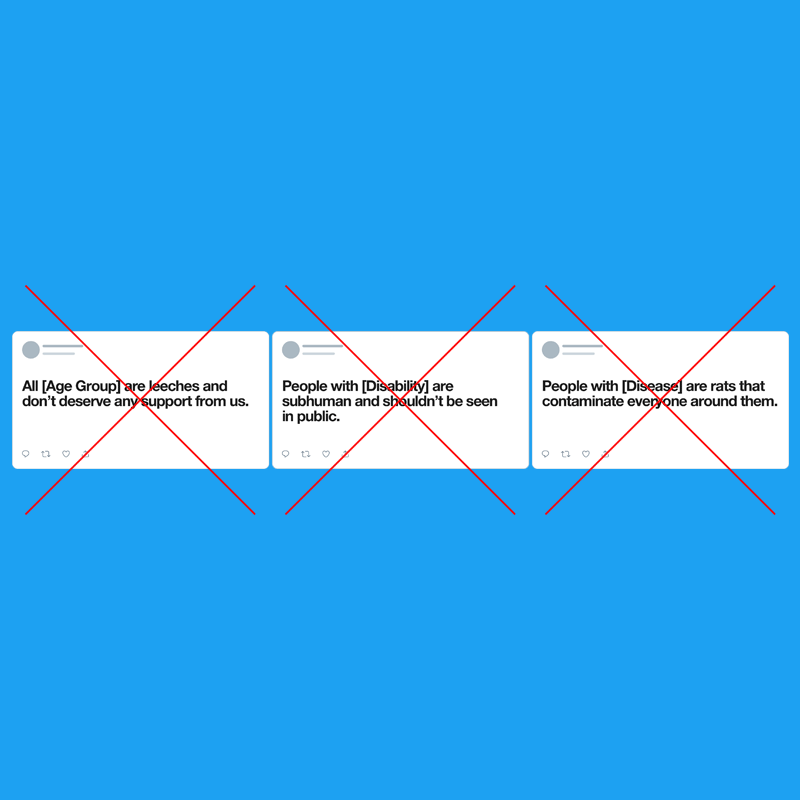

These are age, disability, and serious disease.

Twitter said it does not allow accounts whose purpose is to incite harm toward others on the basis of these categories. More broadly, the policy states that users may not use Twitter to incite violence or make threats on the basis of any of these characteristics.

According to Twitter on its updated Hateful Conduct Policy page:

> We continuously examine our rules to help make Twitter safer. Last year we updated our Hateful Conduct policy to address dehumanizing speech, starting with one protected category: religious groups. Now, we’re expanding to three more: age, disease and disability.
>
> Twitter Safety (@TwitterSafety), March 5, 2020

The move comes at a timely moment, as the world lives in fear of the deadly COVID-19.

For instance, by treating disease as a protected category under its hate speech rules, Twitter should be able to curb harassment that targets people already infected with the novel coronavirus. Combined with the existing protections for ethnicity and race, Twitter should also be able to act on tweets that target Asians, particularly Chinese people.

As for disability, it is any condition that makes it more difficult for a person to do certain activities or interact with the world around them. Whatever the cause of a person's disability, it gives no one a reason to hate them.

As for age, the internet is populated by people of all age groups.

Being older does not by itself entitle someone to demand respect from those who are younger. What Twitter is trying to address here are the age-based comments that often appear on social networks, such as claims that millennials are lazy or that baby boomers suck.

"If reported, Tweets that break this rule pertaining to age, disease and/or disability, sent before today will need to be deleted, but will not directly result in any account suspensions because they were Tweeted before the rule was in place," Twitter said in its blog post.

The effort began in 2018, when Twitter started asking for feedback to ensure it considered a wide range of perspectives and heard directly from the different communities and cultures that use Twitter around the globe.

Among the feedback it received, people asked Twitter to make its rules clearer. Others criticized Twitter's term “identifiable groups” as too broad, arguing that they should be allowed to use potentially hateful language when discussing groups that don’t necessarily fall into the protected categories, such as political groups, hate groups, and other non-marginalized groups.

Many people wanted to "call out hate groups in any way, any time, without fear."

Twitter realizes that it doesn't have all the answers, "which is why we have developed a global working group of outside experts to help us think about how we should address dehumanizing speech around more complex categories."

"All of this builds on our ongoing work with the Trust and Safety Council and our commitment to strengthening and focusing those partnerships. We agree that these are difficult areas to get right, so we want to be thoughtful and effective as we expand this rule," Twitter concluded.